AI for Financial Services: Use Cases, Compliance, and Implementation

Financial services organizations face a unique challenge: they must innovate faster than their competitors while operating under some of the world's strictest regulations. AI for financial services isn't a luxury—it's becoming essential to compete. But deploying AI in banking, insurance, and capital markets requires far more than just buying software.

This article explores how leading financial institutions are using AI to strengthen fraud detection, improve credit decisions, streamline claims processing, and navigate EU compliance frameworks. We'll cover the use cases that matter most, the regulatory landscape you need to understand, and a practical implementation roadmap.

For a comprehensive overview of AI strategy in financial services, see our AI for Finance Pillar.

What Is AI for Financial Services?

AI in financial services refers to the use of machine learning, natural language processing, and large language models to automate, augment, or optimize financial processes. This includes everything from detecting fraudulent transactions in milliseconds to underwriting insurance policies at scale, or analyzing market sentiment for algorithmic trading.

The distinction matters: AI for financial services isn't generic enterprise AI. It must handle:

- Regulatory compliance (EU AI Act, GDPR, CCPA, PSD2, SOX)

- High-stakes decisions (credit, lending, claims denial)

- Explainability requirements (regulators demand to know why an AI rejected a customer)

- Data sensitivity (financial records about individuals are personal data under GDPR)

- Operational resilience (downtime costs money per second)

Because of these constraints, financial institutions adopt AI more cautiously than tech companies, but when they deploy it well in core processes such as fraud detection and credit, the returns can be substantial.

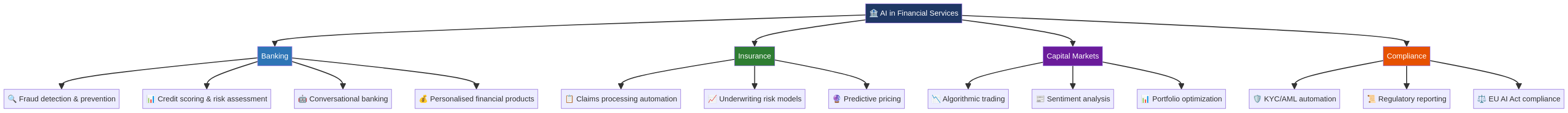

AI Use Cases Across Financial Services

The four pillars of AI deployment in financial services: Banking, Insurance, Capital Markets, and Compliance.

Banking: Personalization, Fraud, and Credit

Fraud Detection & Prevention

Banks process billions of transactions daily, far too many for manual review. AI models trained on transaction patterns, merchant behavior, and customer history can flag suspicious activity in real time, often before the transaction completes.

Some leading European banks report 30–40% fewer fraudulent transactions after deploying AI fraud models, along with significantly lower false positive rates than rule-based systems. The model learns each customer's spending habits and flags anomalies: a sudden £5,000 transfer to an unknown account at 3 AM is clearly worth investigating.
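To make the £5,000-at-3-AM example concrete, here is a minimal sketch of per-customer anomaly checks. It is a simple statistical baseline, not a trained fraud model; the `Transaction` fields, the z-score limit of 3, the 00:00 to 05:59 "night" window, and the two-signal review rule are all illustrative assumptions.

```python
from dataclasses import dataclass
from statistics import mean, stdev


@dataclass
class Transaction:
    amount: float   # GBP
    payee_id: str   # hypothetical payee identifier
    hour: int       # local hour of day, 0-23


NIGHT_HOURS = range(0, 6)  # assumption: 00:00-05:59 counts as "night"


def anomaly_reasons(history: list[Transaction], tx: Transaction,
                    z_limit: float = 3.0) -> list[str]:
    """Explain why tx is unusual compared with this customer's own history."""
    reasons = []
    amounts = [t.amount for t in history]
    if len(amounts) >= 2:
        mu, sigma = mean(amounts), stdev(amounts)
        if sigma > 0 and (tx.amount - mu) / sigma > z_limit:
            reasons.append("amount far above usual spend")
    if tx.payee_id not in {t.payee_id for t in history}:
        reasons.append("payee never seen before")
    if tx.hour in NIGHT_HOURS and not any(t.hour in NIGHT_HOURS for t in history):
        reasons.append("unusual time of day for this customer")
    return reasons


def should_review(history: list[Transaction], tx: Transaction) -> bool:
    # Hypothetical policy: two or more independent signals go to an analyst.
    return len(anomaly_reasons(history, tx)) >= 2


history = [Transaction(a, "grocer", h)
           for a, h in [(42.0, 18), (15.5, 12), (60.0, 19), (23.0, 9), (38.0, 17)]]
suspicious = Transaction(5000.0, "new-payee", 3)
normal = Transaction(40.0, "grocer", 18)
print(anomaly_reasons(history, suspicious))
print(should_review(history, normal))
```

Returning named reasons rather than a bare score also makes it easier for an analyst, and later a regulator, to see why a transaction was held.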

Credit Scoring & Underwriting

Traditional credit scoring relies on static factors: income, employment, existing debt. AI models incorporate thousands of signals: transaction patterns, payment history, even behavioral data. The result is faster decisions (minutes vs. weeks) and credit access for previously "unbanked" segments.

However, regulators now scrutinize AI lending models for bias. A model trained on historical lending data may perpetuate discrimination against protected groups. This is why explainable AI (XAI) is essential: the model must explain why someone was approved or denied.
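One common way to meet this requirement is an interpretable scorecard whose output decomposes into per-feature contributions that can be reported as reason codes. The sketch below assumes an already-fitted logistic regression on standardized features; the feature names, coefficients, and intercept are hypothetical, and because features are standardized, each contribution is measured relative to an average applicant.

```python
import math

# Hypothetical fitted logistic regression: log-odds of default per
# standard deviation of each feature. Illustrative values only.
INTERCEPT = -2.0
WEIGHTS = {
    "debt_to_income": 1.2,
    "missed_payments_12m": 0.9,
    "months_since_account_open": -0.6,
    "income_stability": -0.8,
}


def score_with_reasons(applicant: dict[str, float]) -> tuple[float, list[str]]:
    """Return probability of default and the features that raised it, largest first."""
    contributions = {f: w * applicant[f] for f, w in WEIGHTS.items()}
    log_odds = INTERCEPT + sum(contributions.values())
    p_default = 1.0 / (1.0 + math.exp(-log_odds))
    # Reason codes: only features that pushed this applicant towards default.
    ranked = sorted(contributions.items(), key=lambda fc: fc[1], reverse=True)
    reasons = [f for f, c in ranked if c > 0]
    return p_default, reasons


applicant = {"debt_to_income": 1.0, "missed_payments_12m": 2.0,
             "months_since_account_open": -0.5, "income_stability": 0.5}
p, reasons = score_with_reasons(applicant)
print(f"P(default) = {p:.2f}; main reasons: {reasons[:2]}")
```

Because the explanation is computed from the same coefficients that produce the score, it cannot drift apart from the decision, which is the main appeal of this design over post-hoc explanations.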

Conversational Banking & Personalization

Large language models (LLMs) power chatbots that understand customer intent in natural language. Instead of pressing menu options, a customer can ask: "What's my cashback on groceries this month?" The LLM retrieves relevant account data, understands the question, and responds naturally.

Larger banks are moving beyond chatbots to AI-powered personal financial advisors—systems that analyze a customer's spending, savings goals, and risk tolerance, then recommend products and investment adjustments tailored to that individual.

Insurance: Claims, Underwriting, and Pricing

Claims Processing Automation

Claims handling is often manual, slow, and error-prone. Customers submit documents (photos, medical reports, receipts), and adjudicators spend hours reviewing them. AI models trained on thousands of past claims can:

- Classify claim type (auto, health, property) automatically

- Extract key details (date of loss, coverage type, claimant info) from unstructured documents

- Detect fraud patterns (simultaneous claims, inflated amounts, staged incidents)

- Route to appropriate adjudicator or auto-approve if low-risk
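The routing step in the list above can be sketched as a small decision function that runs after the classification, extraction, and fraud models. The `Claim` fields, the fraud-score scale, and every threshold here are hypothetical; in practice they would be set during validation and agreed with the claims and risk teams.

```python
from dataclasses import dataclass, field


@dataclass
class Claim:
    claim_type: str       # output of a claim-type classifier, e.g. "auto"
    amount: float         # claimed amount
    fraud_score: float    # 0..1 from a fraud model (assumed calibrated)
    fraud_flags: list[str] = field(default_factory=list)  # rule hits, e.g. "duplicate_invoice"


# Hypothetical thresholds.
AUTO_APPROVE_MAX_AMOUNT = 2_000.0
AUTO_APPROVE_MAX_FRAUD = 0.10
INVESTIGATE_MIN_FRAUD = 0.70


def route(claim: Claim) -> str:
    """Decide where a claim goes next."""
    if claim.fraud_score >= INVESTIGATE_MIN_FRAUD or claim.fraud_flags:
        return "special_investigations"
    if claim.amount <= AUTO_APPROVE_MAX_AMOUNT and claim.fraud_score <= AUTO_APPROVE_MAX_FRAUD:
        return "auto_approve"
    return f"adjudicator_queue:{claim.claim_type}"


print(route(Claim("auto", 800.0, 0.03)))
print(route(Claim("property", 15_000.0, 0.20)))
print(route(Claim("health", 500.0, 0.05, ["duplicate_invoice"])))
```

Checking fraud signals before the auto-approve rule means a small but suspicious claim is never paid automatically.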

A mid-sized insurer automating claims processing reported 40% faster settlement times and 25% fewer fraudulent claims.

Risk Assessment & Underwriting

Insurance underwriting is risk assessment: how likely is a claim, and how much premium should we charge? AI models trained on claims history, policyholder data, and external risk factors (weather patterns, IoT sensor data from homes/cars) can price risk more accurately than actuarial tables alone.

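Parametric insurance, where payouts are triggered automatically when objective conditions are met (e.g., a hurricane reaching Category 4 within a defined area), relies on probabilistic models of how often those conditions occur; AI is increasingly used alongside traditional catastrophe models to estimate these probabilities and set thresholds.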
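A parametric contract reduces to two pieces of logic: a trigger checked against objective data, and a price based on how likely the trigger is. The minimal sketch below assumes at most one payout per year, a trigger defined by storm category and distance from the insured site, and an annual trigger probability supplied by whatever catastrophe or AI model the insurer uses; the 30% loading is an illustrative assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ParametricPolicy:
    payout: float        # fixed amount paid when the trigger fires
    min_category: int    # e.g. 4 means "Category 4 or stronger"
    radius_km: float     # storm must pass within this distance of the site


def triggered(policy: ParametricPolicy, storm_category: int, distance_km: float) -> bool:
    """Objective trigger: no loss adjustment needed."""
    return storm_category >= policy.min_category and distance_km <= policy.radius_km


def technical_premium(policy: ParametricPolicy, annual_trigger_prob: float,
                      loading: float = 0.30) -> float:
    """Expected annual payout plus a loading for expenses, capital, and profit."""
    expected_loss = annual_trigger_prob * policy.payout
    return expected_loss * (1.0 + loading)


policy = ParametricPolicy(payout=1_000_000.0, min_category=4, radius_km=50.0)
print(triggered(policy, storm_category=4, distance_km=30.0))
print(triggered(policy, storm_category=3, distance_km=10.0))
print(technical_premium(policy, annual_trigger_prob=0.02))
```

The model's job is concentrated in one number, `annual_trigger_prob`; everything else is contract mechanics, which is why the quality of the probability estimate drives the product.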

Predictive Pricing

AI enables dynamic, personalized pricing. Insurers can segment customers by risk profile and adjust premiums accordingly. A driver with clean history and good vehicle telematics pays less than an identical peer with previous claims—even if both have the same age and location.
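Many insurers express pricing as a base premium multiplied by rating factors (relativities), the structure a log-link generalized linear model produces; AI models are often used to estimate or refine those factors. In this minimal sketch the base premium, the telematics bands, and the claims-history factors are all hypothetical, and the two drivers' shared age and location are assumed to be folded into the base premium.

```python
# Hypothetical rating table: premium = base x product of relativities.
BASE_PREMIUM = 500.0  # covers the shared age/location profile in this example
TELEMATICS = {"good": 0.85, "average": 1.00, "poor": 1.25}
PRIOR_CLAIMS_3Y = {0: 1.00, 1: 1.30, 2: 1.60}  # key 2 means "2 or more"


def premium(telematics_band: str, prior_claims: int) -> float:
    claims_factor = PRIOR_CLAIMS_3Y[min(prior_claims, 2)]  # cap at the top band
    return round(BASE_PREMIUM * TELEMATICS[telematics_band] * claims_factor, 2)


careful_driver = premium("good", prior_claims=0)
peer_with_claims = premium("average", prior_claims=1)
print(careful_driver, peer_with_claims)
```

The multiplicative form keeps each factor's effect easy to state ("one prior claim adds 30%"), which helps when pricing decisions have to be explained.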

Capital Markets: Trading, Analytics, and Risk

Algorithmic Trading & Sentiment Analysis

Hedge funds and asset managers use AI to analyze market microstructure: trade execution patterns, order flow imbalances, and market sentiment (extracted from news, social media, earnings calls). LLMs parse thousands of news articles per second, detecting market-moving events faster than human traders.

The most latency-sensitive strategies still favor firms with significant capital and compute, but AI tooling has lowered the barrier to entry for mid-sized firms.

Portfolio Optimization

AI models can rebalance portfolios dynamically based on market conditions, correlation shifts, and risk constraints. Instead of fixed quarterly rebalancing, they can rebalance whenever drift or risk exceeds set limits, reducing portfolio drift and improving risk-adjusted returns, provided the gains outweigh the extra transaction costs.
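A simple way to see the trade-off between tighter risk control and trading costs is a tolerance-band rule: do nothing until an asset's weight drifts more than a set band from its target, then trade back to target. This is a plain threshold rule rather than an optimizer; an AI system might adjust the targets or the band dynamically, and the 5-percentage-point band and asset names below are assumptions.

```python
def rebalance_trades(holdings: dict[str, float], targets: dict[str, float],
                     band: float = 0.05) -> dict[str, float]:
    """Amounts to buy (+) or sell (-) to restore target weights.

    Returns {} while every asset is within `band` of its target weight,
    so small drifts incur no trading costs.
    """
    total = sum(holdings.values())
    weights = {a: holdings.get(a, 0.0) / total for a in targets}
    if max(abs(weights[a] - targets[a]) for a in targets) <= band:
        return {}
    return {a: targets[a] * total - holdings.get(a, 0.0) for a in targets}


targets = {"equities": 0.60, "bonds": 0.40}
print(rebalance_trades({"equities": 62_000.0, "bonds": 38_000.0}, targets))
print(rebalance_trades({"equities": 70_000.0, "bonds": 30_000.0}, targets))
```

Widening the band means fewer, cheaper rebalances at the cost of more drift from the target allocation.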

Counterparty Risk & Stress Testing

Banks must stress-test their portfolios against hypothetical crises: interest rate shocks, credit events, geopolitical disruptions. AI simulations run thousands of scenarios and identify tail risks that static models miss.
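To illustrate how scenario simulation exposes tail risk, the sketch below runs a Monte Carlo simulation of credit losses using a one-factor Gaussian copula, a standard credit-portfolio model in which a shared "economy" factor makes defaults cluster in bad scenarios. This is a swapped-in classical technique rather than a specific AI method, and the portfolio (50 equal loans, 2% default probability, 60% loss given default, 0.2 asset correlation) is hypothetical. Comparing it with the same portfolio under independent defaults shows the tail a simpler model would miss.

```python
import math
import random
from statistics import NormalDist


def simulate_losses(exposures: list[float], default_probs: list[float], rho: float,
                    lgd: float = 0.6, n_scenarios: int = 10_000, seed: int = 7) -> list[float]:
    """Portfolio loss per scenario under a one-factor Gaussian copula."""
    rng = random.Random(seed)
    thresholds = [NormalDist().inv_cdf(p) for p in default_probs]
    losses = []
    for _ in range(n_scenarios):
        z = rng.gauss(0.0, 1.0)  # systematic factor shared by all borrowers
        loss = 0.0
        for exposure, threshold in zip(exposures, thresholds):
            x = math.sqrt(rho) * z + math.sqrt(1.0 - rho) * rng.gauss(0.0, 1.0)
            if x < threshold:  # this borrower defaults in this scenario
                loss += exposure * lgd
        losses.append(loss)
    return losses


def var_and_es(losses: list[float], level: float = 0.99) -> tuple[float, float]:
    """Value at risk, and expected shortfall as the mean of the worst (1 - level) scenarios."""
    ordered = sorted(losses)
    idx = int(level * len(ordered))
    tail = ordered[idx:]
    return ordered[idx], sum(tail) / len(tail)


exposures = [1_000_000.0] * 50
pds = [0.02] * 50
var_corr, es_corr = var_and_es(simulate_losses(exposures, pds, rho=0.2))
var_indep, es_indep = var_and_es(simulate_losses(exposures, pds, rho=0.0))
print(f"99% VaR correlated: {var_corr:,.0f}  independent: {var_indep:,.0f}")
```

Both runs have the same expected loss; only the correlation differs, so the gap between the two VaR figures is purely the clustering of defaults in bad scenarios.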

Compliance: Automation, Monitoring, and Audit

This is where regulation meets AI most directly. Financial institutions must comply with dozens of frameworks, and regulators increasingly scrutinize AI use within compliance itself.

KYC/AML Automation

Know Your Customer (KYC) and Anti-Money Laundering (AML) are labor-intensive: verify customer identity, check sanctions lists, assess risk, monitor for suspicious activity. AI accelerates this:

- Identity verification: Computer vision models verify government-issued ID documents automatically

- Risk scoring: Models assess customer risk based on profile, transaction behavior, and external lists

- Transaction monitoring: Flags suspicious patterns (structuring, rapid transfers to high-risk jurisdictions)

- Beneficial ownership: NLP parses corporate documents to identify true beneficial owners
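Transaction monitoring for structuring can be illustrated with a classic rule: several cash deposits just below a reporting threshold within a short window. ML-based monitoring usually learns richer patterns than this, but rules like it remain a common baseline and model input. The threshold, the 80% "just below" band, the seven-day window, and the three-deposit minimum are illustrative assumptions, not any jurisdiction's legal values.

```python
from datetime import datetime, timedelta

# Illustrative parameters only.
REPORT_THRESHOLD = 10_000.0
NEAR_FRACTION = 0.8          # "just below" = 80-100% of the threshold
WINDOW = timedelta(days=7)
MIN_COUNT = 3


def structuring_alerts(deposits: list[tuple[datetime, float]]) -> list[datetime]:
    """Timestamps at which MIN_COUNT near-threshold deposits fall within WINDOW."""
    near = sorted(ts for ts, amount in deposits
                  if NEAR_FRACTION * REPORT_THRESHOLD <= amount < REPORT_THRESHOLD)
    alerts, start = [], 0
    for end, ts in enumerate(near):
        while ts - near[start] > WINDOW:  # slide the window's left edge forward
            start += 1
        if end - start + 1 >= MIN_COUNT:
            alerts.append(ts)
    return alerts


day0 = datetime(2024, 3, 1)
deposits = [(day0, 9_500.0), (day0 + timedelta(days=2), 9_000.0),
            (day0 + timedelta(days=4), 9_800.0), (day0 + timedelta(days=5), 2_000.0),
            (day0 + timedelta(days=20), 9_700.0)]
print(structuring_alerts(deposits))
```

An alert here is a prompt for investigation, not a finding; the compliance team still decides what happens next.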

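The EU's 2024 AML package (a directly applicable AML Regulation, a sixth AML Directive, and a new EU Anti-Money Laundering Authority, AMLA) tightens AML requirements across the board. Institutions that use AI to meet them should expect supervisors to examine how those systems are governed, even as AI becomes essential to handling the compliance burden.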

Regulatory Reporting

Banks file dozens of regulatory reports under frameworks such as Basel III (implemented in the EU through CRR/CRD, e.g., COREP and FINREP), PSD2, MiFID II/MiFIR, and EMIR. These require extracting data, performing calculations, and formatting results according to regulatory schemas. AI can help automate template population and validation, reducing manual errors.

EU AI Act Compliance

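This is the critical shift. The EU AI Act, which entered into force in August 2024 and applies in phases through 2027, classifies AI systems by risk level. In financial services, its high-risk list (Annex III) covers AI used to assess the creditworthiness or establish the credit score of individuals, and AI used for risk assessment and pricing in life and health insurance; AI used to detect financial fraud is explicitly excluded from the credit-scoring category. High-risk systems require: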

- Documentation of training data, model architecture, and testing

- Bias and fairness audits

- Human oversight for high-stakes decisions

- Transparency to customers (they must know they were assessed by AI)

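Non-compliance is costly: breaching the high-risk obligations can bring fines of up to €15 million or 3% of worldwide annual turnover, whichever is higher, and prohibited practices up to €35 million or 7%. This is not optional.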

EU Regulatory Landscape for AI in Finance

Understanding the regulatory stack is essential before deploying AI in financial services.

The EU AI Act (2024/1689)

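Entered into force on 1 August 2024 and applies in phases:

- February 2025: Bans on unacceptable-risk practices (e.g., social scoring, certain biometric identification uses) and AI literacy obligations apply

- August 2025: Obligations for providers of general-purpose AI (GPAI) models, such as the LLMs behind many chatbots, apply

- August 2026: Most remaining provisions apply, including the requirements for high-risk systems in Annex III such as credit scoring and life and health insurance pricing

- August 2027: Requirements for high-risk AI in products covered by EU product-safety legislation apply, and GPAI models already on the market before August 2025 must comply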

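If your model assesses the creditworthiness of individuals, it is high-risk, and you must demonstrate that you've tested for bias, documented your data sources, and implemented effective human oversight, such as human review of borderline decisions. Fraud detection models fall outside this category, although GDPR and model risk rules still apply to them.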

GDPR & Data Privacy

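Financial data about individuals is personal data. Using AI to process it requires a valid legal basis (e.g., contract, legal obligation, consent, or legitimate interests). Data subjects have rights of access (including meaningful information about the logic of automated decisions), rectification, and erasure, and under Article 22 they can obtain human intervention in, and contest, significant decisions made solely by automated means.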

PSD2 & Open Banking

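PSD2 requires banks to give licensed third-party providers access to customers' payment accounts, with the customer's consent, typically through dedicated APIs. This lets fintechs build on bank infrastructure and creates both opportunities (richer data for AI) and risks (more systems, more compliance surfaces).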

Basel III & Operational Risk

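Banking supervisors expect AI models to be covered by existing model risk and operational risk frameworks (for example, the Basel Committee's operational risk principles, the PRA's SS1/23 in the UK, and SR 11-7 in the US), and in the EU the Digital Operational Resilience Act (DORA) adds ICT risk requirements. You must document: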

- Model governance (who approves deployment, retraining, monitoring)

- Model monitoring (is the model still accurate? Has feature drift occurred?)

- Model risk (what happens if it fails)

- Audit trail (every decision must be traceable)
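Model monitoring is often operationalized with the population stability index (PSI), which compares the distribution of a score or feature in production with its distribution at training time: PSI is the sum over bins of (actual share - expected share) x ln(actual share / expected share). The sketch uses equal-width bins over the baseline range (quantile bins are also common), and the 0.1 and 0.25 alert levels are a widely used industry rule of thumb, not a regulatory requirement.

```python
import math


def population_stability_index(expected: list[float], actual: list[float],
                               bins: int = 10, eps: float = 1e-6) -> float:
    """PSI between a baseline sample (e.g. training-time scores) and a recent one."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def shares(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            i = int((v - lo) / width)
            counts[min(max(i, 0), bins - 1)] += 1  # clamp values outside the baseline range
        return [max(c / len(values), eps) for c in counts]  # eps avoids log(0)

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def drift_status(psi: float) -> str:
    # Common rule-of-thumb cut-offs; tune to your own model risk policy.
    if psi < 0.1:
        return "stable"
    return "monitor" if psi < 0.25 else "investigate"


baseline = [i / 1000 for i in range(1000)]
recent = [min(v + 0.3, 0.999) for v in baseline]  # simulated shift in production scores
print(drift_status(population_stability_index(baseline, baseline)))
print(drift_status(population_stability_index(baseline, recent)))
```

Logging each PSI value with its date and model version also contributes directly to the audit trail.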

Implementation Challenges Specific to Financial Services

1. Data Quality & Availability

Finance runs on legacy systems that date back decades. Credit data, transaction history, and risk models are scattered across disparate databases, and integrating these silos can take 6–12 months before you can train a single model.

Also, historical financial data is biased. If your bank has systematically denied credit to certain groups, training on that data will perpetuate that bias. You must clean and balance data—a non-trivial effort.
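One established pre-processing technique for the "balance" step is reweighing (Kamiran and Calders): each training example gets weight P(group) x P(outcome) / P(group, outcome), so that in the weighted data outcomes no longer depend on group membership. It does not correct mislabeled historical decisions, and the groups and outcomes below are toy data; treat it as one option among several rather than a complete fix.

```python
from collections import Counter


def reweighing_weights(groups: list[str], labels: list[int]) -> list[float]:
    """Weight = P(group) * P(label) / P(group, label), estimated from the data."""
    n = len(labels)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    return [(group_counts[g] / n) * (label_counts[y] / n) / (joint_counts[(g, y)] / n)
            for g, y in zip(groups, labels)]


def weighted_positive_rate(group: str, groups: list[str], labels: list[int],
                           weights: list[float]) -> float:
    rows = [(y, w) for g, y, w in zip(groups, labels, weights) if g == group]
    return sum(y * w for y, w in rows) / sum(w for _, w in rows)


# Toy history: group A approved 4 of 6 times, group B only 1 of 4 times.
groups = ["A"] * 6 + ["B"] * 4
labels = [1, 1, 1, 1, 0, 0] + [1, 0, 0, 0]
weights = reweighing_weights(groups, labels)
print(weighted_positive_rate("A", groups, labels, weights),
      weighted_positive_rate("B", groups, labels, weights))
```

The weights are then passed to any learner that accepts per-sample weights, leaving the underlying records untouched for audit purposes.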

2. Model Explainability

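Consumers denied credit are often entitled to reasons (for example, adverse action notices under the US Equal Credit Opportunity Act, or GDPR safeguards for automated decisions in the EU), and opaque decisions invite complaints and litigation. Supervisors likewise expect a bank to explain why its fraud model flagged a transaction. This means you can't simply deploy a deep neural network and call it done.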

Explainable AI (XAI) techniques—SHAP, LIME, feature importance—add complexity. Some institutions build two models: a high-accuracy black-box model for scoring, plus an interpretable model (decision tree, logistic regression) for explaining the decision.
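Before relying on any explanation method, it helps to see what a model-agnostic one actually measures. The sketch below implements permutation feature importance: shuffle one feature at a time and record how much accuracy drops. It is a global explanation (which features matter overall), not a per-decision one like SHAP or LIME, and the black-box model and data are toy stand-ins.

```python
import random
from collections.abc import Callable

Model = Callable[[list[float]], int]


def accuracy(model: Model, X: list[list[float]], y: list[int]) -> float:
    return sum(model(row) == label for row, label in zip(X, y)) / len(y)


def permutation_importance(model: Model, X: list[list[float]], y: list[int],
                           n_repeats: int = 5, seed: int = 0) -> list[float]:
    """Mean accuracy drop when each feature column is randomly shuffled."""
    rng = random.Random(seed)
    baseline = accuracy(model, X, y)
    importances = []
    for j in range(len(X[0])):
        drops = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)  # break the link between feature j and the label
            X_perm = [row[:j] + [v] + row[j + 1:] for row, v in zip(X, column)]
            drops.append(baseline - accuracy(model, X_perm, y))
        importances.append(sum(drops) / n_repeats)
    return importances


def black_box(row: list[float]) -> int:
    # Toy stand-in for an opaque model: it only uses feature 0.
    return int(row[0] > 0.5)


data_rng = random.Random(1)
X = [[data_rng.random(), data_rng.random()] for _ in range(500)]
y = [black_box(row) for row in X]
print(permutation_importance(black_box, X, y))
```

In practice you would compute the drop on held-out data and with the metric your validation plan specifies (e.g., AUC rather than accuracy).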

3. Change Management & Risk Aversion

Banks are risk-averse by design. Regulators scrutinize every new system. Deploying AI requires executive sponsorship, board approval in some cases, and documented risk management. The implementation timeline for a high-risk AI system in a major bank: 18–24 months.

4. Vendor Lock-in

Many financial institutions default to large vendors (SAS, Oracle, IBM) for AI compliance and governance infrastructure. This reduces risk but increases cost and limits customization. Smaller institutions or startups may have more freedom but must invest in building compliance infrastructure themselves.

5. Talent Shortage

Data scientists fluent in both AI and financial regulation are rare. Hiring, or renting expertise from external partners, is expensive. Budget accordingly.

Implementation Roadmap: From Proof of Concept to Production

Phase 1: Define & Scope (Months 1–2)

Choose a high-impact, lower-risk use case first:

- Fraud detection (clear ROI, historical data available)

- Claims classification (straightforward pattern recognition)

- Not credit scoring or pricing (high-risk under the EU AI Act from the outset, high bias risk)

Define:

- Success metrics (fraud caught, false positives, processing time saved)

- Data sources (which systems feed the model)

- Regulatory requirements (AI Act classification, GDPR obligations)

- Compliance owner (this person must sign off before deployment)

Phase 2: Design & Build (Months 2–6)

Build a proof-of-concept (PoC) model:

- Source and clean historical data

- Train initial model(s), benchmark against baseline

- Conduct bias audit (test model performance across demographic groups)

- Document all decisions (data, method, results)
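A first-pass bias audit can be as simple as comparing outcomes across groups. The sketch below computes each group's approval rate and true positive rate (the share of applicants who repaid that the model approved), plus the ratio of the lowest to the highest approval rate. It assumes repayment outcomes are known for every applicant, which in lending usually requires a historical or reject-inference dataset; the 0.8 reference point is the US "four-fifths" rule of thumb from employment discrimination analysis, a screening heuristic rather than an EU AI Act threshold; and the data is toy data.

```python
from collections import defaultdict


def group_metrics(groups: list[str], repaid: list[int], approved: list[int]) -> dict:
    """Approval rate and true positive rate per group (1 = repaid / approved)."""
    counts = defaultdict(lambda: {"n": 0, "approved": 0, "repaid": 0, "approved_and_repaid": 0})
    for g, r, a in zip(groups, repaid, approved):
        c = counts[g]
        c["n"] += 1
        c["approved"] += a
        c["repaid"] += r
        c["approved_and_repaid"] += r * a
    return {g: {"approval_rate": c["approved"] / c["n"],
                "tpr": c["approved_and_repaid"] / c["repaid"] if c["repaid"] else float("nan")}
            for g, c in counts.items()}


def disparate_impact_ratio(metrics: dict) -> float:
    rates = [m["approval_rate"] for m in metrics.values()]
    return min(rates) / max(rates)


FOUR_FIFTHS = 0.8  # screening heuristic, not a legal threshold in the EU

groups = ["A"] * 5 + ["B"] * 5
repaid = [1, 1, 1, 0, 0, 1, 1, 1, 0, 0]
approved = [1, 1, 1, 1, 0, 1, 1, 0, 0, 0]
metrics = group_metrics(groups, repaid, approved)
ratio = disparate_impact_ratio(metrics)
print(metrics, ratio, "flag for review" if ratio < FOUR_FIFTHS else "ok")
```

Here both groups repay at the same rate, yet group B is approved half as often and only two of its three good applicants get through, exactly the kind of gap the audit should surface and document.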

Typical effort: 2–3 data scientists, 1 compliance lead, 1 domain expert.

Phase 3: Validate & Approve (Months 6–9)

Before deployment:

- Backtest the model on held-out historical data, ideally an out-of-time sample from a later period than the training data

- Conduct explainability audit (can you explain decisions?)

- Regulatory pre-review (if applicable)

- Board/executive sign-off

This phase is heavy on governance, light on coding.

Phase 4: Pilot (Months 9–12)

Deploy to a limited set of users or transactions:

- Real-world performance often differs from backtest (data drift, concept drift)

- Establish monitoring dashboards (is the model still accurate?)

- Implement human-in-the-loop review (someone reviews AI decisions for quality)

Phase 5: Scale (Months 12+)

Gradual rollout:

- Expand to more users, more data

- Automate retraining (monthly or quarterly), revalidating each new version before release

- Integrate with downstream systems (workflows, decisioning engines)

Full enterprise deployment of a regulated AI system: 18–24 months from idea to production.

Key Success Factors

- Executive sponsorship – Compliance, risk, and technology leaders must all agree

- Data governance – Know your data sources, quality, and bias

- Compliance-first design – Explainability and auditability from day one

- Monitoring & governance – The model doesn't stop needing oversight once deployed

- Vendor & talent strategy – Build vs. buy, hire vs. partner

The Future of AI in Financial Services

The next 2–3 years will see:

- Stronger regulation: Enforcement of the EU AI Act ramps up, while UK, US, and APAC regulators develop their own approaches

- Consolidation: Smaller fintechs acquire AI expertise or get acquired by larger players

- Generative AI: More financial institutions will use LLMs for customer service, document analysis, and research

- Real-time compliance: AI systems that continuously monitor for regulatory drift

- Decentralized finance (DeFi) & AI: Early-stage, but AI algorithms may play a growing role in governing DeFi protocols

For financial services organizations, the question is no longer "Should we invest in AI?" but rather "How do we do it responsibly, compliantly, and at scale?"

FAQ

Q: Is AI in financial services compliant with GDPR?

A: It can be, provided you have a valid legal basis (e.g., contract, legal obligation, consent, or legitimate interests), implement proper data protection measures (data minimization, pseudonymization, audit trails), and respect the Article 22 safeguards for significant automated decisions. The EU AI Act adds requirements on top of GDPR.

Q: How long does it take to implement AI fraud detection?

A: A proof-of-concept takes 2–3 months. Piloting takes 3–6 months. Full deployment with monitoring and compliance governance: 12–18 months for most banks.

Q: Can AI help with AML compliance?

A: Absolutely. AI accelerates KYC verification, transaction monitoring, and beneficial ownership identification. However, AML remains a human-oversight domain: AI surfaces suspicious activity, and compliance teams investigate and decide whether to report it.

Q: What's the biggest risk of deploying AI in banking?

A: Bias leading to discrimination. A model trained on biased historical lending data can learn to deny credit to the same groups the bank historically discriminated against, even when protected attributes are excluded, because other features act as proxies. This invites lawsuits and regulatory action.

Q: Should we build AI in-house or buy a vendor solution?

A: For mission-critical systems (fraud, credit), a hybrid approach works: vendor platform for foundational governance + in-house data scientists for model training and fine-tuning. This balances risk and customization.

Q: How do we stay compliant with the EU AI Act?

A: Document everything (data, method, testing, bias audits), implement human oversight for high-stakes decisions, provide transparency to customers, and monitor the model post-deployment. Start now: obligations for high-risk systems such as credit scoring apply from August 2026.