AI for Healthcare: Use Cases, Benefits, and Implementation Guide

Healthcare is at an inflection point. Clinicians are burned out and drowning in administrative work. Imaging backlogs mean patients wait weeks for diagnoses. Bringing a new drug to market takes 10+ years and, by one widely cited estimate, costs about $2.6 billion. Healthcare systems operate on razor-thin margins while managing data from millions of patients, thousands of devices, and constant regulatory change.

AI for healthcare addresses each of these challenges at scale. AI algorithms read medical images with radiologist-level accuracy. AI triage systems redirect patients to appropriate care levels, reducing ER wait times. AI drug discovery platforms screen millions of molecular compounds in weeks. AI billing systems detect fraud and optimize revenue cycle.

Unlike AI in most other industries, healthcare AI carries unusually high stakes: lives depend on accuracy, bias in clinical AI can harm patients, and privacy regulations demand extreme care. Yet the potential payoff (earlier diagnoses, faster treatment, lower costs, better outcomes) makes healthcare one of AI's most important applications.

This guide covers real-world AI use cases across healthcare, implementation patterns, ROI benchmarks, and critical EU regulatory considerations (EU MDR, GDPR, and emerging AI Act rules) that every healthcare organization must address.

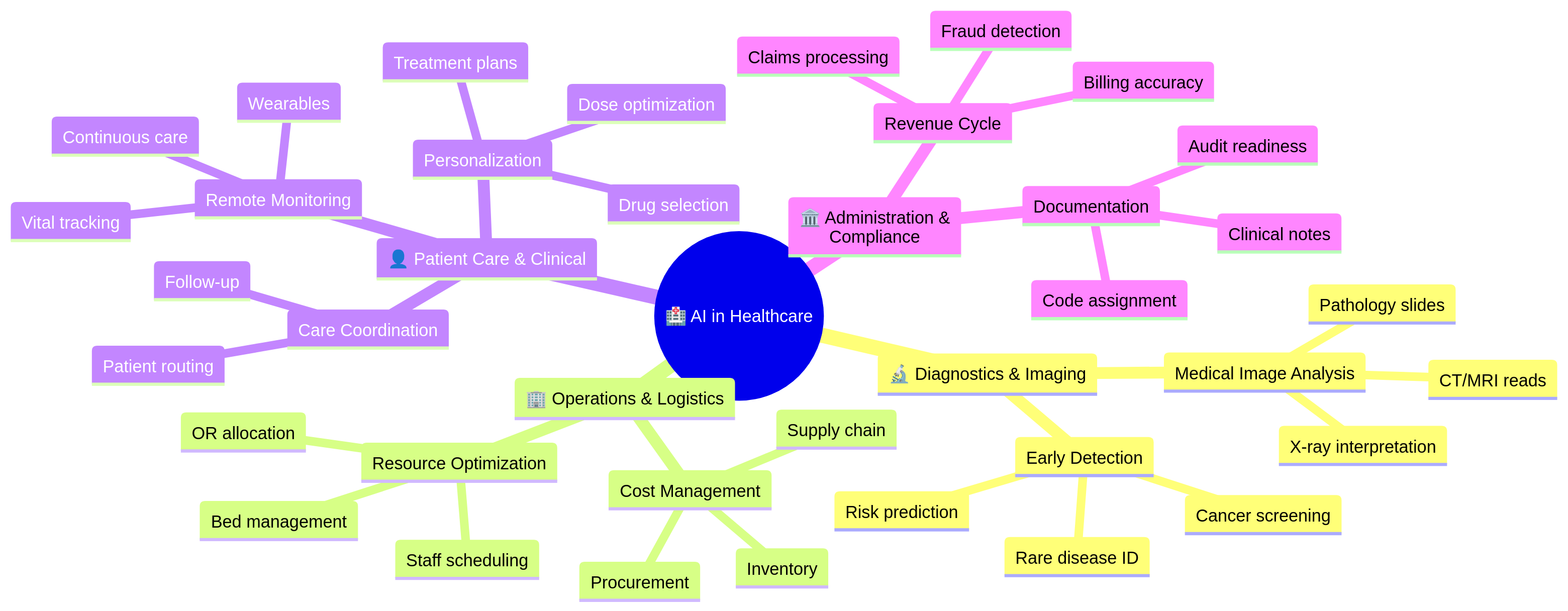

How AI Transforms Healthcare Across Domains

AI impact spans four major healthcare domains: diagnostics and medical imaging, operations, clinical and patient care, and administration.

Each domain offers distinct ROI and implementation complexity.

1. Diagnostics and Medical Imaging: Where AI Excels

Medical imaging is AI's strongest healthcare application. Radiologists must interpret thousands of images annually, a cognitively demanding and error-prone task. On well-defined detection tasks, AI algorithms trained on millions of images can match or exceed radiologist accuracy.

Medical Image Analysis

Chest X-ray Interpretation: Detect pneumonia, tuberculosis, pneumothorax, and other pathologies from plain X-rays. Clinical performance is strong: 94–97% sensitivity (catching disease when present), 92–96% specificity (avoiding false alarms).

CT and MRI Analysis: More complex than X-rays. AI segments organs, detects lesions, measures tumor size. Applications: lung nodule detection, brain hemorrhage, liver cirrhosis staging. Performance is often better than junior radiologists, comparable to experienced ones.

Pathology and Microscopy: AI analyzes histology slides (tissue samples). It grades cancers, identifies tumor margins, and automates slide screening. It can screen up to 100x more slides than manual review, which sharply reduces pathologist workload.

Mammography and Breast Imaging: Breast cancer screening is high-volume and high-stakes. AI flags suspicious regions, assists radiologist review. Studies show AI + radiologist outperforms radiologist alone: 94% sensitivity vs 88% for radiologist-only.

Diagnostic Accuracy and Clinical Impact

When AI medical imaging systems are properly validated, reported performance typically falls in these ranges:

- Sensitivity (true positive rate): 92–98% depending on modality and pathology

- Specificity (true negative rate): 90–96%

- Turnaround time: 24–48 hours vs. 5–10 days for overbooked radiologists

- Throughput: One AI system can read 10–20x more images than one radiologist
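To make the accuracy metrics above concrete, here is a minimal sketch of how sensitivity and specificity fall out of a validation set. The counts are hypothetical (1,000 studies, 100 with disease) and the helper names are illustrative, not taken from any specific product.

```python
from dataclasses import dataclass


@dataclass
class ConfusionCounts:
    """Counts from comparing AI reads against a reference standard."""
    true_pos: int
    false_neg: int
    true_neg: int
    false_pos: int


def sensitivity(c: ConfusionCounts) -> float:
    """Share of diseased studies the AI flagged (true positive rate)."""
    return c.true_pos / (c.true_pos + c.false_neg)


def specificity(c: ConfusionCounts) -> float:
    """Share of healthy studies the AI cleared (true negative rate)."""
    return c.true_neg / (c.true_neg + c.false_pos)


def positive_predictive_value(c: ConfusionCounts) -> float:
    """Share of AI flags that were real findings; depends on prevalence."""
    return c.true_pos / (c.true_pos + c.false_pos)


# Hypothetical validation set: 1,000 studies, 100 with disease.
counts = ConfusionCounts(true_pos=95, false_neg=5, true_neg=855, false_pos=45)
print(f"sensitivity: {sensitivity(counts):.2%}")
print(f"specificity: {specificity(counts):.2%}")
print(f"PPV:         {positive_predictive_value(counts):.2%}")
```

In this made-up example both sensitivity and specificity are 95%, yet only about 68% of AI flags are true findings because disease is present in just 10% of studies. That gap is one reason flagged studies still need radiologist review.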

Real-world impact: A mid-size hospital with 200,000 imaging studies/year, 30-day backlogs, and radiologist shortage deploys AI triage. System flags high-urgency findings (stroke, hemorrhage) for immediate radiologist review. Routine studies are batched for efficient interpretation. Result: backlogs drop to 5 days, urgent cases get same-day read.
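The triage pattern in this scenario can be sketched as a priority queue that moves AI-flagged urgent studies to the front of the reading list. This is a simplified illustration: the urgency ranks, study IDs, and the `TriageWorklist` class are assumptions, not a description of any particular PACS or vendor system.

```python
import heapq
from itertools import count

# Hypothetical urgency ranks: lower number = read sooner.
URGENCY_RANK = {"stroke": 0, "hemorrhage": 0, "pneumothorax": 1, "routine": 5}


class TriageWorklist:
    """Priority queue that surfaces AI-flagged urgent studies first."""

    def __init__(self) -> None:
        self._heap: list[tuple[int, int, str]] = []
        self._arrival = count()  # tie-breaker keeps first-in, first-out order

    def add(self, study_id: str, ai_finding: str) -> None:
        # Unknown findings fall back to the routine rank.
        rank = URGENCY_RANK.get(ai_finding, URGENCY_RANK["routine"])
        heapq.heappush(self._heap, (rank, next(self._arrival), study_id))

    def next_study(self) -> str:
        """Return the most urgent, longest-waiting study."""
        return heapq.heappop(self._heap)[2]

    def __len__(self) -> int:
        return len(self._heap)


worklist = TriageWorklist()
worklist.add("CT-1001", "routine")
worklist.add("CT-1002", "hemorrhage")
worklist.add("XR-1003", "pneumothorax")
worklist.add("CT-1004", "stroke")

reading_order = [worklist.next_study() for _ in range(len(worklist))]
print(reading_order)  # ['CT-1002', 'CT-1004', 'XR-1003', 'CT-1001']
```

The AI only reorders the queue here. Every study still reaches a radiologist, which keeps the human reviewer in the loop.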

Implementation Challenges

Regulatory Approval: In the EU, diagnostic imaging AI is a medical device under the MDR (Medical Device Regulation), typically Class IIa, IIb, or III depending on risk. It requires CE marking, and clinical validation studies are mandatory. The process typically takes 6–12 months, and longer for Class III.

Data Privacy: Training AI on medical images requires thousands of annotated images. GDPR requires a valid legal basis for that use (explicit consent is the most common route, though not the only one), proper de-identification, and secure data handling.

Clinical Integration: AI doesn't replace radiologists; it augments them. Workflow must ensure AI recommendations are reviewed, not blindly accepted. Liability and accountability must be clear.

Generalization Risk: An AI trained on CT scanners from Siemens may not work on GE or Philips scanners. Training on images from one hospital may not transfer to another with different patient populations. Continuous validation is required.
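One way to watch for this kind of generalization gap is to break validation results down by scanner vendor or site rather than reporting a single pooled number. The sketch below uses synthetic records and a hypothetical 90% acceptance threshold; the function names are illustrative.

```python
from collections import defaultdict


def sensitivity_by_group(records, group_key):
    """Sensitivity per group; records need group_key, 'has_disease', 'ai_flagged'."""
    flagged = defaultdict(int)
    diseased = defaultdict(int)
    for record in records:
        if record["has_disease"]:
            diseased[record[group_key]] += 1
            if record["ai_flagged"]:
                flagged[record[group_key]] += 1
    return {group: flagged[group] / diseased[group] for group in diseased}


def groups_below(per_group, minimum):
    """Groups whose sensitivity falls under the acceptance threshold."""
    return sorted(g for g, value in per_group.items() if value < minimum)


# Synthetic validation records, not real study data.
records = (
    [{"vendor": "vendor_a", "has_disease": True, "ai_flagged": i < 19} for i in range(20)]
    + [{"vendor": "vendor_b", "has_disease": True, "ai_flagged": i < 15} for i in range(20)]
)

per_vendor = sensitivity_by_group(records, "vendor")
print(per_vendor)
print("needs revalidation:", groups_below(per_vendor, minimum=0.90))
```

The same breakdown works for any grouping column, such as hospital site or patient demographic.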

2. Operational AI: Efficiency and Cost Management

Beyond diagnostics, AI optimizes healthcare operations—the unglamorous but high-ROI domain.

Staff Scheduling and Resource Optimization

Hospitals must balance staff levels against patient volume, acuity, and regulations (minimum nurse-to-patient ratios). Manual scheduling is slow, error-prone, and often violates union agreements.

AI scheduling systems forecast patient volume by hour, predict admission/discharge, recommend staffing. Results:

- 10–15% reduction in overtime

- 5–8% improvement in nurse satisfaction (fewer last-minute changes)

- 3–5% improvement in patient satisfaction (fewer long wait times)
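The staffing recommendation step can be illustrated with simple arithmetic: given an hourly patient forecast and a minimum nurse-to-patient ratio, compute the nurses required and compare against the roster. The forecast numbers and the 1:4 ratio below are hypothetical, and a production system would take its forecast from a trained model rather than a hard-coded list.

```python
import math


def nurses_required(forecast_patients, max_patients_per_nurse):
    """Minimum nurses per hour needed to respect the ratio."""
    return [math.ceil(p / max_patients_per_nurse) for p in forecast_patients]


def staffing_shortfalls(required, scheduled):
    """Map hour index -> missing nurses, only for hours that are short."""
    return {
        hour: need - have
        for hour, (need, have) in enumerate(zip(required, scheduled))
        if need > have
    }


forecast = [18, 22, 31, 27]   # hypothetical forecast census for four hours
scheduled = [6, 6, 6, 6]      # nurses currently on the roster
required = nurses_required(forecast, max_patients_per_nurse=4)
print(required)                                  # [5, 6, 8, 7]
print(staffing_shortfalls(required, scheduled))  # {2: 2, 3: 1}
```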

Operating Room (OR) Allocation

Surgical schedules are complex: surgeon availability, patient cases, room preparation, anesthesia teams. Manual scheduling leaves ORs dark (unused) 20–30% of the time.

AI OR scheduling predicts case duration, sequences surgeries to minimize gaps, pre-stages equipment. Results:

- 15–20% increase in OR utilization

- 10% reduction in patient wait times for surgery

- $500K–$2M annual savings for a 10-OR facility
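As a rough illustration of sequencing cases to minimize idle room time, the sketch below assigns cases to rooms with a longest-case-first greedy heuristic. This is a textbook load-balancing technique chosen for brevity, not the method any specific OR scheduling product uses, and the predicted durations are made up.

```python
import heapq


def assign_cases(case_minutes, n_rooms):
    """Longest case first: give each case to the least-booked room."""
    rooms = [(0, room) for room in range(n_rooms)]  # (minutes booked, room index)
    heapq.heapify(rooms)
    schedule = {room: [] for room in range(n_rooms)}
    for case, minutes in sorted(case_minutes.items(), key=lambda kv: -kv[1]):
        booked, room = heapq.heappop(rooms)
        schedule[room].append(case)
        heapq.heappush(rooms, (booked + minutes, room))
    return schedule


def room_load(schedule, case_minutes):
    """Total booked minutes per room."""
    return {room: sum(case_minutes[c] for c in cases) for room, cases in schedule.items()}


# Hypothetical predicted durations in minutes.
predicted = {
    "hip_replacement": 120,
    "gallbladder": 90,
    "hernia_repair": 75,
    "appendectomy": 60,
    "knee_arthroscopy": 45,
    "cataract": 30,
}

schedule = assign_cases(predicted, n_rooms=2)
print(schedule)
print(room_load(schedule, predicted))  # {0: 210, 1: 210}
```

A real scheduler also has to respect surgeon availability, equipment, turnover time, and anesthesia teams, which this sketch ignores.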

Bed Management

Bed management is a balancing act: empty beds waste capacity, while full wards cause overcrowding and delayed admissions. AI predicts patient discharge timing, forecasts admissions, and optimizes bed assignments.

Results:

- 8–12% reduction in readmissions (fewer premature discharges, which often lead to a quick return)

- 10–15% reduction in bed shortages

- Better ICU/step-down placement (high-risk patients to monitored beds)
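A simple way to picture the bed forecast: if a model outputs a discharge probability for each current patient, the expected number of free beds is total capacity minus expected occupancy. The probabilities and admission forecast below are invented for illustration, and `expected_free_beds` is a hypothetical helper.

```python
def expected_free_beds(total_beds, discharge_probs, expected_admissions):
    """Expected free beds for the next period.

    discharge_probs holds one predicted discharge probability per
    currently occupied bed.
    """
    occupied = len(discharge_probs)
    expected_discharges = sum(discharge_probs)
    expected_occupied = occupied - expected_discharges + expected_admissions
    return total_beds - expected_occupied


# Hypothetical 10-bed unit with 8 current patients.
probs = [0.9, 0.8, 0.1, 0.2, 0.5, 0.5, 0.0, 1.0]
free = expected_free_beds(total_beds=10, discharge_probs=probs, expected_admissions=3.5)
print(f"expected free beds: {free:.1f}")  # expected free beds: 2.5
```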

3. Clinical and Patient Care: Personalization and Continuous Monitoring

Remote Patient Monitoring (RPM)

Chronic disease patients (diabetes, heart failure, COPD) benefit from continuous monitoring. Historically, monitoring required in-person visits. AI + wearables enable remote monitoring.

Diabetes Management: Continuous glucose monitors (CGMs) feed glucose data to AI systems. AI predicts hypoglycemic events 15–20 minutes ahead, alerts patient to take action. Reduces severe hypoglycemia by 30%.

Heart Failure: Wearables track weight, activity, resting heart rate. AI detects deterioration patterns (sudden weight gain + elevated heart rate = decompensation) and alerts clinicians. Reduces hospitalizations by 20–25%.

Post-Surgical Monitoring: Surgical patients wear patches that transmit vitals. AI detects infection, sepsis, or bleeding complications early. Reduces post-op complications by 15%.
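The heart failure pattern described above (sudden weight gain plus elevated heart rate) can be expressed as a simple rule. The thresholds here are placeholders for illustration only, not clinical guidance, and a deployed system would use a validated model rather than fixed cutoffs.

```python
from dataclasses import dataclass


@dataclass
class DailyReading:
    weight_kg: float
    resting_hr: int


def decompensation_alert(readings, weight_gain_kg=2.0, hr_rise_bpm=10, window_days=3):
    """Flag when weight and resting heart rate both rise over the window.

    Thresholds are illustrative defaults, not clinically validated values.
    """
    if len(readings) <= window_days:
        return False  # not enough history to compare against
    baseline, latest = readings[-window_days - 1], readings[-1]
    weight_gain = latest.weight_kg - baseline.weight_kg
    hr_rise = latest.resting_hr - baseline.resting_hr
    return weight_gain >= weight_gain_kg and hr_rise >= hr_rise_bpm


stable = [DailyReading(80.0, 62), DailyReading(80.1, 63),
          DailyReading(80.0, 62), DailyReading(80.2, 64)]
worsening = [DailyReading(80.0, 62), DailyReading(80.8, 66),
             DailyReading(81.6, 70), DailyReading(82.3, 74)]

print(decompensation_alert(stable))     # False
print(decompensation_alert(worsening))  # True
```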

Personalized Treatment Planning

One-size-fits-all medicine is obsolete. AI matches patient characteristics (genetics, comorbidities, biomarkers) to treatment options, predicting response likelihood.

Cancer Treatment: AI analyzes tumor genetics, matches to therapies. Patients with specific mutations get targeted drugs vs. broad chemotherapy. Improves response rates (70% vs 40%), reduces side effects.

Diabetes Management: Baseline HbA1c, comorbidities, prior medication responses feed AI recommendation engine. Suggests diabetes drug (metformin, GLP-1, insulin) likely to achieve glycemic control with fewest side effects. Improves treatment adherence by 20%.

Medication Optimization: Elderly patients on 8+ medications face drug-drug interactions. AI checks compatibility, suggests dosage adjustments, predicts adverse events. Reduces ER visits by 10–15%.
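The compatibility check in medication optimization boils down to testing every pair of drugs on a patient's list against an interaction database. The sketch below uses a two-entry lookup table purely as a stand-in; a real system would query a maintained, clinically validated interaction source.

```python
from itertools import combinations

# Illustrative entries only; not a clinical reference.
KNOWN_INTERACTIONS = {
    frozenset({"warfarin", "aspirin"}): "increased bleeding risk",
    frozenset({"lisinopril", "spironolactone"}): "risk of high potassium",
}


def find_interactions(medications):
    """Return (drug_a, drug_b, note) for every known interacting pair."""
    found = []
    for a, b in combinations(sorted(set(medications)), 2):
        note = KNOWN_INTERACTIONS.get(frozenset({a, b}))
        if note:
            found.append((a, b, note))
    return found


med_list = ["warfarin", "metformin", "aspirin", "lisinopril"]
print(find_interactions(med_list))
# [('aspirin', 'warfarin', 'increased bleeding risk')]
```

With 8 medications there are 28 pairs to check, which is why automated screening helps for patients on long medication lists.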

4. Administrative AI: Revenue Cycle and Compliance

Clinical Documentation and Coding

Physicians document patient encounters in unstructured notes. Coders then extract diagnoses, procedures, and assign billing codes (ICD-10, CPT). This is labor-intensive and error-prone.

AI clinical documentation tools:

- Scribe AI: Listens to doctor-patient conversation, transcribes visit, flags missing elements (allergy update, medication reconciliation).

- Coding AI: Extracts diagnoses/procedures from notes, suggests billing codes, checks compliance with insurance requirements.

Results:

- 20–30% reduction in coding time

- 5–10% improvement in coding accuracy

- 8–15% increase in captured billing (missed codes identified)

Insurance Claims and Prior Authorization

Physicians spend 15+ hours weekly on prior authorization (PA)—seeking insurance approval for treatments.

AI PA systems:

- Parse treatment plan, extract diagnoses, medications

- Check insurance formulary and coverage rules

- Submit PA request automatically with clinical justification

- Track approval status, escalate denials

Results:

- 50–60% reduction in PA time

- 10–15% faster approvals

- 5–8% reduction in denials on first submission

Fraud Detection

Healthcare fraud costs $100B+ annually in the US alone. Billing fraud, phantom billing, unnecessary tests, and kickbacks are common.

AI fraud detection:

- Flags billing patterns that deviate from peers (e.g., "Cardiologist A always orders echo; Cardiologist B never does")

- Identifies billing spikes relative to patient population

- Detects overbilling vs. reference baseline
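One common way to detect overbilling against a peer baseline is a robust outlier score. The sketch below uses the modified z-score (median and median absolute deviation) with the conventional 3.5 cutoff. The provider names and order rates are invented, and this is one possible technique, not a description of any specific fraud product.

```python
from statistics import median


def flag_billing_outliers(rate_by_provider, threshold=3.5):
    """Flag providers whose rate is far above the peer median.

    Uses the modified z-score: 0.6745 * (x - median) / MAD.
    """
    values = list(rate_by_provider.values())
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        return []  # no spread among peers, nothing to compare against
    return [
        provider
        for provider, value in rate_by_provider.items()
        if 0.6745 * (value - med) / mad > threshold
    ]


# Hypothetical echocardiogram orders per 100 patients, by cardiologist.
echo_rates = {"A": 12, "B": 14, "C": 11, "D": 13, "E": 48, "F": 12}
print(flag_billing_outliers(echo_rates))  # ['E']
```

A flag is a prompt for a human audit, not proof of fraud. A provider with an unusual patient mix can legitimately sit far from the median.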

Results:

- 2–5% reduction in fraud detection cost (vs. manual audits)

- $500K–$5M annual recovery for large health systems

Real-World ROI: The Numbers

Here is the financial impact for a mid-sized regional health system (300 beds, 4 hospitals, $1B annual revenue) implementing AI across domains:

| Use Case | Investment | Annual Benefit | Payback |
|---|---|---|---|
| Imaging AI (CT/X-ray) | $300K | $2M (radiologist productivity) | 2 months |
| OR Scheduling | $150K | $1.5M (utilization gain) | 1 month |
| Remote Monitoring | $500K | $3M (avoided readmissions) | 2 months |
| Documentation/Coding | $200K | $2M (captured revenue) | 1 month |
| Fraud Detection | $250K | $1.5M (fraud recovery) | 2 months |
| Total | $1.4M | $10M | 1.7 months |
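The payback column follows from dividing the investment by the annual benefit and converting to months. A short sketch of that arithmetic, using the figures from the table (the function name is illustrative):

```python
def payback_months(investment, annual_benefit):
    """Months of benefit needed to recover the upfront investment."""
    return investment / annual_benefit * 12


# (investment, annual benefit) in USD, taken from the table above.
USE_CASES = {
    "Imaging AI (CT/X-ray)": (300_000, 2_000_000),
    "OR Scheduling": (150_000, 1_500_000),
    "Remote Monitoring": (500_000, 3_000_000),
    "Documentation/Coding": (200_000, 2_000_000),
    "Fraud Detection": (250_000, 1_500_000),
}

for name, (investment, benefit) in USE_CASES.items():
    print(f"{name:<24} {payback_months(investment, benefit):.1f} months")

total_investment = sum(i for i, _ in USE_CASES.values())
total_benefit = sum(b for _, b in USE_CASES.values())
total_payback = payback_months(total_investment, total_benefit)
print(f"{'Total':<24} {total_payback:.1f} months")
```

This simple calculation treats the investment as a one-time cost. Ongoing expenses such as licensing, maintenance, and staff time are not broken out in the table and would lengthen the real payback period.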

Not all health systems see equal ROI. Early adopters, organizations with mature data infrastructure, and those with acute pain points (imaging backlogs, staffing crises) see faster payback.

Regulatory Considerations: EU MDR, GDPR, and AI Act

Healthcare AI in the EU faces a complex regulatory landscape:

EU Medical Device Regulation (MDR)

AI systems that assist in diagnosis or treatment are classified as medical devices. They must pass a conformity assessment and carry a CE mark before clinical use.

Classification:

- Class I (low risk): Software with a medical purpose that does not inform diagnostic or treatment decisions. The manufacturer self-declares conformity. General wellness apps and basic fitness trackers with no medical purpose usually fall outside the MDR altogether.

- Class IIa/IIb (moderate risk): Diagnostic AI (imaging analysis, lab test interpretation). Requires CE mark, clinical evidence, quality system, and Notified Body assessment.

- Class III (high risk): Life-sustaining or life-critical AI (surgical guidance, critical care decision support). Requires the most rigorous Notified Body review.

CE Marking Process:

- Clinical validation: 100–500 patient study demonstrating safety/effectiveness

- Quality management system: ISO 13485 certification

- Documentation: Technical documentation covering design, risk assessment, and traceability

- Notified Body review (Class IIa and above): Third-party assessment

- Timeline: 6–12 months for Class IIa/IIb; 12–24 months for Class III

Key requirement: Hospitals can't just use any AI model. AI that qualifies as a medical device must carry a CE mark. Using non-compliant AI exposes hospitals to liability and regulatory penalties.

GDPR and Patient Privacy

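Medical data is special category personal data (Article 9, GDPR). Processing is prohibited unless one of the Article 9(2) conditions applies, such as explicit consent, provision of healthcare, or scientific research with appropriate safeguards.

Requirements:

- A valid legal basis for using patient data in AI training/validation (explicit patient consent is the most common route)

- Data minimization: Use only necessary data

- Purpose limitation: Can't reuse diagnostic data for unrelated research without a compatible purpose or a new legal basis such as fresh consent

- Data subject rights: Patients can request deletion or data portability

- Processor agreements: AI vendors that process patient data on your behalf must sign a data processing agreement (DPA)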

Best practice: Anonymize training data where possible. GDPR allows processing of truly anonymized data without consent. But anonymization is hard in healthcare; re-identification is often possible with genetic/medical data.
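A common first step toward de-identification is to drop direct identifiers and replace the patient ID with a keyed hash. Note that this is pseudonymization, not anonymization: the output still counts as personal data under GDPR because the key holder can re-link it. The field names and helper functions below are assumptions for illustration.

```python
import hashlib
import hmac


def pseudonymize_id(patient_id: str, secret_key: bytes) -> str:
    """Replace a patient ID with a keyed SHA-256 hash (HMAC)."""
    digest = hmac.new(secret_key, patient_id.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()


def strip_direct_identifiers(record, secret_key, drop_fields=("name", "address", "phone")):
    """Drop direct identifiers and pseudonymize the patient ID.

    drop_fields is a hypothetical list; adapt it to your own schema.
    """
    cleaned = {k: v for k, v in record.items() if k not in drop_fields}
    cleaned["patient_id"] = pseudonymize_id(record["patient_id"], secret_key)
    return cleaned


# In practice the key would come from a secrets manager, never from source code.
demo_key = b"demo-key-for-illustration-only"
record = {"patient_id": "MRN-001", "name": "Jane Doe", "phone": "555-0100", "diagnosis": "J18.9"}
print(strip_direct_identifiers(record, demo_key))
```

The keyed hash keeps the same patient linkable across records without exposing the original ID. Quasi-identifiers such as rare diagnoses, dates, or genetic data can still allow re-identification, which is why true anonymization is hard in healthcare.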

EU AI Act

The EU AI Act classifies AI into risk tiers:

- Prohibited: Manipulating human behavior, social scoring

- High-Risk: Includes AI that is a medical device or a safety component of one (diagnosis, prognosis, surgical guidance). Requires human oversight, documentation, bias monitoring

- Limited-Risk: Chatbots, AI-generated content. Transparency required

- Minimal-Risk: Most applications. Minimal requirements

For healthcare AI, assuming high-risk classification, requirements include:

- Human-in-the-loop review (AI recommendations reviewed by physician)

- Explainability (ability to explain predictions)

- Bias monitoring and mitigation

- Post-market surveillance (monitoring real-world performance)

- Documentation and audit trails
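Human-in-the-loop review and audit trails can be combined in a single record: each AI recommendation is logged together with the clinician's decision and the model version. The field names and the three allowed decisions below are assumptions for illustration, not wording from the regulation.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone

ALLOWED_DECISIONS = {"accepted", "modified", "rejected"}


@dataclass(frozen=True)
class ReviewRecord:
    study_id: str
    model_version: str
    ai_finding: str
    ai_confidence: float
    reviewer_id: str
    reviewer_decision: str
    reviewed_at: str  # UTC timestamp, ISO 8601


def record_review(study_id, model_version, ai_finding, ai_confidence,
                  reviewer_id, reviewer_decision):
    """Build one audit log line as JSON; reject entries with no valid decision."""
    if reviewer_decision not in ALLOWED_DECISIONS:
        raise ValueError(f"unknown decision: {reviewer_decision!r}")
    record = ReviewRecord(
        study_id=study_id,
        model_version=model_version,
        ai_finding=ai_finding,
        ai_confidence=ai_confidence,
        reviewer_id=reviewer_id,
        reviewer_decision=reviewer_decision,
        reviewed_at=datetime.now(timezone.utc).isoformat(),
    )
    return json.dumps(asdict(record), sort_keys=True)


print(record_review("CT-1002", "v1.3.0", "hemorrhage", 0.97, "dr-4821", "accepted"))
```

Logging the model version alongside each decision makes it possible to trace a disputed finding back to the exact model that produced it, and the accepted/modified/rejected counts feed post-market surveillance.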

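Timeline: The EU AI Act entered into force in August 2024. Prohibitions have applied since February 2025. Most high-risk obligations apply from August 2026, and obligations for AI embedded in products already regulated under laws such as the MDR apply from August 2027.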

Implementation Roadmap for Health Organizations

Phase 1: Assessment (Months 1–2)

- Identify pain points: Where does AI create most value? (Imaging backlogs, staffing shortages, billing fraud?)

- Data audit: What data do you have? Is it clean, accessible, and compliant?

- Regulatory assessment: Will AI be a medical device? What approval path?

- Team alignment: Who owns AI decision-making? Clinical, IT, compliance?

Phase 2: Pilot (Months 3–6)

- Select use case: Start with one high-impact, lower-complexity domain (e.g., X-ray triage, OR scheduling).

- Proof of concept: Deploy with 100–200 cases or one OR schedule.

- Clinical validation: Measure accuracy, safety, workflow impact.

- Regulatory pathway: Start CE mark process if medical device.

Phase 3: Production (Months 7–12)

- Scale to full deployment: Roll out across all relevant sites.

- Workflow integration: Embed AI into clinical/operational workflows.

- Compliance: Complete MDR/GDPR requirements.

- Training: Educate staff on AI use, interpretation, limitations.

Phase 4: Continuous Improvement (Year 2+)

- Monitor performance: Track accuracy, clinical outcomes, user satisfaction.

- Retrain models: Retrain on new data every 6–12 months.

- Expand use cases: Deploy AI to adjacent domains based on Phase 1 priorities.

- Governance: Establish AI governance board, review ethics/bias quarterly.

Pitfalls to Avoid

Pitfall 1: Deploying AI Without Clinical Validation

Building an ML model on historical data and deploying to live patients without prospective validation is dangerous. Model accuracy in retrospective analysis doesn't guarantee real-world safety.

Fix: Conduct clinical validation studies. Start with observational study (AI recommends, clinician confirms), then randomized trial if evidence warrants.

Pitfall 2: Ignoring Data Quality

AI is only as good as training data. Biased, incomplete, or mislabeled training data produces biased models.

Fix: Data audit first. Clean and label data rigorously. Identify and correct imbalances (e.g., if training data is 90% male, the model is likely to underperform for female patients).
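A representation audit like the one described in this fix can start as a simple count of each group's share of the training set. The 30% floor below is an arbitrary placeholder; the right threshold depends on the target patient population.

```python
from collections import Counter


def underrepresented_groups(labels, min_share=0.30):
    """Return {group: share} for groups below the minimum share of the data."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {
        group: n / total
        for group, n in counts.items()
        if n / total < min_share
    }


# Mirrors the 90% male example above, using synthetic labels.
sex_labels = ["male"] * 90 + ["female"] * 10
print(underrepresented_groups(sex_labels))  # {'female': 0.1}
```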

Pitfall 3: Black Box Without Explainability

Clinicians won't trust AI they can't interpret. "The algorithm recommends surgery" without explaining why creates liability and resistance.

Fix: Prioritize interpretable models or use explainability tools (SHAP). Document reasoning: "High risk based on [feature 1], [feature 2]."
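For an interpretable model, the "High risk based on [feature 1], [feature 2]" style of explanation can be produced directly. The sketch below uses a logistic regression with made-up coefficients and ranks features by their contribution (coefficient times value). This is a simple stand-in for linear models, not the SHAP library itself.

```python
import math

# Hypothetical coefficients for a readmission risk model.
COEFFICIENTS = {
    "age_over_75": 0.8,
    "prior_admissions": 0.6,
    "hba1c_above_9": 0.5,
    "lives_alone": 0.2,
}
INTERCEPT = -2.0


def risk_with_reasons(features, top_n=2):
    """Return (risk probability, top contributing feature names)."""
    contributions = {
        name: COEFFICIENTS[name] * value for name, value in features.items()
    }
    logit = INTERCEPT + sum(contributions.values())
    risk = 1 / (1 + math.exp(-logit))
    top = sorted(contributions, key=contributions.get, reverse=True)[:top_n]
    return risk, top


patient = {"age_over_75": 1, "prior_admissions": 3, "hba1c_above_9": 0, "lives_alone": 1}
risk, reasons = risk_with_reasons(patient)
print(f"Risk {risk:.0%} based on {', '.join(reasons)}")
```

For non-linear models, a dedicated explainability tool plays the same role of attributing a prediction to individual features.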

Pitfall 4: Deploying Without Governance

Who owns the AI model? Who monitors performance? What's the escalation process if AI fails? Unclear ownership causes problems.

Fix: Establish AI governance board with clinical, IT, compliance, and legal representation. Document decision processes. Review quarterly.

Pitfall 5: Neglecting Change Management

Clinicians trained for 20 years are skeptical of AI. Forcing adoption without education causes resistance, workarounds, and failure.

Fix: Involve clinicians early. Show evidence. Train comprehensively. Collect feedback and iterate. Build trust.

Frequently Asked Questions

Q: Is AI in healthcare really safe?

A: When properly validated, designed, and deployed, yes. On some narrow tasks, validated AI systems have matched or outperformed clinicians. But deploying unvalidated AI is dangerous, so clinical validation is non-negotiable.

Q: Will AI replace radiologists?

A: No. AI augments radiologists by handling high-volume routine reads, which lets radiologists focus on complex cases and patient interaction. The radiologist role shifts to senior reviewer and decision-maker.

Q: How long does regulatory approval take?

A: 6–12 months for Class IIa/IIb medical device AI (the typical diagnostic use case). 12–24 months for Class III. The bottleneck is clinical validation, not paperwork.

Q: Can we use commercial AI models (like GPT) for healthcare?

A: With caution. General-purpose commercial models carry privacy risks (patient data entered into prompts may leave your control), and they lack clinical validation and medical device approval. Limit them to non-critical tasks (admin, answering FAQs). For clinical decisions, use validated, regulated medical AI.

Q: How do we ensure AI is unbiased?

A: No AI is perfectly unbiased. But you can minimize bias: (1) audit training data for representation; (2) measure performance across demographic groups; (3) monitor real-world performance for bias drift; (4) retrain if bias is detected. Document all steps.

Q: Is AI expensive to implement?

A: Upfront costs are $100K–$500K per use case (development, validation, approval). But payback is fast: 1–3 months for high-impact applications. Over the long term, automating high-volume routine work typically costs less than adding headcount to absorb it.

Q: What about GDPR when training AI on patient data?

A: You need a valid legal basis under GDPR; explicit patient consent is the most common route. Anonymization is ideal but hard in healthcare. Sign a DPA with AI vendors. Limit data retention. Honor patients' right to deletion.

Start Your Healthcare AI Journey

AI is transforming healthcare—improving diagnoses, saving costs, reducing clinician burden, and ultimately improving patient outcomes. But success requires clinical rigor, regulatory compliance, and organizational commitment.

If your healthcare organization is ready to move beyond pilots and deploy AI at scale—whether diagnostic imaging, operational optimization, or patient care—the time to start is now.

Digital Colliers specializes in healthcare AI implementation, from regulatory strategy through production deployment. We have deep expertise in EU MDR, GDPR, and AI Act compliance. We've helped hospitals validate, approve, and scale AI across imaging, operations, and clinical workflows.

Contact us to discuss your healthcare AI strategy, data readiness, and regulatory pathway. Let's explore where AI creates the most impact in your organization.

Schedule a discovery call today.