AI Proof of Concept: How to Validate AI Ideas Before Full Investment

Most AI projects fail not because the technology doesn't work, but because teams build the wrong solution and discover it too late. By then, they've spent €150K, burned six months, and alienated the internal stakeholders who were counting on them.

There's a better way: the AI Proof of Concept (PoC).

An AI PoC is a small, controlled experiment—typically 4–6 weeks, €20K–€40K, with a 2–4 person team—that answers one critical question: does this AI idea actually solve our problem? Before you commit to a full custom development project, you de-risk the idea. You test it with real data. You measure it against real success criteria. You decide: build, pivot, or kill.

This guide is for leadership and decision-makers at European B2B companies who are considering an AI initiative but want to avoid expensive failures. If you're asking "Should we invest in AI?" or "Will this actually work for us?"—this is your roadmap.

What Is an AI Proof of Concept?

An AI PoC is not a pilot. A pilot tests a solution you've already built. A PoC answers whether a solution is worth building.

An AI PoC typically includes:

- A clearly defined business problem and success metrics

- A data audit (do we have the data we need?)

- A feasibility assessment (is this technically doable?)

- A minimum viable prototype (can we build a working model in 4–6 weeks?)

- A go/no-go decision with clear criteria

- A roadmap for what's next (if the answer is yes)

The PoC answers five core questions before you commit a big budget:

- Is the business problem real and measurable?

- Do we have the data to solve it?

- Is the technical approach realistic?

- Can we build a working prototype in 4–6 weeks?

- Does the prototype actually improve on our current approach?

If you answer "yes" to all five, you move to full development. If any answer is "no," you pivot or kill the idea before wasting serious money.

Learn more about our broader AI consulting strategy and approach before diving into a PoC.

The AI PoC Process: Phase by Phase

Here's the four-phase process we use with our clients at Digital Colliers:

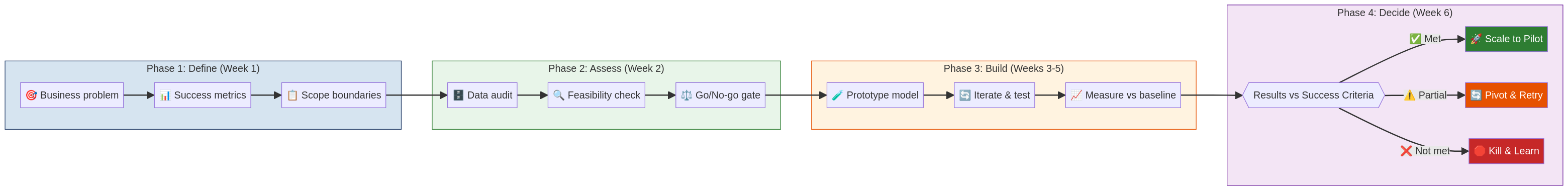

The 4-phase PoC framework: Define your problem and metrics, Assess feasibility and data quality, Build and test a prototype, then Decide whether to scale, pivot, or kill the idea.

Phase 1: Define (Week 1)

The first week is about alignment, not coding. Your team, stakeholders, and the PoC partner agree on three things:

- The Business Problem — What is the actual pain point? Not "we want AI," but "we're manually processing 500 invoices per month, which costs €40K per year and creates a 5-day backlog." Be specific.

- Success Metrics — How will you know the PoC worked? Examples: "Process 80%+ of invoices automatically," "Reduce classification errors from 8% to <2%," "Complete a forecast 3 days earlier than the current process." Metrics should be measurable and tied to business value.

- Scope Boundaries — What's in and out? Example: "We're testing document classification only, not end-to-end workflow automation. We're using invoices from the last 2 years, not real-time data." Clear boundaries prevent scope creep.

This phase is short but critical. Misaligned PoCs die. Well-defined ones move forward.

Phase 2: Assess (Week 2)

Once you've defined the problem, you assess whether a solution is feasible.

- Data Audit — What data do you actually have? Is it structured or unstructured? How much of it is labeled (tagged with correct answers)? Is it fragmented across systems? A good audit takes 2–3 days and answers: "Do we have enough data to train an AI model?"

- Feasibility Check — Is this technically doable? A logistic regression model is feasible in weeks. A deep learning system that understands context might need months. Your partner estimates timeline and effort.

- Go/No-Go Gate — Based on the data audit and feasibility check, do you proceed? This is the moment to kill a bad idea before investing further.

Most PoCs pass the assessment phase because teams only propose ideas that are plausible. But occasionally, the data is too messy, or the scope is too broad, or the problem is better solved with a simple database query than machine learning. Killing a PoC at this gate saves months and money.
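A first pass at the data audit can be as simple as a few counts. The sketch below is a minimal illustration, assuming the records are available as a list of dictionaries; the `text` and `label` field names and the sample rows are hypothetical, not taken from any real system.

```python
from collections import Counter

def audit_records(records, label_field="label", required_fields=("text",)):
    """Summarize how much of a dataset is labeled and complete.

    `records` is a list of dicts; the field names are hypothetical.
    """
    total = len(records)
    # A record counts as labeled if its label field is present and non-empty.
    labeled = [r for r in records if r.get(label_field)]
    # A record counts as incomplete if any required field is missing or empty.
    incomplete = [
        r for r in records
        if any(not r.get(f) for f in required_fields)
    ]
    return {
        "total": total,
        "labeled_share": len(labeled) / total if total else 0.0,
        "incomplete_share": len(incomplete) / total if total else 0.0,
        "label_counts": Counter(r[label_field] for r in labeled),
    }

# Invented sample rows, for illustration only.
sample = [
    {"text": "Invoice 1001 ...", "label": "freight"},
    {"text": "Invoice 1002 ...", "label": "fuel"},
    {"text": "", "label": "freight"},
    {"text": "Invoice 1004 ...", "label": None},
]
report = audit_records(sample)
print(report)
```

A low labeled share or a high incomplete share is an early signal that data preparation, not modeling, may dominate the PoC.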

Phase 3: Build (Weeks 3–5)

If you pass the gate, you build. This phase moves quickly because scope is tight.

- Develop Prototype — Your team builds a working model or system. It doesn't need to be production-ready. It needs to demonstrate the concept.

- Test with Real Data — The prototype is tested against your actual data, not toy data. Does it work on your invoices? Your customer records? Your manufacturing images?

- Measure vs Baseline — How does the AI perform compared to what you're doing now? If you're currently processing invoices manually with 8% error rate, does the AI get it to 5%? 2%? If it can't beat your baseline, the PoC fails and you pivot.

Building typically takes 2–3 weeks. Testing and iteration take another 1–2 weeks and usually overlap with the build, which is how the phase fits into Weeks 3–5.
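The baseline comparison can be made concrete with a small calculation. This is a sketch under stated assumptions: both the current manual process and the prototype are scored against the same set of items with known correct labels, and the labels and the `min_improvement` parameter are invented for illustration.

```python
def error_rate(predictions, truth):
    """Share of items where the prediction differs from the correct label."""
    if len(predictions) != len(truth):
        raise ValueError("predictions and truth must have the same length")
    if not truth:
        raise ValueError("need at least one example")
    wrong = sum(p != t for p, t in zip(predictions, truth))
    return wrong / len(truth)

def beats_baseline(model_error, baseline_error, min_improvement=0.0):
    """True if the model reduces the error rate by more than min_improvement."""
    return (baseline_error - model_error) > min_improvement

# Hypothetical labels for ten invoices.
truth = ["a", "b", "a", "c", "b", "a", "c", "c", "b", "a"]
manual = ["a", "b", "b", "c", "b", "a", "c", "a", "b", "a"]  # 2 mistakes
model = ["a", "b", "a", "c", "b", "a", "c", "c", "b", "b"]   # 1 mistake

baseline_error = error_rate(manual, truth)
model_error = error_rate(model, truth)
print(baseline_error, model_error, beats_baseline(model_error, baseline_error))
```

In practice the test set should be real data the model has not seen during training; otherwise the comparison flatters the prototype.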

Phase 4: Decide (Week 6)

You have a working prototype. Now you decide:

- Results vs Success Criteria — Does the prototype meet the metrics you defined in Week 1? If success was "80% automation," and you achieved 78%, do you call that a win? This is a judgment call, but it should align with your predefined criteria.

- Scale to Pilot — If the PoC works, you expand it. A pilot is a larger, longer test with more data and more users. Timeline: 2–3 months. Cost: €50K–€150K. If the pilot works, you move to production.

- Pivot & Retry — Maybe the approach didn't work, but you learned something. You adjust and run another iteration. Example: "Classification didn't reach 80%, but we learned our invoice types are more complex than expected. Let's try a different model architecture." A pivot micro-iteration takes 2–3 weeks.

- Kill & Learn — If the PoC proved the idea isn't viable, you kill it. You've learned something valuable without a massive investment. Kill is not failure; it's intelligent risk management.
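One way to keep the Week 6 decision tied to the Week 1 criteria is to write the criteria down in a form that can be checked mechanically. The sketch below is illustrative only: the `Criterion` class, the metric names, and the result values are assumptions, and the code only reports which criteria were missed. Whether a near miss leads to a pivot or a kill remains a human judgment.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Criterion:
    """A success metric agreed in Week 1 (names and targets are hypothetical)."""
    name: str
    target: float
    higher_is_better: bool = True

    def met(self, value: float) -> bool:
        # Targets are treated as inclusive: reaching the target counts as met.
        if self.higher_is_better:
            return value >= self.target
        return value <= self.target

def evaluate(criteria, results):
    """Return (all_met, missed), where missed lists the names of unmet criteria."""
    missed = [c.name for c in criteria if not c.met(results[c.name])]
    return (not missed, missed)

criteria = [
    Criterion("automation_rate", 0.80),
    Criterion("classification_error", 0.02, higher_is_better=False),
]
# Invented prototype results: 78% automation, 1.5% classification error.
results = {"automation_rate": 0.78, "classification_error": 0.015}

all_met, missed = evaluate(criteria, results)
print("scale to pilot" if all_met else f"discuss pivot or kill; missed: {missed}")
```

Fixing the targets before the build makes it harder to redefine success after the results are in.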

PoC vs Pilot vs MVP: What's the Difference?

Teams often confuse these terms. Here's the distinction:

AI Proof of Concept: 4–6 weeks | €20K–€40K | Tests the idea. Does the concept work on our data? Is the approach feasible? Should we invest in full development?

AI Pilot: 8–12 weeks | €50K–€150K | Tests the scaled solution. We've proven the concept. Now we run it with larger data, more users, and real business processes. Does it work at scale?

Minimum Viable Product (MVP): 12–20 weeks | €100K–€300K | Production-ready solution. The system is live in production, supporting actual business users, with monitoring, security, and support.

Timeline: PoC → Pilot → MVP. Cost increases as risk decreases.

Many companies skip the PoC and jump straight to a pilot or MVP. This is expensive and risky. A €30K PoC that kills a bad idea before the €200K development phase is the best money you'll spend.

PoC Cost Breakdown

Team

- Project manager (part-time, 0.25 FTE): €3K–€6K

- Data scientist or ML engineer (0.75 FTE): €9K–€15K

- Software engineer (0.5 FTE): €5K–€10K

- Your internal stakeholder (20% of their time): Built into overhead

Infrastructure & Tools

- Cloud compute (AWS, GCP, Azure): €1K–€3K

- Licenses and software: €0.5K–€1.5K

- Data access and preparation: €1K–€2K

Total Typical PoC: €20K–€40K

This is labor-heavy, not capex-heavy. You're buying expertise to answer a question quickly, not building infrastructure.
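As a quick check on the breakdown, the line items can be summed directly. The figures below are copied from the lists above (in thousands of euros); the dictionary keys and the `total_range` helper are just illustrative names.

```python
# Low/high estimates in thousands of euros, copied from the breakdown above.
team = {
    "project_manager": (3, 6),
    "data_scientist_or_ml_engineer": (9, 15),
    "software_engineer": (5, 10),
}
infrastructure = {
    "cloud_compute": (1, 3),
    "licenses_and_software": (0.5, 1.5),
    "data_access_and_preparation": (1, 2),
}

def total_range(*groups):
    """Sum the low and high ends of every line item across the given groups."""
    low = sum(lo for g in groups for lo, _ in g.values())
    high = sum(hi for g in groups for _, hi in g.values())
    return low, high

low, high = total_range(team, infrastructure)
team_low, team_high = total_range(team)
print(f"Total: €{low}K–€{high}K")
print(f"Team share: {team_low / low:.0%} (low end), {team_high / high:.0%} (high end)")
```

The itemized lines add up to roughly €19.5K–€37.5K, close to the quoted €20K–€40K, and the team lines account for more than 80% of the total at either end of the range.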

Common PoC Mistakes and How to Avoid Them

Mistake 1: Starting Without Clear Success Metrics

A PoC without predefined success criteria is a fishing expedition. You'll build something, it will sort of work, and then you'll argue about whether it's successful. Define metrics before you code.

Mistake 2: Using Toy Data Instead of Real Data

The prototype works beautifully on sanitized data. Then you feed it real data: inconsistent formats, missing values, edge cases. It breaks. Always test on real data from week one.

Mistake 3: Not Involving Key Stakeholders

The PoC team should include the person who owns the problem (the operations manager, the CFO, the customer success lead). They need to be involved in scoping and decision-making. If they're surprised at the end, the PoC fails.

Mistake 4: Underestimating Data Preparation

Teams think the "AI" part is the long pole. Usually, it's the data: sourcing it, cleaning it, labeling it, and preparing it for training. Budget 30–40% of PoC time for data work.

Mistake 5: Treating the PoC as a Prototype for Production

A PoC is a proof. It proves the idea works. But PoC code is often dirty: shortcuts are taken and edge cases are ignored. If the PoC succeeds, you rebuild it properly for production.

Mistake 6: Hiding Bad Results

If the PoC doesn't meet success criteria, don't massage the data or redefine success. Accept the result. You've learned something. Pivot or kill, but be honest.

When a PoC Is the Wrong Approach

AI PoCs aren't always necessary. Sometimes you know you need AI because the problem is clear and the solution is proven.

Skip the PoC if:

- You're solving a standard use case (invoice classification, lead scoring, churn prediction) and you have clean, labeled data

- You're integrating a third-party AI API (e.g., document intelligence, translation, image analysis) where the vendor has already done the PoC

- Your problem is well-researched and similar solutions exist in your industry

- You have experienced in-house AI expertise and confidence in your approach

Run a PoC if:

- You have a novel problem unique to your business

- Your data is messy, fragmented, or unlabeled

- You're unsure about the technical approach

- Stakeholders are skeptical and need proof before committing budget

- The investment is large (> €200K) and you want to de-risk it

Most enterprise companies benefit from a PoC. The cost is small relative to the risk avoided.

PoC to Pilot: The Transition

If your PoC succeeds, here's how you transition to a pilot:

Week 1 (PoC End) — Results review and stakeholder alignment. Do we scale?

Weeks 2–3 — Scope the pilot. What changes from PoC to pilot? More data? More users? More integrations? A pilot is bigger, longer, and more realistic than a PoC.

Weeks 4–5 — Plan infrastructure, security, monitoring. A PoC can run on a laptop. A pilot needs real cloud infrastructure, error handling, and audit logs.

Weeks 6+ (Pilot Phase) — Build, test, measure. The team is larger. Involvement is deeper. Success criteria are more stringent.

Typically, the decision to move a successful PoC into a pilot comes within 1–2 weeks of the results review, and scoping and planning follow as outlined above. There's momentum. Stakeholders are convinced. Budget is approved.

Real-World PoC Example: Invoice Processing

Here's how a PoC played out for a mid-market European logistics company:

Week 1: Define

- Problem: 600 invoices per month, manually classified into 12 categories (type, vendor, cost center). Errors cause accounting delays and disputes.

- Success Metrics: 85%+ accuracy on classification, <2% manual override rate

- Scope: Historical invoices only, no real-time integration yet

Week 2: Assess

- Data: 8,000 historical invoices, labeled (good). Formats varied (PDF, email attachments, scanned images). Data was fragmented in three systems.

- Feasibility: "We can build a hybrid vision + NLP model. OCR for scanned docs, text extraction for digital files. Timeline: 3 weeks. Confidence: high."

- Gate: Go.

Weeks 3–5: Build

- Week 3: Set up OCR pipeline, test on 500 scanned invoices. Accuracy: 78%.

- Week 4: Build classification model. Tested on 2,000 invoices. Accuracy: 91% on digital files, 73% on scanned (OCR errors cascaded).

- Week 5: Fine-tune OCR, re-test scanned invoices. Final accuracy: 87% across all formats.

Week 6: Decide

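- Results: 87% overall accuracy against the 85% target, so the accuracy criterion was met. Accuracy was uneven by format (91% on digital files and 73% on scanned documents in the Week 4 test), so scanned documents remain the weaker case.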

- Decision: Scale to pilot. Plan: integrate with accounting system, test with 1,000 invoices over 8 weeks, add human-in-the-loop review for low-confidence predictions.

Outcome: Pilot launched. After 8 weeks, 89% accuracy in production. ROI: €120K annual savings (reduced manual processing). Project cost: €30K PoC + €120K pilot + €200K production implementation = €350K over 6 months.

Without the PoC, the company would have either skipped AI (leaving €120K annual savings on the table) or committed €350K upfront with no proof it would work. The PoC proved the idea, de-risked the investment, and gave stakeholders confidence.
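The figures in this example also allow a rough payback estimate. The sketch below uses only the numbers quoted above and makes simplifying assumptions that the example does not state: savings stay flat at €120K per year, and running costs and discounting are ignored.

```python
# Costs and savings in euros, taken from the example above.
poc, pilot, production = 30_000, 120_000, 200_000
annual_savings = 120_000

total_cost = poc + pilot + production

# Simple payback period: assumes flat savings, no running costs, no discounting.
payback_years = total_cost / annual_savings

# Share of the total spend that was at risk before the idea was proven.
poc_share = poc / total_cost

print(f"Total cost: €{total_cost:,}")
print(f"Simple payback: {payback_years:.1f} years")
print(f"PoC share of total cost: {poc_share:.1%}")
```

Under these assumptions the full investment pays back in about 2.9 years, and the PoC accounts for under a tenth of the total spend.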

Digital Colliers: Running Your First PoC

At Digital Colliers, we've run dozens of AI PoCs for European companies. We follow a strict process: define before you build, assess before you commit, measure before you scale.

We take ownership of speed and clarity. Your PoC doesn't stall. You get weekly check-ins, clear go/no-go decisions, and a written report with next steps.

If the PoC succeeds, we often transition to the pilot and production phases. You maintain ownership of the code and the model; we execute the roadmap.

See our AI consulting and implementation services to learn more about our approach.

FAQ

Q: How is a PoC different from a pilot?

A: A PoC (4–6 weeks, €20K–€40K) proves the idea works on your data. A pilot (8–12 weeks, €50K–€150K) scales the proven concept to realistic conditions. A PoC is a question. A pilot is a larger test.

Q: What if the PoC fails?

A: That's valuable learning. You've discovered the idea doesn't work (or needs a different approach) before spending 5x the budget. Failure at the PoC stage is actually success: smart risk management.

Q: How much data do we need for a PoC?

A: Depends on the problem. For a classification model, 500–1,000 labeled examples are a good start. For a predictive model, 1,000–5,000. For a complex system, 5,000+. Your PoC partner can advise after a data audit.

Q: Can we run a PoC with dirty, unlabeled data?

A: Yes, but it's harder. You'll spend PoC time cleaning and labeling data instead of building the model. This is why the data audit (Phase 2) is critical. It reveals whether data prep will be the bottleneck.

Q: Can a PoC move to production directly?

A: Rarely. A PoC proves the concept, but it's not production-ready. It lacks error handling, security, monitoring, scalability. Plan a pilot or a "hardening" phase before production.

Q: Who should be in the PoC team?

A: Typically 2–4 people: a project manager (yours or the partner's), a data scientist or ML engineer, a software engineer, and your internal stakeholder (the person who owns the problem). Keep it small and focused.

Q: How do we handle data privacy in a PoC?

A: Use anonymized or synthetic data where possible. If you need real data, ensure proper data governance, GDPR compliance, and encryption. A good PoC partner knows data privacy protocols.

Q: What if we already committed to a full development project? Can we still run a PoC?

A: You can run a "pre-development PoC" to confirm the approach before you spend €200K on full development. It's not ideal, but better late than never.