AI Readiness Assessment: A Complete Guide for Business Leaders

Your executives are asking: "Are we ready for AI?" But readiness isn't a binary yes-or-no answer. It's a multidimensional challenge spanning data quality, team capability, infrastructure, and organizational culture.

Without a structured assessment, most companies make one of two mistakes: they either rush into AI initiatives they're not prepared for, or they forfeit competitive advantage through excessive caution. Digital Colliers' AI readiness assessment framework gives you the clarity to chart the right course.

This guide walks you through a practical, actionable AI readiness assessment—one you can conduct internally or with expert guidance. We'll show you the four critical dimensions, how to score your organization, and what to prioritize first.

For a deeper dive into transforming your business through AI, explore our AI consulting services to understand how we partner with European B2B leaders on AI strategy and implementation.

What Is AI Readiness?

AI readiness measures your organization's ability to successfully adopt, deploy, and scale artificial intelligence systems that deliver measurable business value. It's not about having the best algorithms or the latest GPUs. It's about having the right data, people, systems, and mindset in place.

Companies with high AI readiness share these characteristics:

- Quality data that's clean, labeled, and easily accessible

- Teams with both technical and domain expertise who can bridge business and AI

- Infrastructure that can handle model training, serving, and iteration

- A culture that embraces experimentation and accepts measured risk

Many organizations have some of these strengths but are weak in others. An assessment reveals exactly where the gaps are—and where to invest first for maximum impact.

The Four Dimensions of AI Readiness

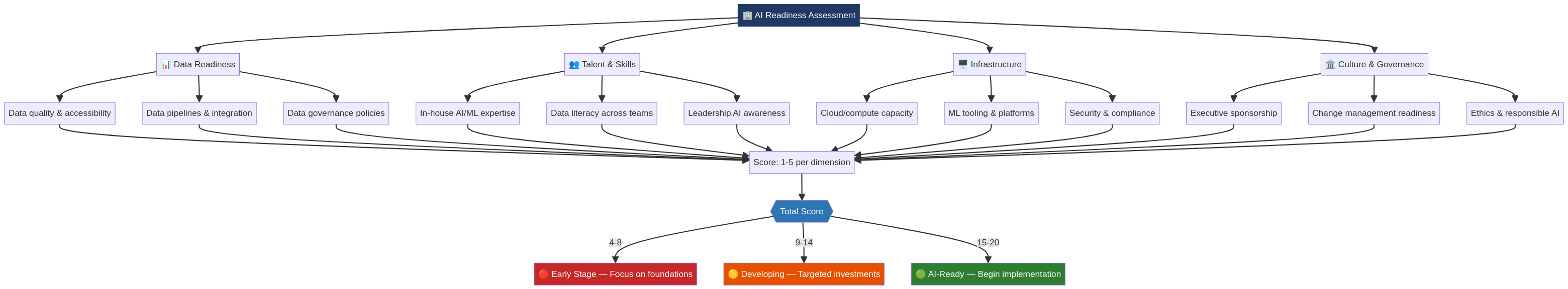

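The AI Readiness Assessment Framework evaluates four core dimensions with 12 sub-criteria, three per dimension. Each sub-criterion is scored 1-5. Averaging the three sub-criterion scores gives each dimension a score of 1-5, and the four dimension scores add up to a total between 4 (Early Stage) and 20 (AI-Ready).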

Dimension 1: Data Readiness

Data is the foundation of every AI system. Without quality data, even the best models produce unreliable outputs. Data readiness has three sub-criteria:

Data Quality & Governance

Start with this question: Do you know where your data lives, who owns it, and whether it's fit for AI use?

Organizations with strong data quality and governance have:

- A single source of truth (master data management)

- Clear ownership and stewardship policies

- Documentation of data lineage and definitions

- Regular audits for accuracy and completeness

- Compliance controls aligned with GDPR and other regulations

Weak data governance is one of the top reasons AI projects fail. If your data is scattered, duplicated, or poorly documented, you'll spend months in data preparation before you can even train a model.

Scoring Guide:

- 1: No data governance; data is siloed across departments

- 2: Basic data governance; some documentation exists

- 3: Moderate governance; most critical data is managed

- 4: Strong governance; clear ownership and processes

- 5: Excellent governance; continuous monitoring and improvement

Data Volume & Accessibility

The volume of data you have matters, but accessibility matters more. Can your teams actually access the data they need without weeks of manual extraction?

Consider these indicators:

- Do you have 12+ months of historical transactional data?

- Are multiple data sources accessible via a central platform?

- Can authorized users query data in hours, not days?

- Is real-time data available for time-sensitive decisions?

Many enterprises have vast data repositories but can't access them efficiently. A well-designed data lake or warehouse dramatically accelerates AI development.

Scoring Guide:

- 1: Limited historical data; highly fragmented

- 2: 6-12 months of data; significant access barriers

- 3: 12-24 months; moderate barriers to access

- 4: 2+ years; good accessibility and tools

- 5: Rich historical and real-time data; easy access

Data Integration & Pipelines

Real-world AI systems need continuous data flows. Integration and pipeline maturity determines how quickly you can operationalize models.

Strong pipeline capabilities include:

- Automated ETL (extract, transform, load) processes

- Real-time or near-real-time data movement

- Data quality checks and alerting

- Scalable infrastructure to handle volume growth

- Version control for datasets (important but often overlooked)

Without mature pipelines, you'll be manually preparing data for every model, which destroys velocity.
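The "data quality checks and alerting" capability listed above can be illustrated with a minimal sketch. It assumes records arrive as a list of dictionaries with an `id` field; the function name `check_quality`, the field names, and the 5% missing-value limit are all hypothetical choices for illustration, not part of the framework.

```python
def check_quality(records, required_fields, max_missing_rate=0.05):
    """Return a list of human-readable alerts for one batch of records."""
    if not records:
        return ["batch is empty"]
    alerts = []
    # Completeness: share of records where a required field is None or empty.
    for field in required_fields:
        missing = sum(1 for r in records if r.get(field) in (None, ""))
        rate = missing / len(records)
        if rate > max_missing_rate:
            alerts.append(f"{field}: {rate:.0%} missing (limit {max_missing_rate:.0%})")
    # Uniqueness: count records that repeat an already-seen id.
    ids = [r.get("id") for r in records]
    duplicates = len(ids) - len(set(ids))
    if duplicates:
        alerts.append(f"{duplicates} duplicate id(s)")
    return alerts


# Hypothetical batch of transactional records.
batch = [
    {"id": 1, "customer": "Acme", "amount": 120.0},
    {"id": 2, "customer": "", "amount": 75.5},
    {"id": 2, "customer": "Globex", "amount": None},
    {"id": 3, "customer": "Initech", "amount": 42.0},
]
for alert in check_quality(batch, required_fields=["customer", "amount"]):
    print("ALERT:", alert)
```

In a real pipeline, a check like this would typically run after each load step and route its alerts to whatever notification channel the team already uses.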

Scoring Guide:

- 1: Manual data preparation; no automation

- 2: Basic scheduled ETL; limited error handling

- 3: Reliable ETL; some automation; manual oversight

- 4: Automated with monitoring; minimal manual work

- 5: Fully automated, resilient pipelines with versioning

Dimension 2: Talent Readiness

Data is worthless without people who can extract insight from it. Talent readiness isn't just about hiring a few machine learning engineers—it's about building a culture where different skill sets align around AI.

AI Skills & Expertise

You need three types of AI talent working together:

ML Engineers & Data Scientists: These roles require deep technical skills (Python, TensorFlow, model optimization). They're scarce and expensive, especially in Central Europe.

Data Engineers: These specialists build and maintain the pipelines, warehouses, and infrastructure that enable ML. They're critical but often overlooked.

Domain Experts: Product managers, business analysts, and subject-matter experts who understand the business problem and can guide the technical team toward solutions that actually matter.

The best organizations don't hire for one skill type and hope it covers everything. They build cross-functional teams where these perspectives collide productively.

Scoring Guide:

- 1: No AI/ML capability; reliance on external consultants

- 2: 1-2 people with basic skills; no depth

- 3: Small dedicated team; some gaps in specialized roles

- 4: Established team across ML, data engineering, and domain expertise

- 5: Mature team; strong depth in multiple areas; internal mentoring

Change Management Capability

Introducing AI into an organization disrupts existing workflows, decision-making processes, and sometimes entire business models. Organizations with poor change management skills fail to gain adoption even when AI systems work perfectly.

Assess your organization's ability to:

- Communicate the vision and rationale for AI initiatives

- Train employees on new tools and processes

- Manage resistance and skepticism constructively

- Measure and celebrate early wins

- Adapt processes based on feedback

This is as much a soft skill as a hard skill. It's about leadership, communication, and psychological safety.

Scoring Guide:

- 1: No structured change management; top-down directives

- 2: Limited change management; some communication

- 3: Moderate capabilities; ad-hoc training

- 4: Structured change programs; clear communication

- 5: Mature program; continuous improvement; employee engagement

Leadership AI Knowledge

Your leadership team doesn't need to code. But they do need enough AI literacy to ask good questions, make informed decisions, and allocate resources wisely.

This includes understanding:

- What AI can and cannot do

- The relationship between data quality and model performance

- Why time to value matters more than perfection

- The long-term investment required for AI transformation

Leaders without this knowledge tend to either oversell AI ("it will fix everything") or dismiss it ("it's just hype").

Scoring Guide:

- 1: Leadership unfamiliar with AI; skeptical

- 2: Awareness exists; limited hands-on knowledge

- 3: Leadership understands basics; some strategic alignment

- 4: Strong AI literacy; active in strategy and decisions

- 5: Deep understanding; can articulate AI vision and priorities

Dimension 3: Infrastructure Readiness

AI systems are computationally intensive. Infrastructure readiness determines whether your organization can actually run models at scale without collapsing under the load.

Computing Infrastructure

Do you have the computational power to train and serve models at reasonable cost and speed?

Key considerations:

- On-premises hardware: High initial cost; greater control; vendor lock-in risks

- Cloud platforms (AWS, Azure, GCP): Lower upfront cost; scale on demand; vendor dependency

- Specialized hardware: GPUs or TPUs dramatically accelerate AI workloads but add complexity

Most European B2B organizations should start with cloud-based solutions. They offer the flexibility to experiment without massive capital investment.

Scoring Guide:

- 1: No cloud or GPU access; traditional IT infrastructure

- 2: Cloud account exists; limited understanding of AI requirements

- 3: Basic cloud setup; can run small experiments

- 4: Well-configured cloud; optimized for ML workloads

- 5: Enterprise-grade infrastructure; cost optimized; scaling built-in

ML Ops & Tooling

ML Ops (machine learning operations) covers the tools and processes for model development, testing, deployment, and monitoring. This is where many organizations struggle: they can train a model on a laptop but can't move it to production.

Essential ML Ops capabilities:

- Model versioning: Track which model is in production, what changed, why

- Experiment tracking: Log hyperparameters, metrics, and results

- CI/CD pipelines: Automated testing before deployment

- Model monitoring: Detect performance degradation in production

- Retraining automation: Keep models fresh as data changes

Without these, you're flying blind. A model that worked perfectly during training can silently fail in production.
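To make "model monitoring" concrete, here is a minimal sketch of one way to detect performance degradation: compare rolling accuracy over recent predictions against the accuracy measured at validation time. It assumes ground-truth labels eventually become available for production predictions. The class name `AccuracyMonitor`, the window size, and the tolerance are hypothetical values chosen for illustration.

```python
from collections import deque


class AccuracyMonitor:
    """Track rolling accuracy over the last `window` labelled predictions."""

    def __init__(self, baseline, window=100, tolerance=0.05):
        self.baseline = baseline    # accuracy measured at validation time
        self.tolerance = tolerance  # allowed drop before flagging degradation
        self.outcomes = deque(maxlen=window)  # oldest outcomes drop off automatically

    def record(self, predicted, actual):
        """Store whether a prediction matched its ground-truth label."""
        self.outcomes.append(predicted == actual)

    def rolling_accuracy(self):
        """Accuracy over the current window, or None if nothing is recorded yet."""
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def degraded(self):
        """True once the window is full and accuracy is below baseline - tolerance."""
        if len(self.outcomes) < self.outcomes.maxlen:
            return False
        return self.rolling_accuracy() < self.baseline - self.tolerance


# Hypothetical stream: 7 correct predictions followed by 3 wrong ones.
monitor = AccuracyMonitor(baseline=0.90, window=10, tolerance=0.05)
for predicted, actual in [(1, 1)] * 7 + [(1, 0)] * 3:
    monitor.record(predicted, actual)
print(monitor.rolling_accuracy(), monitor.degraded())
```

Dedicated ML Ops platforms offer richer versions of this idea, but even a simple threshold check catches the "silent failure" case described above.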

Scoring Guide:

- 1: Ad-hoc model management; no versioning or monitoring

- 2: Basic experiment tracking; manual deployment

- 3: Some tooling in place; inconsistent practices

- 4: Integrated tooling; documented processes; monitoring

- 5: Mature ML Ops; fully automated pipelines; comprehensive monitoring

Security & Compliance

AI systems touch sensitive data and can create compliance risks if not handled carefully.

Critical areas:

- Data security: Are model inputs encrypted? Is training data isolated?

- Model security: Can adversaries fool your model with crafted inputs?

- Audit trails: Can you explain how a model made a decision (important for GDPR and industry regulations)?

- Access controls: Who can deploy models? Who can retrain them?

European organizations especially need to consider GDPR compliance, particularly around data minimization and the rules on automated decision-making, often summarized as a "right to explanation."

Scoring Guide:

- 1: Minimal security controls; compliance gaps

- 2: Basic controls; reactive compliance

- 3: Moderate controls; compliance awareness

- 4: Strong controls; proactive compliance; audit-ready

- 5: Enterprise security; continuous compliance; security reviews built in

Dimension 4: Culture Readiness

The best data, teams, and infrastructure mean nothing if your organization's culture doesn't embrace AI.

AI Adoption Mindset

Does your organization view AI as an opportunity or a threat?

Organizations with strong AI adoption mindsets:

- Recognize AI as a competitive advantage, not a cost center

- Celebrate failures as learning opportunities

- Encourage cross-functional collaboration

- View AI as augmenting human capability, not replacing it

- Invest in continuous learning

Organizations with weak adoption mindsets:

- Fear job displacement

- Expect AI to solve problems without organizational change

- Resist sharing data across departments

- Treat AI as an IT-only issue

This is deeply cultural and requires leadership commitment to shift.

Scoring Guide:

- 1: Fear or skepticism dominates; resistance to change

- 2: Limited enthusiasm; pockets of interest

- 3: Moderate adoption; some champions; some resistance

- 4: Positive overall sentiment; active sponsors

- 5: Strong enthusiasm; top leadership commitment; continuous promotion

Innovation Culture

Can your organization experiment quickly, learn from failures, and iterate?

Indicators of strong innovation culture:

- Structured but rapid experimentation (weekly or monthly iterations)

- Psychological safety to try new approaches and fail

- Time allocation for exploration (not just execution)

- Cross-functional teams with autonomy

- Clear metrics to measure success or failure

Bureaucratic organizations struggle here. Even with great AI talent, they can't move fast enough to extract value.

Scoring Guide:

- 1: Risk averse; long approval cycles; few experiments

- 2: Some experiments; slow decision-making

- 3: Moderate experimentation; reasonable speed

- 4: Active innovation; rapid iteration; clear metrics

- 5: Highly innovative; fast cycles; embedded experimentation

Risk Tolerance & Experimentation

AI projects are inherently uncertain. You won't know if an approach works until you try it. Organizations with low risk tolerance often get stuck in planning mode.

Assess your organization's ability to:

- Make decisions with incomplete information

- Allocate budget for exploratory projects with uncertain ROI

- Support teams that take calculated risks

- Learn from failures without blame culture

- Balance innovation with operational stability

This doesn't mean recklessness. It means informed risk-taking with clear guardrails.

Scoring Guide:

- 1: Risk averse; extensive upfront analysis; slow launches

- 2: Conservative; limited tolerance for uncertainty

- 3: Balanced; reasonable risk appetite

- 4: Comfortable with calculated risk; good judgment

- 5: Entrepreneurial mindset; smart risk-taking; continuous learning

Scoring Your AI Readiness

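Score each of the 12 sub-criteria from 1 to 5, average the three scores within each dimension, and add the four dimension averages. Here's how to interpret the resulting total, which ranges from 4 to 20: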

Scoring Breakdown:

- 4-8 points: Early Stage — You have foundational interest but significant gaps. Start with data governance and building a core AI team. Expect 12-18 months before you're ready for production systems.

- 9-14 points: Developing — You have momentum but need to address specific gaps. Invest in infrastructure, ML Ops tooling, and culture change. You can pilot systems now and move to production in 6-12 months.

- 15-20 points: AI-Ready — You're prepared for serious AI investment. You can move quickly from concept to production. Focus on scaling and expanding AI use cases across the business.
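The arithmetic behind the total can be sketched in a few lines. This example assumes each dimension's score is the average of its three sub-criteria, which is one way to turn twelve 1-5 scores into a 4-20 total. The example scores, the dictionary keys, and the rule that fractional totals between bands fall into the lower band are all hypothetical.

```python
from statistics import mean

# Hypothetical example scores (1-5) for the 12 sub-criteria, grouped by dimension.
scores = {
    "Data": {"quality_governance": 2, "volume_accessibility": 3, "integration_pipelines": 2},
    "Talent": {"skills_expertise": 3, "change_management": 2, "leadership_knowledge": 4},
    "Infrastructure": {"computing": 3, "mlops_tooling": 2, "security_compliance": 3},
    "Culture": {"adoption_mindset": 4, "innovation_culture": 3, "risk_tolerance": 3},
}


def dimension_scores(scores):
    """Average the three sub-criteria in each dimension (assumed aggregation)."""
    return {dim: mean(list(subs.values())) for dim, subs in scores.items()}


def readiness_band(total):
    """Map a 4-20 total to a band.

    Assumption: fractional totals between bands fall into the lower band.
    """
    if not 4 <= total <= 20:
        raise ValueError("total must be between 4 and 20")
    if total < 9:
        return "Early Stage"
    if total < 15:
        return "Developing"
    return "AI-Ready"


dims = dimension_scores(scores)
total = sum(dims.values())
print(f"Total: {total:.1f} -> {readiness_band(total)}")
```

Keeping the per-dimension averages alongside the total is useful, because the total alone hides which dimension is holding the organization back.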

How to Conduct Your Assessment

Step 1: Assemble Your Team

Bring together representatives from:

- Data and analytics

- Engineering and infrastructure

- Business/product

- Leadership

- HR (for talent dimension)

This diversity ensures you're evaluating readiness honestly, not through a single department's lens.

Step 2: Score Each Sub-Criterion

For each of the 12 criteria, discuss honestly where your organization stands. Use the scoring guides above. If team members disagree on a score, that's information: it usually reveals gaps in how different parts of the organization perceive readiness.
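One way to surface those differences in perception is to collect scores from each participant separately before the discussion, then look at the spread per criterion. The sketch below is illustrative only: the rater groups, the scores, and the two-point threshold are hypothetical.

```python
# Hypothetical ratings: sub-criterion -> score (1-5) given by each group.
ratings = {
    "Data Quality & Governance": {"data_team": 2, "engineering": 2, "leadership": 4},
    "ML Ops & Tooling": {"data_team": 3, "engineering": 3, "leadership": 3},
    "AI Adoption Mindset": {"data_team": 2, "engineering": 3, "leadership": 5},
}


def flag_disagreements(ratings, min_spread=2):
    """Return criteria whose highest and lowest ratings differ by at least min_spread."""
    flagged = {}
    for criterion, by_rater in ratings.items():
        spread = max(by_rater.values()) - min(by_rater.values())
        if spread >= min_spread:
            flagged[criterion] = spread
    return flagged


for criterion, spread in flag_disagreements(ratings).items():
    print(f"{criterion}: spread of {spread} points, worth discussing")
```

Criteria with a large spread are good candidates for the first items on the workshop agenda.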

Step 3: Identify Your Lowest-Scoring Dimension

Your weakest dimension should be your first priority. If Data Readiness scores 1 but Culture Readiness scores 4, the strong culture can't deliver results until you fix the data.
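As a small illustration, ranking the dimensions from weakest to strongest makes the first priority explicit. The dimension scores below are hypothetical, and `prioritize` is an illustrative helper name.

```python
# Hypothetical dimension scores (1-5 each).
dimension_scores = {"Data": 1.7, "Talent": 3.0, "Infrastructure": 2.7, "Culture": 4.0}


def prioritize(dimension_scores):
    """Sort dimensions from weakest to strongest; the first entry is the priority."""
    return sorted(dimension_scores.items(), key=lambda item: item[1])


ranked = prioritize(dimension_scores)
weakest, score = ranked[0]
print(f"First priority: {weakest} ({score})")
```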

Step 4: Build a 6-Month Roadmap

For your lowest-scoring dimension, outline specific actions:

- What needs to change? (Current state)

- Why? (Business impact)

- Who owns it? (Clear accountability)

- What's the measure of success? (Specific metrics)

- By when? (Realistic timeline)

Step 5: Re-Assess Every 6 Months

AI readiness isn't static. Re-run this assessment twice a year. Track progress, celebrate wins, and adjust priorities as the landscape changes.

Common Patterns We See

The "Data-Rich, Talent-Poor" Organization: Has excellent data infrastructure but can't find or retain AI talent. Solution: Partner with external AI services, build internal mentoring, create a compelling mission.

The "AI-Curious-But-Unaligned" Organization: Has talented data scientists but lacks clear business problems to solve and executive sponsorship. Solution: Run structured innovation sprints, tie AI initiatives to business metrics, secure leadership buy-in.

The "Infrastructure-First" Organization: Builds sophisticated ML Ops before validating a single AI idea. Solution: Reduce scope initially, prove value on one use case, then invest heavily in infrastructure.

The "All-In" Organization: Ready across all dimensions and moves aggressively. These organizations become industry leaders in AI. They also fail spectacularly sometimes—the key is learning velocity, not success rate.

Next Steps

Once you've assessed your organization:

- Share findings with leadership. This assessment is a tool for conversation, not judgment.

- Identify your highest-impact quick wins. Which readiness gaps can you address fastest?

- Consider external partnership. If multiple dimensions are weak, partnering with an experienced AI consulting firm can accelerate progress.

At Digital Colliers, we've guided dozens of European B2B organizations through this assessment and the transformation that follows. We help you clarify your AI readiness, build a realistic roadmap, and execute on it with your team.

Learn more about how we support AI readiness and implementation for organizations across Europe.

FAQ

Q: How long does an AI readiness assessment take? A: A thorough assessment with team discussion takes 2-4 weeks. You can get a preliminary score in a workshop or two, but real clarity comes from reflection and data gathering.

Q: What if we score poorly—is it too late to start with AI? A: No. The assessment's purpose is to reveal starting points, not to judge readiness as good or bad. Early-stage scores (4-8) mean you need a different approach: start with a specific problem, build capability as you go, and don't try to boil the ocean.

Q: Do we need to score 15+ before starting any AI project? A: Not necessarily. Many organizations start with pilot projects before reaching full AI readiness. But understand that lower readiness scores mean slower delivery, higher costs, and higher failure risk. Plan accordingly.

Q: How does this framework apply to AI adoption in specific departments? A: You can run this assessment at department level, division level, or enterprise level. Department-level assessments often reveal surprising gaps that hold back company-wide progress.

Q: What's the most common readiness bottleneck you see? A: Talent and culture, especially in Europe where AI skills are scarce. Data quality is second. Infrastructure is rarely the blocker, since cloud platforms have removed most of the upfront barriers. It's almost always people and process.

Q: Should we hire consultants to run this assessment, or do it ourselves? A: You can do it internally with honesty and cross-functional input. External consultants add value by benchmarking against other organizations, bringing frameworks and experience, and validating findings when there's internal disagreement. Consider a hybrid: run it internally first, then validate with an external perspective.