Generative AI Consulting: What Businesses Need to Know in 2026

Generative AI hype peaked somewhere between ChatGPT's 100 million users and the tenth article declaring that generative AI will automate 300 million jobs by 2027. Yet the hype masks a real question: How do we actually deploy generative AI to create business value?

Most organizations have experimented with ChatGPT. Marketing teams use it for brainstorming. Engineers ask it for code snippets. Support teams explore AI chatbots. But experiments are not deployments. Deployments require architecture decisions, governance, integration with existing systems, adaptation to your data (through retrieval or fine-tuning), and compliance, especially in the EU.

Generative AI consulting helps organizations move from ChatGPT experiments to production systems that create measurable value while managing risk, cost, and regulatory exposure.

This guide covers the real use cases where generative AI delivers ROI, how to evaluate your organization's readiness, the build-vs-buy-vs-fine-tune decision framework, and how emerging EU AI Act rules reshape generative AI deployment.

What Is Generative AI and Why It's Different

Generative AI creates new content: text, images, code, video. Large Language Models (LLMs) like GPT-4, Claude, and Llama are the core technology for text and code; image and video generation typically relies on diffusion models.

Why it's different from traditional AI:

- Traditional AI analyzes existing data (classification, prediction, optimization). Requires labeled training data. High accuracy bar. Examples: fraud detection, churn prediction, medical imaging.

- Generative AI creates novel outputs from minimal input. Requires huge pre-trained models. Accuracy is fuzzy—outputs are probabilistic. Examples: content writing, code generation, customer service chatbots.

Key capabilities of modern LLMs:

- Language understanding: Comprehending context, nuance, intent

- Reasoning: Multi-step problem solving

- Code generation: Writing working code from natural-language descriptions

- Summarization: Distilling long documents to key points

- Translation: High-quality translation across languages

- Conversation: Natural back-and-forth dialogue

- Instruction following: Executing complex multi-step requests

The gap between ChatGPT and enterprise AI consulting is the gap between "I asked AI to write my email" and "Our organization deployed generative AI to reduce customer support costs by 30% while maintaining SLA compliance."

Where Generative AI Creates Real Business Value

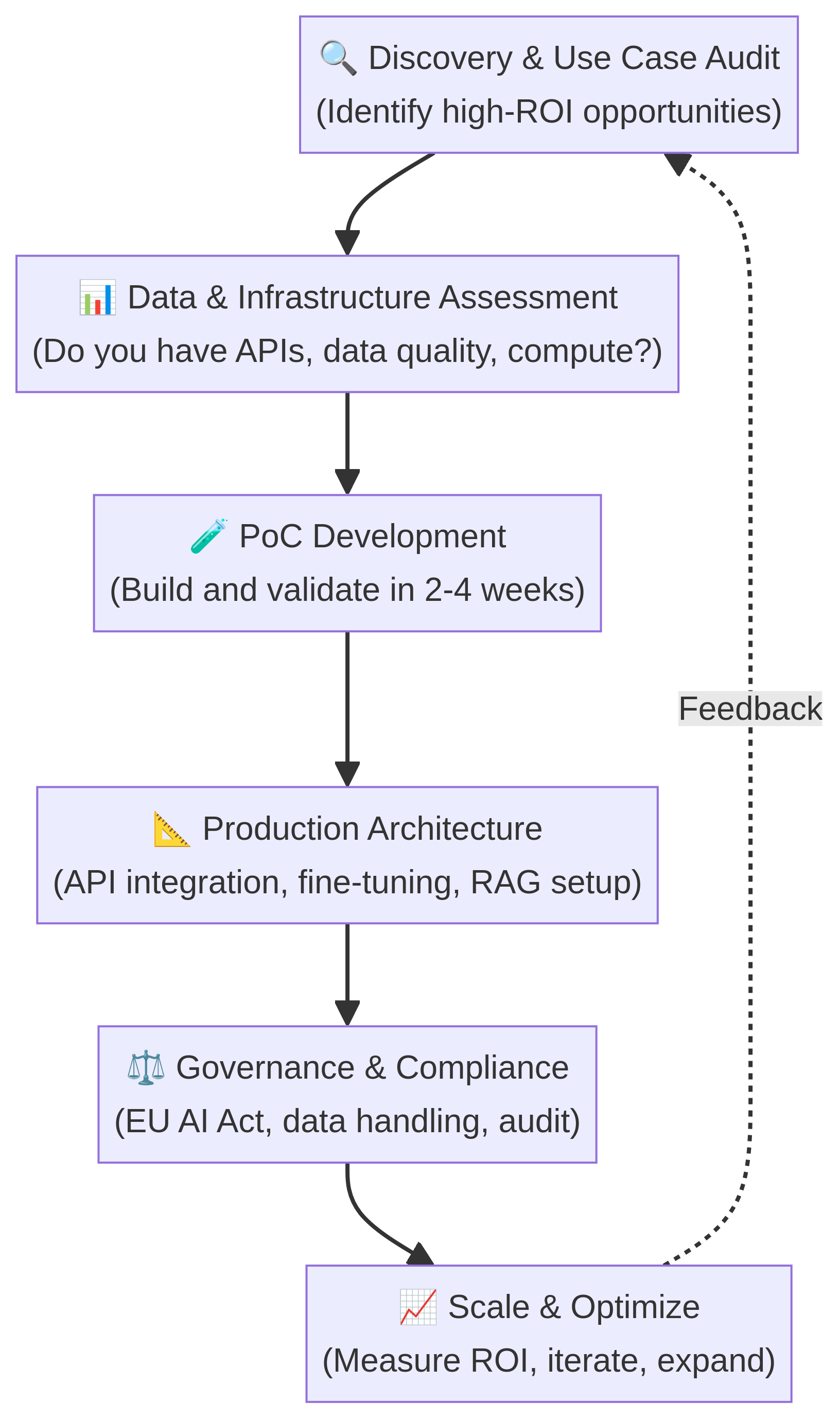

The engagement flowchart above shows how organizations typically explore generative AI.

Not all organizations are at the same stage. But the sequence is consistent.

Use Case 1: Customer Support and Service Automation

Problem: Support teams field repetitive questions (password reset, billing, returns, FAQ). Each ticket costs $5–$15 to handle. Volume is 10,000+ tickets/month.

GenAI Solution: Deploy an LLM-powered chatbot that handles 40–60% of tickets independently (password reset, order tracking, returns initiation). Complex issues route to humans.

Implementation:

- Use commercial API (OpenAI, Anthropic, Google) + your company knowledge base

- Or: Fine-tune open-source model (Llama) on your past support interactions

- Or: Implement RAG (Retrieval-Augmented Generation): chatbot queries your knowledge base + retrieves relevant docs before answering
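The RAG option above can be sketched in a few lines of Python. This is a minimal illustration, not a production design: it assumes a tiny in-memory knowledge base with hypothetical articles, and it scores relevance with word-count cosine similarity where a real system would use an embedding model and a vector index. `vectorize`, `retrieve`, and `build_prompt` are illustrative names, not a library API.

```python
import math
import re
from collections import Counter

# Hypothetical knowledge-base articles; in practice these come from your help center.
KNOWLEDGE_BASE = {
    "reset-password": "To reset your password, open Settings, choose Security, and click Reset password.",
    "refund-policy": "Refunds are available within 30 days of purchase for annual plans.",
    "order-tracking": "Track your order from the Orders page using the tracking number in your email.",
}


def vectorize(text: str) -> Counter:
    """Lower-cased word counts: a crude stand-in for an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[word] * b[word] for word in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question: str, k: int = 2) -> list[tuple[str, float]]:
    """Rank articles by similarity to the question and keep the top k."""
    query = vectorize(question)
    scored = [(doc_id, cosine(query, vectorize(text))) for doc_id, text in KNOWLEDGE_BASE.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)[:k]


def build_prompt(question: str, k: int = 2) -> str:
    """Paste the retrieved articles into the prompt so the LLM answers from them."""
    context = "\n".join(f"[{doc_id}] {KNOWLEDGE_BASE[doc_id]}" for doc_id, _ in retrieve(question, k))
    return (
        "Answer using only the articles below. If they do not contain the answer, "
        "say so and offer to hand the customer over to a human agent.\n\n"
        f"{context}\n\nCustomer question: {question}"
    )


print(build_prompt("How do I reset my password?"))
```

The returned string is what you would send to the commercial API of your choice. Swapping in real embeddings changes retrieval quality but not the shape of the flow, and the hand-over instruction is one simple way to route complex issues to humans.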

ROI:

- Reduce support cost by 20–30% (fewer human agents needed)

- Improve CSAT by 10–15% (faster response time, 24/7 availability)

- Payback: 2–4 months for mid-market

Real example: A European SaaS company with 2,000 support tickets/month deployed an AI chatbot that resolved 50% of tickets without human involvement, freeing the support team for complex issues. It saved $500K annually; implementation cost $150K.

Use Case 2: Content Generation and Marketing

Problem: Marketing needs blog posts, email copy, social content. Hiring freelance writers costs $5K–$20K per article. Time-to-publish is 2–4 weeks. Scaling content is expensive.

GenAI Solution:

- Generate blog outlines, first drafts, email subject lines using LLMs

- Humans edit, fact-check, and finalize (quality control)

- Result: 70% faster time-to-publish, 50% lower cost

Implementation:

- Start with commercial API (ChatGPT, Claude, Gemini) for experimentation

- Move to fine-tuned model if you need consistent brand voice/style

- Automate workflow: Marketer types brief → AI generates 5 subject lines → Marketer selects → AI drafts email → Editor reviews → Send
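The automated workflow in the last bullet is a pipeline with two human checkpoints. A minimal sketch, assuming a hypothetical `llm` callable (any function that takes a prompt and returns text, such as a thin wrapper around your vendor's SDK) and modeling the marketer and editor as callbacks; the prompts are illustrative:

```python
from typing import Callable

# Hypothetical hooks: wire LLM to your vendor SDK and the two human steps to your review tool.
LLM = Callable[[str], str]
Chooser = Callable[[list[str]], str]
Reviewer = Callable[[str], str]


def email_pipeline(brief: str, llm: LLM, marketer_pick: Chooser,
                   editor_review: Reviewer, n_subjects: int = 5) -> dict[str, str]:
    """Brief -> AI subject lines -> marketer selects -> AI drafts -> editor reviews -> ready to send."""
    # The model proposes subject lines, one per line of its reply.
    reply = llm(f"Write {n_subjects} email subject lines, one per line, for this brief:\n{brief}")
    subjects = [line.strip() for line in reply.splitlines() if line.strip()][:n_subjects]
    # A human picks the subject; the model never decides on its own.
    subject = marketer_pick(subjects)
    # The model drafts the body around the chosen subject.
    draft = llm(f"Draft a marketing email titled '{subject}' for this brief:\n{brief}")
    # The editor fact-checks and returns the final text before anything is sent.
    return {"subject": subject, "body": editor_review(draft)}


# Usage with stand-ins for the model and the two people in the loop.
def fake_llm(prompt: str) -> str:
    if prompt.startswith("Write"):
        return "Spring sale is here\nSave 20% this week\nLast chance: spring deals"
    return "Hi there, our spring sale starts today."


result = email_pipeline("Spring sale, 20% off", fake_llm,
                        marketer_pick=lambda options: options[1],
                        editor_review=lambda draft: draft + " (edited)")
print(result)
```

Keeping the human steps as explicit parameters makes it hard to ship a draft that skipped editorial review.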

ROI:

- 50–60% reduction in time-to-publish

- 40–50% reduction in freelance writing cost

- Publish 4x more content with same budget

Pitfall: AI-generated content that reads like AI (hollow, clichéd, inaccurate) damages brand. Human review is non-negotiable.

Use Case 3: Code Generation and Software Development

Problem: Developers spend 20–30% of time on boilerplate code, bug fixes, and refactoring. Hiring senior engineers is expensive and slow.

GenAI Solution: AI pair programmer (GitHub Copilot, Claude, etc.) generates code suggestions, functions, tests.

Impact:

- 30–50% reduction in development time for common tasks

- Developers focus on architecture, design, complex logic

- Junior developers ramp faster with AI scaffolding

Implementation:

- GitHub Copilot integrates directly into the IDE

- Or: Prompt commercial LLM APIs with code context

- Workflow: Dev writes function signature → AI suggests implementation → Dev reviews/modifies → Tests run
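A useful guardrail for this workflow is to let the developer own the contract (signature and test cases) and accept an AI suggestion only when it passes those tests. The sketch below assumes a hypothetical IBAN-formatting helper and two hypothetical suggestions for its body; the point is the gate, not the helper.

```python
from typing import Callable

# Developer-written contract: input/expected-output cases for a hypothetical helper
# that normalizes IBANs to compact upper-case form.
CASES = [
    (" pl61 1090 1014 0000 0712 1981 2874 ", "PL61109010140000071219812874"),
    ("de89\t3704 0044\n0532 0130 00", "DE89370400440532013000"),
]


def suggestion_a(raw: str) -> str:
    """First AI suggestion: strips spaces only, so tabs and newlines survive."""
    return raw.replace(" ", "").upper()


def suggestion_b(raw: str) -> str:
    """Revised suggestion: str.split() with no argument splits on any whitespace."""
    return "".join(raw.split()).upper()


def accept_suggestion(candidate: Callable[[str], str], cases: list[tuple[str, str]]) -> bool:
    """Accept an AI-suggested implementation only if every test case passes."""
    return all(candidate(given) == expected for given, expected in cases)


for name, candidate in [("suggestion_a", suggestion_a), ("suggestion_b", suggestion_b)]:
    print(name, "accepted" if accept_suggestion(candidate, CASES) else "rejected")
```

Running it rejects `suggestion_a`, because the tab and newline in the second case survive, and accepts `suggestion_b`. The review step still matters: tests only catch what the developer thought to test.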

ROI:

- 20–30% faster sprint velocity

- Fewer bugs (AI helps generate unit tests, which developers still review)

- Faster onboarding for junior developers

Real example: A Polish software firm with 20 developers deployed Copilot. Measured 25% velocity gain. Reduced onboarding time from 12 weeks to 8 weeks.

Use Case 4: Document Processing and Data Extraction

Problem: Finance teams review contracts, extract key terms (dates, amounts, obligations). Legal teams triage incoming documents. Insurance companies process claims. All involve manual document analysis.

GenAI Solution: LLMs extract structured data from unstructured documents. "Extract from this contract: signature date, payment terms, liability cap, renewal clause."

Implementation:

- Use document-focused fine-tuned models (not vanilla ChatGPT)

- Combine with RAG: for each document query, retrieve similar past documents to provide context

- Implement human review loop: AI extracts, human verifies, feedback retrains model
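The extraction step and the review loop fit together roughly as follows. This sketch assumes the model is asked for a JSON object with the four fields from the example instruction above; the field names, the prompt wording, and the two queue labels are hypothetical. Validation does not replace human verification; it only decides which extractions reviewers see first.

```python
import json
from datetime import date

# Fields from the example instruction above; extend per document type.
REQUIRED_FIELDS = ("signature_date", "payment_terms", "liability_cap", "renewal_clause")

EXTRACTION_PROMPT = (
    "Extract these fields from the contract and reply with a JSON object only, "
    "using null for any field the contract does not contain: " + ", ".join(REQUIRED_FIELDS)
)


def validate_extraction(raw_reply: str) -> tuple[dict, list[str]]:
    """Parse the model's JSON reply and list every problem a reviewer should check."""
    try:
        data = json.loads(raw_reply)
    except json.JSONDecodeError:
        return {}, ["reply is not valid JSON"]
    if not isinstance(data, dict):
        return {}, ["reply is not a JSON object"]
    problems = [f"missing {field}" for field in REQUIRED_FIELDS if data.get(field) in (None, "")]
    if data.get("signature_date"):
        try:
            date.fromisoformat(str(data["signature_date"]))
        except ValueError:
            problems.append("signature_date is not an ISO date (YYYY-MM-DD)")
    return data, problems


def review_priority(raw_reply: str) -> str:
    """Every extraction is still human-verified; flagged ones go to the front of the queue."""
    _, problems = validate_extraction(raw_reply)
    return "priority_review" if problems else "standard_review"


reply = '{"signature_date": "2025-03-14", "payment_terms": "Net 30", "liability_cap": null}'
print(validate_extraction(reply)[1], review_priority(reply))
```

Reviewer corrections to flagged fields are exactly the labeled examples the retraining step in the last bullet needs.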

ROI:

- 60–80% reduction in manual document review time

- 5–10% improvement in accuracy (fewer missed clauses/data)

- Payback: 3–6 months

Use Case 5: Research and Knowledge Synthesis

Problem: Teams need to synthesize information from 100+ documents, research reports, customer interviews. Humans spend weeks distilling insights.

GenAI Solution: Feed documents to LLM with instruction: "Summarize these 50 customer reviews. What are the top 5 pain points? Group by priority."

Implementation:

- Use RAG to handle large document sets (an LLM can't fit them all in its context window)

- Or: Use commercial API's batch processing for cost efficiency

- Workflow: Upload documents → AI summarizes with specific questions → Human reviews and adds context
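When the goal is to summarize an entire corpus rather than answer a narrow question, a common complement to RAG is map-reduce summarization: split the documents into chunks that fit the context window, ask the question of each chunk, then merge the partial answers. A minimal sketch, assuming a hypothetical `llm` callable and using word count as a rough stand-in for tokens; the 3,000-word default is an arbitrary placeholder to tune for your model:

```python
from typing import Callable

LLM = Callable[[str], str]  # hypothetical: prompt in, text out (wrap your vendor SDK here)


def chunk_documents(documents: list[str], max_words: int) -> list[str]:
    """Pack documents into chunks of at most max_words words.

    Word count is a rough proxy for tokens; long documents are split on word boundaries.
    """
    chunks: list[str] = []
    current: list[str] = []
    size = 0
    for doc in documents:
        words = doc.split()
        for start in range(0, len(words), max_words):
            piece = words[start:start + max_words]
            # Close the current chunk if this piece would overflow it.
            if current and size + len(piece) > max_words:
                chunks.append("\n\n".join(current))
                current, size = [], 0
            current.append(" ".join(piece))
            size += len(piece)
    if current:
        chunks.append("\n\n".join(current))
    return chunks


def map_reduce_summary(documents: list[str], question: str, llm: LLM, max_words: int = 3000) -> str:
    """Map: ask the question of each chunk. Reduce: merge the partial answers."""
    partials = [llm(f"{question}\n\nDocuments:\n{chunk}") for chunk in chunk_documents(documents, max_words)]
    joined = "\n\n".join(f"Partial answer {i + 1}:\n{p}" for i, p in enumerate(partials))
    return llm(f"Merge these partial answers into one, removing duplicates. Question: {question}\n\n{joined}")


# Toy run with a stand-in model that records each prompt it receives.
calls: list[str] = []


def fake_llm(prompt: str) -> str:
    calls.append(prompt)
    return f"summary {len(calls)}"


answer = map_reduce_summary(["a b c", "d e", "f g h i j"], "What are the top 5 pain points?", fake_llm, max_words=4)
print(len(calls), answer)
```

The toy run makes four map calls and one reduce call. The map calls are independent, which is what makes batch APIs a good fit; if the merged partial answers are themselves too long, the reduce step can be applied recursively.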

ROI:

- 70–80% reduction in research synthesis time

- Broader coverage (every document is analyzed, not just a sample)

- Faster decision-making

The ROI Calculation: When GenAI Makes Financial Sense

Not every use case is ROI-positive. Here's the math:

GenAI ROI = (Labor Cost Saved + Efficiency Gain) - (Implementation Cost + Ongoing Cost)

Labor Cost Saved: If an LLM can do 50% of an $80K/year employee's work, labor savings = $40K/year.

Efficiency Gain: If content team publishes 4x more content with same budget, the incremental revenue from that content is upside.

Implementation Cost: $50K–$200K (setup, fine-tuning, integration, compliance).

Ongoing Cost: $2K–$10K/month (API costs, maintenance, monitoring).
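The formula above translates directly into two small helpers. This sketch assumes constant monthly figures and ignores discounting; `payback_months` and `first_year_roi` are illustrative names, and "Efficiency Gain" is passed in as an upside figure you estimate yourself.

```python
import math


def payback_months(implementation_cost: float, monthly_savings: float,
                   monthly_ongoing_cost: float, monthly_upside: float = 0.0) -> float:
    """Months until cumulative net benefit covers the one-time implementation cost."""
    net_monthly = monthly_savings + monthly_upside - monthly_ongoing_cost
    if net_monthly <= 0:
        return math.inf  # the project never pays back
    return implementation_cost / net_monthly


def first_year_roi(implementation_cost: float, annual_savings: float,
                   annual_upside: float, annual_ongoing_cost: float) -> float:
    """First-year net benefit per the formula above: benefits minus all costs."""
    return annual_savings + annual_upside - implementation_cost - annual_ongoing_cost


# Support chatbot (Example 1): $200K build, $50K/month saved, $5K/month to run.
print(round(payback_months(200_000, 50_000, 5_000), 1))  # -> 4.4
# Content generation (Example 2): first-year figures in dollars.
print(first_year_roi(50_000, 50_000, 200_000, 24_000))  # -> 176000
```

Keeping the upside as a separate argument makes it easy to check whether a project still pays back when the speculative revenue is set to zero.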

Example 1: Customer Support Chatbot

- Current support cost: $100K/month (20 agents, fully loaded)

- AI handles 50% of tickets (freeing 10 agents)

- Labor savings: $50K/month

- Implementation: $200K (one-time)

- Ongoing cost: $5K/month (API + monitoring)

- Net monthly benefit: $50K - $5K = $45K

- Payback: $200K / $45K ≈ 4.4 months

Example 2: Content Generation

- Current freelance writing: $100K/year for 24 blog posts

- AI reduces time-to-write by 60%, publish 4x more

- Cost per piece drops from $4,166 to $1,667

- Cost savings: $50K/year

- Revenue upside from 4x more content: $200K/year (if properly marketed)

- Implementation: $50K

- Ongoing cost: $2K/month

- First-year ROI: ($50K + $200K) - $50K - $24K = $176K

- Payback: $50K / (($250K - $24K) / 12) ≈ 2.7 months

Example 3: Senior Engineer Replacement (false hope)

- "GenAI will replace my senior engineers"

- Reality: AI generates boilerplate code, not architecture decisions

- Actual ROI: 25–30% velocity gain, not 100% replacement

- Don't expect GenAI to eliminate senior roles; expect to do more with fewer mid-level hires

Build vs. Buy vs. Fine-Tune: The Decision Framework

Every organization asks the same question: "Should we use ChatGPT, or build our own model?"

Here's the decision tree:

Option 1: Use Commercial LLM API (ChatGPT, Claude, Gemini)

When:

- You don't have proprietary data requiring secrecy

- Speed-to-market is critical (you need value in weeks, not months)

- Your use case doesn't require specialized domain knowledge

Pros:

- Fastest to value (1–2 weeks to first deployment)

- Lowest upfront cost ($0–$50K)

- No model training required

- Automatic updates from vendor (new capabilities)

Cons:

- Your data goes to an external vendor (privacy concern)

- Vendor can change API terms, pricing, capabilities

- May not match your specific domain (legal, medical, financial jargon)

- Per-call costs add up at massive scale (1B calls/month)

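Cost: roughly $0.01–$0.10 per API call, depending on the model and prompt length (vendors bill per input and output token). For 10K support tickets/month at one call per ticket, that is $100–$1,000/month.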
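Because vendors bill per token rather than per call, a per-call figure depends on prompt and answer length. The sketch below estimates monthly cost from token counts; the token counts and the $3 / $15 per-million-token prices are hypothetical placeholders, so substitute your vendor's current price list and your own measured averages.

```python
def monthly_api_cost(calls_per_month: int, input_tokens_per_call: int, output_tokens_per_call: int,
                     usd_per_million_input: float, usd_per_million_output: float) -> float:
    """Token-based monthly API cost; input and output tokens are priced separately."""
    cost_per_call = (input_tokens_per_call * usd_per_million_input
                     + output_tokens_per_call * usd_per_million_output) / 1_000_000
    return calls_per_month * cost_per_call


# Hypothetical figures: 10K tickets, one call each, ~2,000 prompt tokens (question plus
# retrieved articles), ~500 answer tokens, placeholder prices of $3 / $15 per million tokens.
estimate = monthly_api_cost(10_000, 2_000, 500, 3.0, 15.0)
print(f"${estimate:,.2f} per month")
```

With these placeholders the estimate comes to about $135 per month. Multi-turn conversations multiply the number of calls per ticket, and RAG increases the input tokens per call, so measure both before budgeting.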

Option 2: Fine-Tune an Open-Source Model (Llama, Mistral, etc.)

When:

- You have proprietary data you won't share with vendor

- You need specialized domain performance (your data + model training)

- You're handling sensitive information (patient, legal, financial)

- Scale justifies training cost (100M+ predictions/month)

Pros:

- Full data privacy (runs on your servers)

- Customizable to your domain/style

- No per-call vendor pricing

- Long-term cost-effective at scale

Cons:

- Requires ML engineering (4–6 person-months)

- Upfront cost: $200K–$500K

- Ongoing maintenance: model retraining, serving infrastructure

- Performance may be 5–15% lower than commercial models

Cost: $200K–$500K implementation + $50K–$200K/year infrastructure.

Option 3: Hybrid: Use Commercial API for Experimentation, Fine-Tune for Production

When: (Recommended for most enterprises)

- Experiment with ChatGPT to prove value

- Once use case is proven, fine-tune open model for production

- Use API for lower-priority tasks; fine-tuned model for core workflows

Workflow:

- Weeks 1–4: Proof-of-concept with ChatGPT API

- Weeks 5–8: Collect real data, prepare training set

- Weeks 9–20: Fine-tune Llama or Mistral model

- Week 21+: A/B test fine-tuned vs. ChatGPT, migrate to fine-tuned if better
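Before a live A/B test, the comparison in the final step usually starts offline: score both models on the same held-out set of real questions with known answers. A minimal sketch, assuming both models are wrapped as hypothetical question-to-answer callables and using exact-match accuracy, which is a simplification (free-text answers usually need rubric-based or human grading); the 2-point `min_gain` is an arbitrary placeholder:

```python
from typing import Callable

Model = Callable[[str], str]  # hypothetical: question in, answer out


def accuracy(model: Model, eval_set: list[tuple[str, str]]) -> float:
    """Share of held-out questions answered exactly right (case- and whitespace-insensitive)."""
    if not eval_set:
        raise ValueError("eval_set must not be empty")
    hits = sum(model(q).strip().lower() == expected.strip().lower() for q, expected in eval_set)
    return hits / len(eval_set)


def choose_production_model(api_model: Model, fine_tuned: Model,
                            eval_set: list[tuple[str, str]], min_gain: float = 0.02) -> str:
    """Migrate only if the fine-tuned model beats the API baseline by at least min_gain."""
    api_score = accuracy(api_model, eval_set)
    tuned_score = accuracy(fine_tuned, eval_set)
    return "fine_tuned" if tuned_score >= api_score + min_gain else "api"


# Toy held-out set and two stand-in models.
EVAL_SET = [("Refund window?", "30 days"), ("Plan with SSO?", "Enterprise"),
            ("Support hours?", "24/7"), ("Data region?", "EU")]
api_answers = {"Refund window?": "30 days", "Plan with SSO?": "Business",
               "Support hours?": "24/7", "Data region?": "US"}
tuned_answers = dict(EVAL_SET)

print(choose_production_model(lambda q: api_answers[q], lambda q: tuned_answers[q], EVAL_SET))
```

Requiring a minimum gain, rather than any gain, avoids migrating on noise from a small evaluation set.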

Generative AI Readiness Assessment

Not every organization is ready for generative AI. Before investing, assess your readiness across five dimensions:

1. Data Readiness

Question: Do you have clean, accessible data to fine-tune or augment LLM performance?

Assessment:

- ✅ Excellent: Data warehouse with clean, unified datasets. API access for LLM to query.

- ✅ Good: Structured data in multiple systems with documented schemas.

- ⚠️ Moderate: Data scattered across systems, some quality issues, manual export required.

- ❌ Poor: Data in spreadsheets, inconsistent, no central repository.

Recommendation:

- Excellent/Good: Ready for advanced GenAI (fine-tuning, RAG)

- Moderate: Can start with APIs; plan data consolidation

- Poor: Invest 2–3 months in data cleaning before GenAI

2. Infrastructure and Technical Readiness

Question: Can you deploy and run LLM-powered systems?

Assessment:

- ✅ Cloud-native: Runs on AWS/Azure/GCP, APIs, microservices. Can scale compute on demand.

- ✅ Mature on-premise: Has Kubernetes or virtualization. Can run models on GPUs.

- ⚠️ Legacy systems: Monolithic architecture, manual scaling, limited cloud integration.

- ❌ Monolithic on-premise: No API layer, manual scaling, resistance to cloud.

Recommendation:

- Cloud-native/Mature: Ready for production GenAI

- Legacy: Plan 2–3 month modernization; start with cloud-hosted APIs

- Monolithic: Quick wins with APIs; plan long-term infrastructure modernization

3. Governance and Risk Maturity

Question: Does your organization have frameworks for managing AI risk, data governance, audit trails?

Assessment:

- ✅ Advanced: Data governance board, AI governance policy, compliance framework for GDPR/AI Act.

- ✅ Intermediate: Data access controls, some audit logging, privacy by design.

- ⚠️ Basic: Some access controls, limited audit trails, no formal governance.

- ❌ None: No governance, access controls, or audit framework.

Recommendation:

- Advanced/Intermediate: Ready for regulated/high-risk GenAI use cases

- Basic: Restrict to low-risk applications; build governance before scaling

- None: Can experiment with ChatGPT; must establish governance before production

4. Budget and Timeline Readiness

Question: Can you fund GenAI initiatives and commit to 3–6 month timelines?

Assessment:

- ✅ Ready: $500K–$2M annual budget, willing to invest for 6+ months

- ✅ Moderate: $100K–$500K, willing to invest for 3–6 months

- ⚠️ Limited: $20K–$100K, expecting ROI in weeks

- ❌ Not ready: < $20K or expecting overnight transformation

Recommendation:

- Ready/Moderate: Launch full GenAI program

- Limited: Pilot single use case (support or content), prove ROI before scaling

- Not ready: Experiment with free ChatGPT for 2–3 months, build internal buy-in

5. Organizational Change Readiness

Question: Are teams prepared for AI-augmented workflows?

Assessment:

- ✅ Ready: Business leadership champions AI, teams actively request AI tools, change management experience.

- ✅ Moderate: Some skepticism, gradual adoption, training needed.

- ⚠️ Reluctant: "AI will replace us" fears, no executive champion, resistance to change.

- ❌ Not ready: Active resistance, no executive support, "we do things the old way" culture.

Recommendation:

- Ready/Moderate: Launch GenAI program with training and change management

- Reluctant: Start with visible, non-threatening pilots; build examples and case studies

- Not ready: Begin awareness campaign; share external examples; get executive champion first
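The five dimensions above can be turned into a quick self-assessment. This sketch rests on one assumption of ours: the weakest dimension sets the starting point, since a strong budget does not compensate for missing governance. The level labels come from the checklists above; the scoring and stage wording are illustrative.

```python
# Levels per dimension, best first, copied from the checklists above (two ✅, then ⚠️, then ❌).
DIMENSIONS = {
    "data": ["Excellent", "Good", "Moderate", "Poor"],
    "infrastructure": ["Cloud-native", "Mature on-premise", "Legacy systems", "Monolithic on-premise"],
    "governance": ["Advanced", "Intermediate", "Basic", "None"],
    "budget": ["Ready", "Moderate", "Limited", "Not ready"],
    "change": ["Ready", "Moderate", "Reluctant", "Not ready"],
}


def assess(answers: dict[str, str]) -> dict:
    """Score each dimension 3 (best) to 0 and let the weakest one set the starting point."""
    missing = DIMENSIONS.keys() - answers.keys()
    if missing:
        raise ValueError(f"unanswered dimensions: {sorted(missing)}")
    # list.index raises ValueError for a label that is not in the checklist.
    scores = {dim: 3 - DIMENSIONS[dim].index(answers[dim]) for dim in DIMENSIONS}
    weakest = min(scores, key=scores.get)
    if scores[weakest] >= 2:
        stage = "launch a GenAI program"
    elif scores[weakest] == 1:
        stage = "pilot one low-risk use case via commercial APIs"
    else:
        stage = f"fix {weakest} first; limit work to experiments"
    return {"scores": scores, "weakest": weakest, "stage": stage}


print(assess({"data": "Good", "infrastructure": "Cloud-native", "governance": "Basic",
              "budget": "Moderate", "change": "Ready"}))
```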

EU AI Act and Generative AI: What's Changing

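The EU AI Act entered into force in August 2024 and applies in phases: prohibitions from February 2025, obligations for general-purpose AI models from August 2025, and most high-risk and transparency requirements from August 2026. Together, these phases reshape how generative AI can be deployed in Europe.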

Risk Classification for Generative AI

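Unacceptable Risk (Prohibited):

- AI systems that use manipulative or deceptive techniques to distort people's behavior in ways that cause significant harm

- Social scoring that leads to detrimental or unjustified treatment of people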

Generative AI itself is not prohibited, but specific harmful use cases are.

High-Risk AI (Strict Requirements):

- AI used for hiring decisions

- AI used for loan approvals

- AI used in law enforcement

- AI used for educational assessment

- AI used for critical infrastructure

If you fine-tune an LLM for hiring recommendations, it's high-risk. Requirements:

- Human-in-the-loop review (AI recommends; human decides)

- Explainability (ability to explain why AI recommended candidate A)

- Bias monitoring (measure performance across demographic groups)

- Data provenance (document training data sources)

Limited-Risk AI (Transparency Requirements):

- Chatbots, virtual assistants

- AI-generated content (deepfakes require disclosure)

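If you deploy a customer service chatbot, users must be told they are talking to AI, unless that is obvious from the context. If you publish deepfakes, or AI-generated text on matters of public interest that has not gone through human editorial review, you must disclose that the content is AI-generated.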

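General-Purpose AI Models (Provider Obligations):

- Foundation models that can be used for many purposes

- Obligations fall mainly on model providers: technical documentation, a copyright policy, and a public summary of training content; models with systemic risk face additional evaluation and incident-reporting duties

- Organizations that only use these models through an API have few direct obligations at the model level, but their specific use case may still be high-risk or limited-risk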
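A first pass at classifying your use cases, as the roadmap below recommends, can be as simple as tagging each one and mapping tags to tiers. The tags below are hypothetical shorthand for the categories listed above, not the Act's legal definitions, so treat the output as a starting point for legal review:

```python
# Illustrative tags mirroring the tiers above, checked strictest first.
TIERS = [
    ("prohibited", {"manipulative_techniques", "social_scoring"}),
    ("high", {"hiring", "credit_scoring", "law_enforcement", "education_assessment", "critical_infrastructure"}),
    ("limited", {"chatbot", "virtual_assistant", "deepfake", "synthetic_media"}),
]

OBLIGATIONS = {
    "prohibited": ["do not deploy"],
    "high": ["human oversight", "explainability", "bias monitoring", "data provenance documentation"],
    "limited": ["disclose AI interaction or AI-generated content"],
    "minimal": ["no AI Act-specific duties; GDPR still applies to personal data"],
}


def triage(tags: set[str]) -> str:
    """Return the strictest tier any tag triggers; untagged uses default to minimal."""
    for tier, triggers in TIERS:
        if tags & triggers:
            return tier
    return "minimal"


# Hypothetical inventory of generative AI use cases.
inventory = {
    "support chatbot": {"chatbot"},
    "CV screening assistant": {"hiring", "chatbot"},
    "internal meeting summaries": {"summarization"},
}
for name, tags in inventory.items():
    tier = triage(tags)
    print(f"{name}: {tier} -> {', '.join(OBLIGATIONS[tier])}")
```

Because the strictest tier wins, a chatbot that screens CVs lands in the high-risk tier, not the limited-risk one.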

Implications for Organizations

1. Fine-Tuned Models for High-Risk Use Cases Need Documentation

If you fine-tune Llama for recruitment, you must document:

- Training data sources and composition

- Performance across demographic groups (male/female, age, ethnicity if available)

- Testing and validation process

- Human review process
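For the demographic-performance item, a common starting metric for a recommender such as a CV screener is the selection rate per group and the ratio of the lowest rate to the highest. A minimal sketch on hypothetical validation data; the 0.8 threshold borrows the "four-fifths" rule of thumb from US employment practice, since the AI Act itself does not set a numeric threshold:

```python
from collections import defaultdict


def selection_rates(records: list[tuple[str, bool]]) -> dict[str, float]:
    """Share of candidates the model recommends, per demographic group."""
    totals: dict[str, int] = defaultdict(int)
    selected: dict[str, int] = defaultdict(int)
    for group, recommended in records:
        totals[group] += 1
        selected[group] += int(recommended)
    return {group: selected[group] / totals[group] for group in totals}


def impact_ratio(rates: dict[str, float]) -> float:
    """Lowest group rate divided by the highest; 1.0 means parity."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest else 1.0


# Hypothetical validation run: (group, was the candidate recommended?).
records = ([("group_a", True)] * 6 + [("group_a", False)] * 4
           + [("group_b", True)] * 3 + [("group_b", False)] * 7)
rates = selection_rates(records)
ratio = impact_ratio(rates)
print(rates, round(ratio, 2), "review needed" if ratio < 0.8 else "within threshold")
```

Small groups produce noisy rates, so record sample sizes alongside the ratio in your documentation.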

2. Transparency for Customer-Facing AI

If your chatbot interacts with customers, you should disclose: "This response was generated by AI. Please verify important information."

3. Data Retention and Deletion

Under GDPR + AI Act, you must:

- Delete personal training data on user request

- Retain audit trails for legal defense (documentation of decisions)

- Document any data used to train models
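For the audit-trail item, one simple pattern is an append-only log with one JSON line per AI-assisted decision, recording the model version, the AI output, and the human decision. A minimal sketch; the field names are hypothetical. It stores a SHA-256 fingerprint of the input instead of the input itself, so the log does not duplicate personal data, although such a hash typically counts as pseudonymized rather than anonymous data under GDPR.

```python
import hashlib
import io
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import TextIO


@dataclass(frozen=True)
class AuditRecord:
    timestamp: str        # UTC, ISO 8601
    model_version: str    # which model or prompt version produced the output
    input_sha256: str     # fingerprint of the input, not the input itself
    ai_output: str
    reviewer: str
    final_decision: str


def log_decision(sink: TextIO, model_version: str, model_input: str,
                 ai_output: str, reviewer: str, final_decision: str) -> AuditRecord:
    """Append one JSON line per AI-assisted decision; existing lines are never rewritten."""
    record = AuditRecord(
        timestamp=datetime.now(timezone.utc).isoformat(),
        model_version=model_version,
        input_sha256=hashlib.sha256(model_input.encode("utf-8")).hexdigest(),
        ai_output=ai_output,
        reviewer=reviewer,
        final_decision=final_decision,
    )
    sink.write(json.dumps(asdict(record), ensure_ascii=False) + "\n")
    return record


# In production the sink would be a file opened in append mode ("a") or a log service;
# an in-memory buffer keeps the example self-contained.
buffer = io.StringIO()
log_decision(buffer, "support-bot-v3", "Customer asks for a refund on order 1042",
             "Refund approved under 30-day policy", "agent-17", "approved")
print(buffer.getvalue())
```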

4. Third-Party Vendor Responsibility

If you use a SaaS platform (e.g., "AI customer support"), you remain responsible for your own obligations as a deployer, and you should verify that the vendor meets its obligations as a provider. Request their documentation of fairness testing, bias monitoring, etc.

Practical Roadmap for EU AI Act Compliance

Now (Late 2025):

- Audit your generative AI use cases. Classify by risk.

- For high-risk: Start bias testing, documentation, human review process

- For limited-risk: Add transparency notices to AI-generated content

2026–2027:

- Implement formal governance (AI governance board, policy framework)

- For high-risk: Complete human-in-the-loop workflows and explainability

- For all: Maintain audit trails and documentation

2027+:

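- Under the current timeline, the Act applies in full from August 2027, when obligations for high-risk AI embedded in regulated products (such as medical devices and machinery) take effect.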

Generative AI Consulting: What We Help With

Generative AI consulting helps organizations navigate the hype, identify genuine ROI, and deploy responsibly.

Common projects include:

1. Use Case Discovery (4 weeks)

- Audit your organization for generative AI opportunities

- Identify 5–10 high-ROI use cases

- Estimate labor savings, implementation cost, payback timeline

- Prioritize for pilot projects

2. Proof-of-Concept (4–8 weeks)

- Build a PoC using commercial APIs

- Measure accuracy, user satisfaction, labor time reduction

- Document findings and business case

- Decision: Proceed to production or pivot

3. Production Deployment (8–16 weeks)

- Architecture design (API vs. fine-tuned model)

- Data preparation and fine-tuning (if applicable)

- Integration with existing systems

- Governance and compliance framework

- Training and change management

4. EU AI Act Compliance (Ongoing)

- Risk classification for your AI systems

- Bias testing and documentation

- Human review process design

- Audit trail and explainability setup

Frequently Asked Questions

Q: Will generative AI replace my team? A: Generative AI augments teams rather than replacing them. Customer support agents become "AI quality reviewers." Engineers become "AI code auditors." Writers become "AI editors." Job content changes; total headcount may drop 20–30%, but expertise becomes more valuable.

Q: Should we use ChatGPT or train our own model? A: Start with ChatGPT for speed and cost. Once you prove value and have volume or privacy needs, evaluate fine-tuning. Most organizations take a hybrid approach: ChatGPT for experiments, a fine-tuned model for core workflows.

Q: How do we ensure AI-generated content is accurate? A: LLMs hallucinate: they generate plausible-sounding but false information. Always have humans review critical content. For customer support, implement feedback loops: customers flag incorrect answers, and you correct the knowledge base or retrain the model. For marketing/content, editorial review is non-negotiable.

Q: Is generative AI legal in the EU? A: Yes, but regulated. General use is permitted. High-risk applications require governance (human oversight, bias testing). Transparency required for limited-risk applications. No prohibition on generative AI itself.

Q: What's the difference between generative AI and regular AI? A: Regular AI (machine learning) classifies or predicts. Generative AI creates novel content. Regular AI says "this email is spam"; GenAI writes a customer email. Both are AI; different purposes and risk profiles.

Q: How do we prevent bias in generative AI? A: Test the model on diverse examples. If fine-tuning, audit training data for representation. Monitor real-world predictions for bias drift. Document fairness tests. For high-risk applications (hiring, credit), conduct formal bias audits and maintain human review.

Q: Can we fine-tune an LLM on our proprietary data? A: Yes, if you handle the data securely and with proper governance. For sensitive data (customer, financial, patient), fine-tune on-premise or in a private cloud rather than through public APIs. Sign a data processing agreement (DPA) with every vendor that handles the data.

Start Your Generative AI Journey

Generative AI is not a phase. It's reshaping how knowledge work is done. Organizations that master it—identifying real use cases, deploying responsibly, maintaining human oversight—will gain significant competitive advantage.

The key is moving from experimentation to systematic deployment. From "What can ChatGPT do?" to "How does generative AI create measurable business value for us?"

Digital Colliers specializes in generative AI consulting—from discovery and PoC through production deployment and EU AI Act compliance. We've helped dozens of European organizations identify high-ROI GenAI use cases, build production systems, and navigate regulatory requirements.

If you're ready to move generative AI beyond ChatGPT experiments to systematic business value, let's talk. We'll assess your readiness, identify your best opportunities, and build a roadmap to production.

Schedule a discovery call today. Let's explore where generative AI creates the most impact for your organization.