RAG Implementation Guide: Building Retrieval-Augmented Generation Systems

Large language models (LLMs) are powerful, but they have a critical limitation: they only know what was in their training data. Ask ChatGPT about your company's latest product roadmap or proprietary research, and it will confabulate—generating plausible but often false answers.

Retrieval-Augmented Generation (RAG) addresses this problem. RAG systems combine the reasoning capability of LLMs with access to your private data, creating AI assistants that can answer questions about your company's documents, policies, customer data, and operational context.

This guide walks you through building a production-grade RAG system. We'll cover the architecture, each stage of the pipeline, technology choices, and implementation best practices.

For a deeper dive into AI implementation for your organization, explore our AI implementation services to see how we help enterprise teams deploy AI systems that drive real business value.

What Is RAG and Why It Matters

RAG stands for Retrieval-Augmented Generation. The idea is simple but powerful:

- When a user asks a question, retrieve relevant documents or data from your knowledge base

- Augment the LLM's prompt with that retrieved context

- Generate an answer that's grounded in your actual data, not hallucinated

This matters because:

Hallucination Problem: LLMs can confidently generate false information. RAG reduces hallucinations by grounding responses in actual sources.

Knowledge Currency: Training data for public LLMs is months or years old. RAG lets you answer from information that is as current as your most recent ingestion run.

Proprietary Context: LLMs can't know your company's specific data—your customer list, product docs, internal policies, research. RAG makes this accessible to AI assistants.

Accountability: When your AI assistant says something, you can trace it back to the source document. This is critical for regulated industries (finance, healthcare, legal).

Cost: You don't need to fine-tune a massive model. RAG works with existing LLMs, reducing infrastructure costs.

The RAG Architecture Pipeline

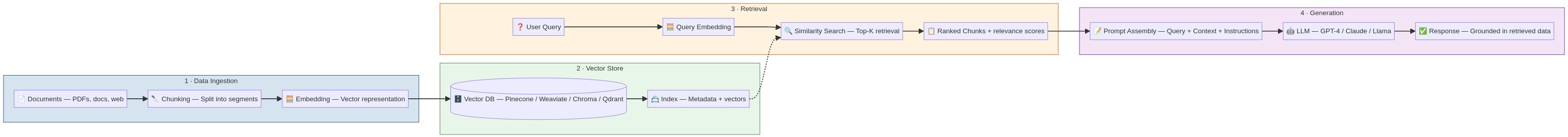

The RAG pipeline has four stages: Data Ingestion (documents to embeddings), Vector Store (storage and indexing), Retrieval (finding relevant context), and Generation (creating the response).

Let's walk through each stage:

Stage 1: Data Ingestion

Data ingestion is where you prepare your knowledge base for RAG. This stage has three steps:

Step 1A: Document Collection and Preparation

Start by identifying what documents or data sources should be accessible to your RAG system.

Common sources:

- Internal documentation (product docs, API references, user guides)

- Policy and compliance documents

- Research papers, whitepapers, technical reports

- Customer support tickets and FAQs

- Email archives or communication logs

- Database records (structured data converted to text)

- Web pages from your website or internal wiki

Preparation work:

- Export documents in consistent formats (PDF, HTML, plain text)

- Remove duplicate content (duplicates waste vector storage and can crowd other relevant chunks out of the results)

- Clean up formatting and encoding issues

- Remove highly sensitive information (credentials, private keys, confidential data)

- Add metadata (document title, date, source, author, category) for filtering later
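A minimal sketch of the deduplication and metadata steps, assuming documents have already been exported to plain text. The Document type, its field names, and hashing whitespace-collapsed text with SHA-256 are illustrative assumptions, not any particular library's API. It only catches exact duplicates (including copies that differ only in spacing); near-duplicates need fuzzier techniques.

```python
import hashlib
import unicodedata
from dataclasses import dataclass, field


@dataclass
class Document:
    # Hypothetical record type for illustration; not from any particular library.
    text: str
    metadata: dict = field(default_factory=dict)


def dedupe_key(text: str) -> str:
    """Hash the text with whitespace collapsed, so copies that differ only in spacing match."""
    collapsed = " ".join(text.split())
    return hashlib.sha256(collapsed.encode("utf-8")).hexdigest()


def prepare(raw_docs: list[tuple[str, dict]]) -> list[Document]:
    """Normalize Unicode, drop empty docs and exact duplicates, and attach metadata."""
    seen = set()
    prepared = []
    for text, meta in raw_docs:
        # NFKC folds compatibility characters (e.g. non-breaking spaces, ligatures).
        clean = unicodedata.normalize("NFKC", text).strip()
        key = dedupe_key(clean)
        if not clean or key in seen:
            continue  # skip empty documents and exact duplicates
        seen.add(key)
        prepared.append(Document(clean, {**meta, "content_hash": key}))
    return prepared


docs = prepare([
    ("Reset your password\nfrom Settings.", {"title": "FAQ", "source": "wiki"}),
    ("Reset  your password from Settings.", {"title": "FAQ (copy)", "source": "email"}),
    ("Billing runs on the 1st.", {"title": "Billing", "source": "wiki"}),
])
```

The second entry is dropped because it differs from the first only in whitespace. Keeping content_hash in the metadata also makes it cheap to detect changed documents on later ingestion runs.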

This step is often underestimated. Garbage in, garbage out. If your documents are poorly formatted, redundant, or out-of-date, your RAG system will inherit those problems.

Step 1B: Chunking

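LLMs have context windows (token limits), and embedding models have input limits of their own. You can't feed an entire 500-page manual into an LLM's prompt, and even when a long document would fit, a few focused passages are cheaper to send and easier for retrieval to match precisely. You need to split documents into smaller chunks that fit within these limits while maintaining semantic coherence.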

Chunking strategies:

Fixed-size chunks: Split documents into chunks of N tokens (e.g., 512 tokens). Simple, but can break semantic units awkwardly (for example, splitting a sentence in the middle).

Semantic chunking: Use NLP to identify natural boundaries (paragraphs, sections, sentences) and chunk along those. Better quality but more complex.

Recursive chunking: Split by semantic boundaries (sections, paragraphs), then fall back to fixed size if chunks are still too large. Often the best balance of quality and simplicity.

Metadata-aware chunking: If documents have clear structure (headers, hierarchies), chunk along those boundaries and preserve hierarchy as metadata.

Rule-based chunking: For specific document types (contracts, research papers), write rules that capture domain-specific structure.
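A minimal sketch of recursive chunking. It counts words as a rough stand-in for tokens (an assumption; production code would count tokens with the embedding model's tokenizer) and tries paragraph breaks first, then line breaks, then spaces. Libraries such as LangChain ship a ready-made version of this idea (RecursiveCharacterTextSplitter).

```python
def recursive_chunk(text: str, max_words: int = 200,
                    separators: tuple = ("\n\n", "\n", " ")) -> list[str]:
    """Split on the coarsest separator first, recursing into pieces that are still too long."""
    if len(text.split()) <= max_words:
        return [text.strip()] if text.strip() else []
    if not separators:
        # No boundaries left: fall back to a fixed-size split by words.
        words = text.split()
        return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]
    sep, finer = separators[0], separators[1:]
    chunks = []
    current = ""
    for piece in text.split(sep):
        candidate = f"{current}{sep}{piece}" if current else piece
        if len(candidate.split()) <= max_words:
            current = candidate  # merge small neighbouring pieces into one chunk
            continue
        if current.strip():
            chunks.append(current.strip())
        if len(piece.split()) > max_words:
            # This piece alone is too big: split it with the next, finer separator.
            chunks.extend(recursive_chunk(piece, max_words, finer))
            current = ""
        else:
            current = piece
    if current.strip():
        chunks.append(current.strip())
    return chunks


text = "Intro paragraph here.\n\n" + "word " * 30 + "\n\nShort end."
chunks = recursive_chunk(text, max_words=10)
```

The short paragraphs survive intact, while the 30-word paragraph is the only one that gets split further, into three 10-word chunks.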

Example: a product documentation page has this structure:

# Getting Started

## Installation

### Mac

### Windows

## Configuration

Structure-aware chunking (semantic or metadata-aware) would create separate chunks for "Installation > Mac" and "Installation > Windows" rather than combining them into one giant "Installation" chunk.
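A minimal sketch of metadata-aware chunking for the example above, assuming the source is Markdown with # headings. It emits one chunk per section and records the heading path as metadata. The function name and the "section" key are illustrative. A real splitter should also skip # lines inside fenced code samples, which this sketch would misread as headings.

```python
import re

HEADING = re.compile(r"^(#{1,6})\s+(.*)$")


def chunk_by_headings(markdown: str) -> list[dict]:
    """Emit one chunk per section, tagged with its heading path as metadata."""
    path = []    # current heading hierarchy, e.g. ["Getting Started", "Installation"]
    body = []
    chunks = []

    def flush() -> None:
        text = "\n".join(body).strip()
        if text:  # headings with no body text of their own produce no chunk
            chunks.append({"text": text, "section": " > ".join(path)})
        body.clear()

    for line in markdown.splitlines():
        match = HEADING.match(line)
        if match:
            flush()
            level = len(match.group(1))
            del path[level - 1:]  # drop headings at this level or deeper
            path.append(match.group(2).strip())
        else:
            body.append(line)
    flush()
    return chunks


doc = """# Getting Started
## Installation
### Mac
Run the .pkg installer.
### Windows
Run the .msi installer.
## Configuration
Edit config.yaml."""
chunks = chunk_by_headings(doc)
```

The "section" value (for example "Getting Started > Installation > Mac") can be stored alongside the embedding for filtering and attribution.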

Chunk size considerations:

- Too small (50 tokens): Loses context; generates many chunks; slower retrieval

- Too large (2000+ tokens): One embedding has to summarize many topics, so retrieval is less precise, and each retrieved chunk uses more of the LLM's context

- Common starting point: 256-512 tokens per chunk for most use cases

Chunk overlap: Include 10-20% overlap between chunks (e.g., last 50 tokens of one chunk are the first 50 of the next). This preserves context at boundaries and helps retrieval when a question spans two chunks.
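A minimal sketch of fixed-size chunking with overlap, using the numbers from the text (512-token chunks, 50-token overlap). It operates on a pre-tokenized list; how you tokenize (for example, with the embedding model's tokenizer) is left as an assumption.

```python
def fixed_size_chunks(tokens: list[str], size: int = 512, overlap: int = 50) -> list[list[str]]:
    """Split tokens into windows of `size`, each sharing `overlap` tokens with the previous one."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be at least 0 and smaller than size")
    step = size - overlap  # each new window starts this many tokens after the last one
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + size])
        if start + size >= len(tokens):
            break  # this window already reaches the end of the document
    return chunks


tokens = [f"t{i}" for i in range(1200)]  # stand-in for a tokenized document
chunks = fixed_size_chunks(tokens, size=512, overlap=50)
```

A 1,200-token document yields three chunks; the last 50 tokens of each chunk reappear as the first 50 of the next, so a sentence cut at a boundary is still whole in one of the two chunks.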

Step 1C: Embedding

Once you have clean chunks, convert each chunk into a numerical representation called an embedding. Embeddings capture the semantic meaning of text in a high-dimensional space. Similar chunks have similar embeddings.

How embeddings work:

- A text chunk is converted to a vector of numbers (typically a few hundred to a few thousand dimensions, e.g., 384-3072 depending on the model)

- Semantically similar chunks produce similar vectors

- You can measure similarity with mathematical operations (cosine similarity, Euclidean distance)
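The two similarity measures can be sketched in a few lines. The 3-dimensional vectors below are toy values chosen for illustration; real embeddings come from a model and have hundreds or thousands of dimensions.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Dot product over the product of lengths: 1.0 = same direction, 0.0 = orthogonal."""
    dot = sum(x * y for x, y in zip(a, b))
    norms = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norms if norms else 0.0


def euclidean_distance(a: list[float], b: list[float]) -> float:
    """Straight-line distance between the vectors: smaller means more similar."""
    return math.dist(a, b)


# Toy "embeddings" for illustration only.
query = [0.9, 0.1, 0.0]
chunk_a = [0.8, 0.2, 0.1]   # pretend: a chunk on the same topic as the query
chunk_b = [0.0, 0.3, 0.9]   # pretend: an unrelated chunk
```

Here chunk_a scores about 0.98 cosine similarity against the query and chunk_b about 0.03. If vectors are normalized to unit length (some embedding APIs, such as OpenAI's, return them that way), both measures give the same ranking, because for unit vectors the squared distance equals 2 - 2 * cosine.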

Embedding models:

Popular open-source models:

- all-MiniLM-L6-v2: Fast, 384 dimensions, good for most use cases

- BGE-base-en-v1.5: 768 dimensions, high quality, slower

- e5-large-v2: 1024 dimensions, very high quality for semantic search

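- Llama 2 embeddings: Sometimes suggested for staying in the Llama ecosystem, but Llama 2 is a generative model rather than a dedicated embedding model, so the models above are usually a better fit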

Commercial APIs:

- OpenAI's text-embedding-3-large: High quality, $0.13 per 1M tokens

- Cohere's embed-english-v3.0: High quality, competitive pricing

- Azure OpenAI: Same as OpenAI but through Azure infrastructure

Embedding cost trade-offs:

- Larger models (1024+ dims) are more accurate but slower and need more storage

- Smaller models (384 dims) are fast but less nuanced

- Open-source models are free but require infrastructure

- Commercial APIs charge per token but handle scale automatically

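Pro tip: You can use different embedding models for different content types. For instance, use a domain-specific legal embedding model for contracts and general-purpose embeddings for everything else. Because each model produces its own vector space, keep each model's vectors in a separate index and embed queries with the matching model. This can improve quality without overspending.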

Stage 2: Vector Store (Storage and Indexing)

After embedding your chunks, you need a database that can store embeddings and find similar ones quickly. This is where vector databases come in.

Vector Database Technologies

Managed vector databases (easiest to use):

- Pinecone: Fully managed, auto-scaling, serverless. $0.40 per million vectors monthly. Best for most projects.

- Weaviate Cloud: Managed SaaS version of the open-source Weaviate database. Pay-as-you-go. Good for flexibility.

- Supabase (pgvector): Postgres with the pgvector extension. If you already use Postgres, this is cheaper than running a separate database.

- Qdrant Cloud: Managed, high-performance, open-source alternative. Competitive pricing.

Self-hosted vector databases (more control, more ops):

- Milvus: Open-source, high-performance, can handle billions of vectors

- Weaviate (self-hosted): Same features as cloud version, you manage infrastructure

- Qdrant (self-hosted): Same as cloud version, self-managed

- FAISS (Facebook AI Similarity Search): A research library rather than a production database, but useful for prototyping

Postgres extensions (if you want to consolidate databases):

- pgvector: The standard Postgres extension; supported on managed services such as Amazon RDS for PostgreSQL

- Widely used in production, so tooling and hosting support are mature

Choose based on:

- Scale: Pinecone handles scale automatically; self-hosted requires ops investment

- Cost: Self-hosted tends to be cheaper at large scale (10M+ vectors); Pinecone tends to be cheaper at small scale

- Features: Do you need metadata filtering? Hybrid search (vector + keyword)? Real-time updates?

- Integration: Does it plug into your existing stack easily?

Storage and Indexing Strategy

When you insert embeddings into a vector database, the database indexes them for fast retrieval. Understanding indexing helps you optimize performance.

Indexing methods:

Flat (brute-force search): Every query compares against every embedding. Accurate but slow for large datasets. Fine for <100k vectors.

HNSW (Hierarchical Navigable Small World): Creates a graph structure for fast approximate similarity search. The default in many vector databases. Fast even for millions of vectors.

IVF (Inverted File Index): Clusters vectors and searches only relevant clusters. Fast and memory-efficient. Good for very large datasets.

PQ (Product Quantization): Compresses vectors to save space while maintaining approximate similarity. Used when storage is a constraint.

Many vector databases pick a sensible default index for your scale. For RAG projects, HNSW usually works well.
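To make the flat (brute-force) method concrete, here is a minimal in-memory sketch. The FlatIndex class is hypothetical; FAISS provides production versions of the same exact-search idea (IndexFlatIP, IndexFlatL2). It normalizes vectors on insert so that cosine similarity becomes a plain dot product.

```python
import heapq
import math


class FlatIndex:
    """Exact (brute-force) search: every query is compared with every stored vector."""

    def __init__(self) -> None:
        self.ids = []
        self.vectors = []

    @staticmethod
    def _normalize(v: list[float]) -> list[float]:
        norm = math.sqrt(sum(x * x for x in v)) or 1.0  # avoid dividing by zero
        return [x / norm for x in v]

    def add(self, chunk_id: str, vector: list[float]) -> None:
        # Normalizing once at insert time turns cosine similarity into a dot product.
        self.ids.append(chunk_id)
        self.vectors.append(self._normalize(vector))

    def search(self, query: list[float], k: int = 5) -> list[tuple[str, float]]:
        q = self._normalize(query)
        scored = (
            (chunk_id, sum(a * b for a, b in zip(q, v)))
            for chunk_id, v in zip(self.ids, self.vectors)
        )
        # O(n) work per query: fine for small collections, which is why larger ones use HNSW or IVF.
        return heapq.nlargest(k, scored, key=lambda pair: pair[1])


index = FlatIndex()
index.add("mac", [1.0, 0.0, 0.0])
index.add("win", [0.9, 0.1, 0.0])
index.add("billing", [0.0, 0.0, 1.0])
results = index.search([1.0, 0.0, 0.0], k=2)
```

Approximate indexes such as HNSW and IVF exist to avoid exactly this linear scan, trading a small amount of recall for much lower latency on large collections.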

Metadata Storage

Store metadata (document title, source, date, category, chunk position) alongside embeddings. This lets you:

- Filter results by source or category

- Trace answers back to original documents

- Re-rank results based on metadata

- Provide attribution

Example metadata:

{
  "document_id": "doc_12345",
  "chunk_id": 7,
  "document_title": "API Reference v2.3",
  "source_url": "https://docs.example.com/api",
  "date_updated": "2026-03-15",
  "category": "technical",
  "section": "Authentication",
  "word_count": 287
}

Stage 3: Retrieval (Finding Relevant Context)

When a user asks a question, you need to find the most relevant chunks from your vector database. This is where retrieval happens.

Step 3A: Query Embedding

Convert the user's question into an embedding using the same embedding model you used for the chunks. If you used all-MiniLM-L6-v2 for chunks, use it for queries too. Mismatched models tank retrieval quality.

Step 3B: Similarity Search

Find the K most similar embeddings to your query embedding. "Similar" means closest in vector space, measured by cosine similarity or Euclidean distance.

Key parameters:

- K (number of results): Usually 3-10. Retrieving too many chunks wastes LLM context; too few misses relevant information.

- Similarity threshold: Sometimes require a minimum similarity score (e.g., cosine > 0.7) to avoid irrelevant results. The right cutoff depends on the embedding model, so calibrate it on real queries.

- Filters: Restrict search to specific documents, dates, or categories. Critical for multi-tenant systems.
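A sketch combining the three parameters above: metadata filters are applied first (pre-filtering), then the similarity cutoff, then the top K. Records are plain dicts with hypothetical "vector" and "metadata" keys; vector databases expose the same ideas through their own filter syntax. The tenant filter illustrates the multi-tenant case: without it, one customer's query could retrieve another customer's chunks.

```python
import math
from typing import Any, Optional


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norms = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norms if norms else 0.0


def retrieve(query_vec: list[float], records: list[dict], k: int = 5,
             min_score: float = 0.7,
             filters: Optional[dict[str, Any]] = None) -> list[dict]:
    """Filter by metadata, score by cosine similarity, drop weak matches, return the top k."""
    filters = filters or {}
    candidates = [
        r for r in records
        if all(r["metadata"].get(key) == value for key, value in filters.items())
    ]
    scored = [(cosine(query_vec, r["vector"]), r) for r in candidates]
    kept = sorted((pair for pair in scored if pair[0] >= min_score),
                  key=lambda pair: pair[0], reverse=True)
    return [{**r, "score": score} for score, r in kept[:k]]


records = [
    {"id": "a", "vector": [1.0, 0.0], "metadata": {"tenant": "acme", "category": "technical"}},
    {"id": "b", "vector": [0.9, 0.1], "metadata": {"tenant": "globex", "category": "technical"}},
    {"id": "c", "vector": [0.0, 1.0], "metadata": {"tenant": "acme", "category": "billing"}},
]
acme_hits = retrieve([1.0, 0.0], records, filters={"tenant": "acme"})
all_hits = retrieve([1.0, 0.0], records)
```

With the tenant filter, only "a" survives ("c" belongs to the right tenant but falls below the 0.7 cutoff); without it, "b" from another tenant also appears.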

Step 3C: Re-Ranking (Optional but Recommended)

Similarity search isn't perfect. A second pass with a cross-encoder model can re-rank results by actual relevance rather than embedding similarity.

Without re-ranking: The top-K chunks by vector similarity are passed to the LLM.

With re-ranking: A larger candidate set (for example, the top 50 by vector similarity) is scored by a cross-encoder, a model that reads the question and each chunk together and outputs a relevance score. The highest-scoring few are passed to the LLM.

This adds latency (100-500ms for re-ranking 50 chunks) but often improves quality noticeably. Recommended if you're passing more than 5 chunks to the LLM.

Libraries:

- Sentence Transformers (Python): Includes a CrossEncoder class and pretrained cross-encoder models

- Cohere's rerank API: Commercial, high quality, priced per search (a query plus its candidate documents) rather than per token
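The two-stage flow can be sketched independently of any particular model. In this sketch, score_pair is a pluggable function; the overlap_score stand-in (share of query words found in the chunk) is a toy used only so the example runs without downloads, not a cross-encoder. In practice score_pair could wrap a real cross-encoder, such as Sentence Transformers' CrossEncoder, whose predict method scores a list of (query, passage) pairs; batching all pairs into one call is more efficient than scoring them one by one.

```python
from typing import Callable


def rerank(query: str, candidates: list[str],
           score_pair: Callable[[str, str], float],
           top_n: int = 5) -> list[tuple[str, float]]:
    """Re-score each (query, chunk) pair with a slower, more accurate scorer; keep the best top_n."""
    scored = [(chunk, score_pair(query, chunk)) for chunk in candidates]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_n]


def overlap_score(query: str, chunk: str) -> float:
    """Toy stand-in for a cross-encoder: fraction of query words that appear in the chunk."""
    q_words = set(query.lower().split())
    c_words = set(chunk.lower().split())
    return len(q_words & c_words) / len(q_words) if q_words else 0.0


query = "how do I rotate an API key"
invoices = "Invoices are emailed on the first of each month"
rights = "API key rotation requires admin rights"
howto = "To rotate an API key open the Keys page in Settings"
# Pretend these came back from vector search in this (imperfect) order.
top = rerank(query, [invoices, rights, howto], overlap_score, top_n=2)
```

The re-ranker promotes the how-to chunk above the one that merely mentions API keys, which is the kind of correction a real cross-encoder makes more reliably.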

Retrieval Strategies Beyond Simple Similarity

Hybrid search (vector + keyword): Run a vector similarity search and a keyword/full-text search (such as BM25) for the same query, then merge the two result lists into a single ranking, either by combined relevance score or by rank.

Good for: Queries with exact terms that must match (product names, error codes, IDs), which vector search alone can miss, while the vector side still catches synonyms and paraphrases that keyword search misses.
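One common way to merge the two lists is reciprocal rank fusion (RRF), sketched below. It works on ranks rather than raw scores, so BM25 scores and cosine similarities never need to be put on the same scale. The constant k=60 is a commonly used default; the chunk IDs are made up for illustration.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[tuple[str, float]]:
    """Merge ranked ID lists: each list adds 1 / (k + rank) for every ID it contains."""
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


vector_hits = ["doc_7", "doc_2", "doc_9"]    # ranked by embedding similarity
keyword_hits = ["doc_2", "doc_4", "doc_7"]   # ranked by keyword/BM25 score
fused = reciprocal_rank_fusion([vector_hits, keyword_hits])
```

Chunks found by both searches (doc_2, doc_7) rise to the top, while chunks found by only one search still make the list.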

Multi-query retrieval: Generate multiple versions of the user's question and retrieve results for each version. Merge results.

Example: User asks "How do I set up billing?" System generates:

- "How do I set up billing?"

- "Configure billing account"

- "Billing account setup"

- "Payment setup"

Retrieve results for all four and merge. Catches more relevant documents than a single query.
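A minimal sketch of the merge step, assuming a hypothetical search function that returns (chunk_id, score) pairs for one query. The query variants would normally be generated by an LLM; here they are hard-coded, and the fake index stands in for a real vector store. Keeping each chunk's best score assumes scores are comparable across queries (same embedding model); a rank-based merge is an alternative when they are not.

```python
from typing import Callable


def multi_query_retrieve(queries: list[str],
                         search: Callable[[str], list[tuple[str, float]]],
                         k: int = 5) -> list[tuple[str, float]]:
    """Run every query variant, keep each chunk's best score, return the overall top k."""
    best = {}
    for query in queries:
        for chunk_id, score in search(query):
            if score > best.get(chunk_id, float("-inf")):
                best[chunk_id] = score  # deduplicate: one entry per chunk, highest score wins
    return sorted(best.items(), key=lambda item: item[1], reverse=True)[:k]


# Hypothetical results per query variant, standing in for a vector-store lookup.
fake_index = {
    "How do I set up billing?": [("billing_faq", 0.82), ("pricing", 0.61)],
    "Configure billing account": [("billing_admin", 0.88), ("billing_faq", 0.79)],
    "Payment setup": [("payment_methods", 0.85), ("pricing", 0.64)],
}
results = multi_query_retrieve(list(fake_index), lambda q: fake_index.get(q, []), k=3)
```

The original wording alone would have surfaced only billing_faq and pricing; the variants bring in billing_admin and payment_methods.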

Hierarchical retrieval: If documents have a clear structure (categories, subcategories, sections), retrieve at a higher level first (category), then drill down. This can be faster and more accurate than a flat search, provided the first-level choice is reliable.

Metadata filtering with retrieval: Combine semantic search with metadata filters: "Find documents about API authentication published in the last 3 months."

This is critical for systems with large document collections where metadata provides strong signals.

Optimizing Retrieval Quality

Retrieval is the most common bottleneck in RAG systems. Garbage in (bad retrieval) = garbage out (poor answers).

Optimization checklist:

- Are you using the right K value? (Typically 3-10, rarely more than 15)

- Are chunks the right size? (Too small loses context; too large is inefficient)

- Are you filtering by metadata to reduce noise?

- Are you re-ranking results?

- Are you monitoring retrieval quality? (Are retrieved chunks actually relevant to the query?)

- Are you handling query reformulation? (If user is asking a follow-up, are you maintaining conversation context?)

Debugging retrieval:

- Manually run queries and inspect what gets retrieved. Are the results relevant?

- If top results are bad, re-examine chunking and embedding strategies

- Try different embedding models for your specific domain

- Implement re-ranking and measure improvement

Stage 4: Generation (Creating the Response)

Once you have relevant chunks, use an LLM to generate an answer.

Step 4A: Prompt Assembly

Create a prompt that includes:

- System instructions (role, tone, constraints)

- Retrieved context (the actual chunks)

- The user's question

- Any conversation history (if multi-turn)

Example prompt:

You are a helpful assistant answering questions about our API.
Use the context provided. If the context doesn't contain relevant information,
say "I don't have that information" rather than guessing.
Always cite the source document for your answers.

CONTEXT:
{retrieved_chunks}

QUESTION:
{user_question}

ANSWER:

Prompt engineering for RAG:

- Be explicit about using context: "Use the provided context to answer"

- Tell the model to cite sources: "Always cite which document contains this information"

- Set expectations for edge cases: "If context is insufficient, say so"

- Include few-shot examples if answers need specific format

- Keep prompts concise; you're paying per token for long prompts
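A minimal sketch of prompt assembly using the example prompt above. Each chunk is numbered and labeled with its title and URL so the model has something concrete to cite. The chunk dicts reuse the metadata field names from the earlier example (document_title, source_url); the "text" key and the max_chunks cap are assumptions.

```python
SYSTEM_PROMPT = (
    "You are a helpful assistant answering questions about our API.\n"
    "Use the context provided. If the context doesn't contain relevant information,\n"
    'say "I don\'t have that information" rather than guessing.\n'
    "Always cite the source document for your answers."
)


def build_prompt(question: str, chunks: list[dict], max_chunks: int = 5) -> str:
    """Fill the template with numbered, attributed chunks so the model can cite them."""
    context = "\n\n".join(
        f"[{i}] {c['document_title']} ({c['source_url']})\n{c['text']}"
        for i, c in enumerate(chunks[:max_chunks], start=1)
    )
    return f"{SYSTEM_PROMPT}\n\nCONTEXT:\n{context}\n\nQUESTION:\n{question}\n\nANSWER:"


prompt = build_prompt(
    "How do I authenticate?",
    [{"document_title": "API Reference v2.3",
      "source_url": "https://docs.example.com/api",
      "text": "Send the key in the Authorization header."}],
)
```

Capping the number of chunks keeps the prompt short, which matters because every input token is billed.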

Step 4B: LLM Processing

Send the assembled prompt to an LLM. For RAG, you have options:

OpenAI's GPT-4 or GPT-4o:

- Among the highest-quality options

- ~$0.03-$0.06 per 1K tokens (input); ~$0.06-$0.15 per 1K tokens (output) at original GPT-4 pricing; GPT-4o costs considerably less

- Best for customer-facing features or high-stakes decisions

Anthropic's Claude 3:

- Competitive with GPT-4; excellent for following instructions precisely

- ~$0.003-$0.015 per 1K tokens (input); ~$0.015-$0.075 per 1K tokens (output)

- Good for retrieval and reasoning tasks

Open-source models (Llama 2, Mistral, etc.):

- Free; you pay for infrastructure

- Good quality for general tasks; weaker for complex reasoning

- Great for privacy-sensitive use cases, since data can stay on your own infrastructure

Smaller models (GPT-3.5, Mixtral):

- Cheaper; faster

- Good for simple fact retrieval tasks

- Not recommended for complex reasoning

For RAG specifically: The quality of your retrieved context often matters more than the LLM size. A large model with bad context typically produces worse answers than a smaller model with excellent context. So optimize retrieval first, then choose the smallest LLM that meets your quality bar.

Step 4C: Response Generation and Streaming

Generate the answer. If latency matters (customer-facing chatbots), stream the response token-by-token to the user, improving perceived speed.

Response quality improvements:

- Have the LLM cite sources: "Based on the API documentation..."

- Include confidence levels: "I'm confident about this based on..."

- Suggest follow-up questions: "Would you also like to know about...?"

- Track response quality with user feedback (thumbs up/down, ratings)

Implementation: Building Your First RAG System

Minimal RAG System (prototype in about 48 hours)

If you want to build a working RAG system quickly:

- Data: Export documents (10-50 PDFs or documents)

- Embedding: Use LangChain or LlamaIndex with OpenAI embeddings (~$1-5 cost)

- Vector store: Use Pinecone free tier or local FAISS

- Retrieval: Simple similarity search, K=5

- LLM: Use OpenAI's GPT-4 or GPT-3.5

- Glue: LangChain or LlamaIndex (frameworks that wire these together)

Cost: ~$100-500 per month for embeddings and LLM calls

Time to value: 2-4 weeks

Limitation: No re-ranking, no hybrid search, no conversation memory

Production RAG System (4-12 weeks)

For enterprise use:

- Data pipeline: Automated document ingestion from multiple sources, versioning, quality checks

- Embedding: Domain-specific or fine-tuned embedding model

- Chunking: Sophisticated semantic chunking with metadata

- Vector store: Managed (Pinecone) or self-hosted (Milvus) at scale

- Retrieval: Hybrid search, metadata filtering, re-ranking

- LLM: Multiple models for different use cases (GPT-4 for complex, GPT-3.5 for simple)

- Conversation: Memory and context management across turns

- Feedback loop: User ratings, logging, monitoring

- Security: Authentication, access control per user, document security

Cost: $5k-$20k monthly for large-scale systems

Time to value: 8-12 weeks

Limitation: No major functional gaps, but the system needs ongoing monitoring and maintenance

Technology Stack: Recommended Components

For rapid prototyping:

- Framework: LangChain or LlamaIndex

- Embeddings: OpenAI

- Vector store: Pinecone or local FAISS

- LLM: OpenAI GPT-3.5 or GPT-4

- Orchestration: Python scripts or Flask API

For production:

- Framework: LangChain or Haystack (more enterprise-focused)

- Embeddings: Cohere, Azure OpenAI, or open-source (BGE-M3)

- Vector store: Pinecone, Weaviate, or Milvus

- LLM: Multiple models (OpenAI, Anthropic, open-source)

- Orchestration: FastAPI or built-in LLM API gateways

- Monitoring: LLMOps platforms (LangSmith, Baseline, WhyLabs)

- Data pipeline: Airflow or Prefect for document ingestion

- Database: PostgreSQL with pgvector, combined with Postgres full-text search for hybrid search

Best Practices and Common Pitfalls

Best Practices

1. Start with retrieval quality: Don't optimize the LLM until retrieval is working well. A good retrieval result with a mediocre LLM usually beats a strong LLM with bad retrieval.

2. Monitor retrieval quality: Track, in logs or metrics:

- Are retrieved chunks actually relevant?

- Do they answer the user's question?

- How often are users asking follow-ups immediately after a response?

3. Implement user feedback loops: Simple thumbs up/down ratings after responses reveal which retrieval or LLM failures matter most.

4. Update documents regularly: RAG systems are only as good as their knowledge base. Stale documentation produces stale answers. Automate ingestion where possible.

5. Use conversation context: If a user asks a follow-up question, include previous messages in the query. This usually improves relevance substantially.

6. Optimize cost aggressively: Use smaller embedding models and LLMs where they meet your quality bar. Every token costs money, so a cheaper model with the same quality is better.

7. Test on real queries: Don't judge RAG systems on synthetic queries. Test with actual customer questions, customer support tickets, or your team's real questions.

Common Pitfalls

Pitfall 1: Over-engineering retrieval. You don't need the most complex hybrid search and re-ranking for most use cases. Start simple (vector similarity, K=5). Add complexity only if the simple version isn't good enough.

Pitfall 2: Ignoring document quality. If your knowledge base has outdated, conflicting, or inaccurate information, RAG will faithfully reproduce those problems. Clean documents first.

Pitfall 3: Chunking without thinking. Naive chunking (splitting documents at character 512, 1024, etc.) loses semantic structure. Spend time on intelligent chunking.

Pitfall 4: Too much context to the LLM. Passing 15+ chunks to the LLM costs money and dilutes signal. Use fewer, higher-quality chunks instead. Re-ranking helps here.

Pitfall 5: Forgetting to handle edge cases. What happens when no relevant chunks are found? When the user asks something outside your knowledge base? Plan for these cases.

Pitfall 6: Not monitoring in production. RAG systems degrade over time. Documents become stale. User patterns change. Monitor continuously.

Measuring RAG System Performance

How do you know if your RAG system is working?

Automatic metrics (use as proxies):

- Retrieval precision: Of top-K retrieved chunks, what fraction are relevant? (Manually evaluate 100 queries, mark chunks relevant/irrelevant)

- Retrieval recall: Of all relevant chunks in your database, what fraction are in top-K? (Hard to measure exhaustively, since it requires knowing every relevant chunk; many teams rely mainly on precision)

- BLEU/ROUGE scores: Similarity between generated answer and reference answer. Imperfect but fast.
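The two retrieval metrics are straightforward to compute once a reviewer has labeled which chunks are relevant for each query. In this sketch the evaluation set is made up for illustration, and precision@k divides by k (the standard definition).

```python
from statistics import mean


def precision_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Share of the top-k retrieved chunk IDs that a reviewer marked relevant."""
    return sum(1 for chunk_id in retrieved[:k] if chunk_id in relevant) / k


def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Share of all known-relevant chunk IDs that made it into the top k."""
    return len(set(retrieved[:k]) & relevant) / len(relevant) if relevant else 0.0


# Hand-labeled set: (chunk IDs retrieved for a query, chunk IDs judged relevant).
labeled = [
    (["c1", "c4", "c7", "c2", "c9"], {"c1", "c2", "c3"}),
    (["c5", "c6", "c8", "c3", "c1"], {"c3"}),
]
mean_precision_at_5 = mean(precision_at_k(r, rel, k=5) for r, rel in labeled)
mean_recall_at_5 = mean(recall_at_k(r, rel, k=5) for r, rel in labeled)
```

For this set, mean precision@5 is 0.3 and mean recall@5 is about 0.83. Tracking both on a fixed set of real queries makes it easy to see whether a chunking or embedding change actually helped.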

User metrics (actual feedback):

- Thumbs up/down: Binary feedback on answer quality

- 5-star rating: More granular feedback

- Task completion: Did the answer actually help the user complete their task?

- Conversation length: Long conversations might indicate the user had to ask follow-ups (negative signal)

For enterprise systems, track:

- Answer latency (should be <5 seconds for interactive use)

- Cost per query (embeddings + LLM calls)

- Error rate (how often do retrieval/generation fail?)

- User satisfaction over time (trending up or down?)
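Cost per query is simple arithmetic over token counts. This sketch uses prices quoted earlier in this guide ($0.13 per 1M embedding tokens for text-embedding-3-large; $0.003 / $0.015 per 1K input/output tokens at the low end of the Claude 3 range). The token counts (five 400-token chunks plus 300 tokens of instructions and question, a 300-token answer, a 20-token query embedding) are assumptions for illustration.

```python
def cost_per_query(input_tokens: int, output_tokens: int,
                   price_in_per_1k: float, price_out_per_1k: float,
                   embed_tokens: int = 0, embed_price_per_1m: float = 0.0) -> float:
    """Dollar cost of one RAG query: query embedding plus LLM input and output tokens."""
    embedding = embed_tokens / 1_000_000 * embed_price_per_1m
    llm = input_tokens / 1000 * price_in_per_1k + output_tokens / 1000 * price_out_per_1k
    return embedding + llm


# Assumed: 5 chunks x 400 tokens + 300 tokens of instructions/question = 2,300 input tokens.
per_query = cost_per_query(input_tokens=2300, output_tokens=300,
                           price_in_per_1k=0.003, price_out_per_1k=0.015,
                           embed_tokens=20, embed_price_per_1m=0.13)
monthly_at_100k = per_query * 100_000
```

Under these assumptions a query costs about $0.0114, or roughly $1,140 per 100k queries, and almost all of it is LLM input and output. Sending fewer chunks per prompt is one of the most direct cost levers.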

Deployment Considerations

Hosting Options

Serverless (fastest to deploy):

- AWS Lambda + API Gateway

- Google Cloud Functions

- Azure Functions

- Pros: No infrastructure management; scales automatically

- Cons: Cold starts add latency; limited control

Containers (flexible):

- Docker on AWS ECS or Kubernetes

- Good balance of control and simplicity

Managed AI services:

- Azure OpenAI has built-in RAG features

- AWS Bedrock (Knowledge Bases for Amazon Bedrock provides managed RAG)

- Reduced implementation overhead

Security Considerations

For systems handling sensitive documents:

- Authenticate users; check permissions per query

- Encrypt documents at rest

- Log all queries and retrieved documents for audit

- Consider on-premise deployment for regulated industries

- Don't send sensitive docs to public LLM APIs; use self-hosted models or private deployments instead

Multi-tenancy: If multiple customers share your RAG system:

- Isolate each customer's vectors (separate indexes, namespaces, or databases)

- Filter retrieval by customer/user permissions

- Log queries per customer for audit and billing

Costs: Real-World Examples

Small System (100 documents, 10k queries/month)

- Embeddings: ~$50/month

- Vector storage (Pinecone): ~$20/month (the free tier may cover a system this size)

- LLM (GPT-3.5): ~$100/month

- Total: ~$170/month

Medium System (1000 documents, 100k queries/month)

- Embeddings: ~$500/month

- Vector storage (Pinecone): ~$200/month

- LLM (GPT-3.5): ~$1000/month

- Total: ~$1700/month

Large System (100k documents, 1M queries/month)

- Embeddings: ~$5000/month

- Vector storage (self-hosted Milvus): ~$2000/month infrastructure

- LLM (GPT-3.5 or cheaper model): ~$10k/month

- Total: ~$17k/month

These estimates are raw costs. Production systems also need monitoring, operations, and re-ranking, which add roughly another 20-30%.

Getting Started

If you're ready to build a RAG system:

- Identify documents: What knowledge should be accessible to your AI system?

- Choose framework: LangChain or LlamaIndex (both solid, pick one)

- Build MVP: Use managed services (Pinecone, OpenAI) to get a prototype in days

- Test retrieval: Does it actually find relevant documents?

- Optimize LLM prompts: Make the model follow your style and cite sources

- Collect feedback: Measure what users think

- Iterate: Improve chunking, embedding, retrieval based on real data

For complex systems or multi-tenant scenarios, partner with our AI implementation team to accelerate development and avoid costly mistakes.

FAQ

Q: Is RAG the same as fine-tuning? A: No. Fine-tuning retrains the LLM on your data (expensive, slow). RAG retrieves your data at query time (cheap, fast, updatable). RAG is usually better for document-heavy use cases; fine-tuning for teaching the model a specific style or behavior.

Q: Can we use RAG with local/open-source LLMs? A: Absolutely. Llama 2, Mistral, or any open-source model works with RAG. You just need a vector database and retrieval logic. Trade-off: lower cost and stronger privacy, but typically lower quality than GPT-4.

Q: How do we handle multi-turn conversations with RAG? A: Include previous messages in the context. When the user asks a follow-up, either (a) include the full conversation history in the LLM prompt, or (b) retrieve based on the entire conversation, not just the latest message. Compress conversation history if it gets long (keep only recent turns).
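A minimal sketch of two of these ideas: building the retrieval query from recent user turns, and keeping only recent messages for the LLM prompt. Message dicts with "role" and "content" keys are an assumed format (common in chat APIs), and the turn limits are arbitrary. Concatenation is the simplest approach; a common alternative is asking the LLM to rewrite the follow-up into a standalone question, which this sketch does not do.

```python
def build_retrieval_query(history: list[dict], question: str, max_turns: int = 3) -> str:
    """Prefix the new question with recent user turns so follow-ups keep their subject."""
    recent_user_turns = [m["content"] for m in history if m["role"] == "user"][-max_turns:]
    return " ".join(recent_user_turns + [question])


def trim_history(history: list[dict], max_messages: int = 6) -> list[dict]:
    """Keep only the latest messages for the LLM prompt (the 'keep only recent turns' compression)."""
    return history[-max_messages:]


history = [
    {"role": "user", "content": "How do I rotate an API key?"},
    {"role": "assistant", "content": "Open Settings, then Keys, then choose Rotate."},
]
query = build_retrieval_query(history, "Does it invalidate the old one?")
```

On its own, "Does it invalidate the old one?" has no subject for retrieval to match; prefixed with the previous question, it retrieves chunks about API key rotation.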

Q: What if relevant documents exist but retrieval doesn't find them? A: This is a retrieval failure. Debug by: (1) checking your embedding model (try a different one), (2) examining chunking (are chunks too small?), (3) using hybrid search (combine keyword + vector), (4) adjusting K (retrieve more results), (5) implementing re-ranking.

Q: How often should we update/re-embed documents? A: If documents change frequently (daily), re-embed daily. If quarterly, once per quarter. The rule: re-embed when your knowledge base significantly changes. Spot-updating (re-embedding just changed documents) is more efficient than full re-embedding.
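Spot-updating can be driven by content hashes recorded at each ingestion run. In this sketch, the document IDs, the stored-hash mapping, and the plan format are hypothetical; the idea is simply to compare today's documents with what was indexed last time and touch only what changed.

```python
import hashlib


def content_hash(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def plan_reindex(current_docs: dict[str, str],
                 indexed_hashes: dict[str, str]) -> dict[str, list[str]]:
    """Diff today's documents against the hashes stored at the last ingestion run."""
    current_hashes = {doc_id: content_hash(text) for doc_id, text in current_docs.items()}
    return {
        # New documents: chunk and embed for the first time.
        "embed": sorted(d for d in current_hashes if d not in indexed_hashes),
        # Changed documents: delete their old chunks, then chunk and embed again.
        "re_embed": sorted(d for d, h in current_hashes.items()
                           if d in indexed_hashes and indexed_hashes[d] != h),
        # Removed documents: delete their chunks so stale answers disappear.
        "delete": sorted(d for d in indexed_hashes if d not in current_hashes),
    }


indexed = {"doc_1": content_hash("v1 text"), "doc_2": content_hash("same"),
           "doc_3": content_hash("retired")}
today = {"doc_1": "v2 text", "doc_2": "same", "doc_4": "brand new"}
plan = plan_reindex(today, indexed)
```

Unchanged documents (doc_2 here) cost nothing to keep, which is what makes frequent update schedules affordable.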

Q: Can RAG handle real-time data (current stock prices, weather, live search results)? A: No, not directly. RAG retrieves from vectors that are only as fresh as the last ingestion. For real-time data, (a) ingest and embed it on a frequent schedule (workable if the data changes hourly rather than second by second), (b) skip RAG and call a live API, or (c) use a hybrid approach (RAG for documents, API for real-time data).

Q: What's the latency of a RAG query? A: Typical breakdown: embedding (100-200ms) + retrieval (50-200ms) + re-ranking (100-500ms if enabled) + LLM generation (1-5 seconds). Total: roughly 1.25-6 seconds. Optimize by caching embeddings, using smaller models, and optimizing vector database queries.